Waist-up protection for blind individuals using the EyeCane as a primary and secondary mobility aid

Abstract

Background: One of the most stirring statistics in relation to the mobility of blind individuals is the high rate of upper body injuries, even when using the white-cane.

Objective: We addressed the rehabilitation-oriented challenge of providing blind people with a reliable tool for avoiding waist-up obstacles, one of the main impediments to their successful mobility using currently available methods (e.g., the white-cane).

Methods: We used the EyeCane, a device we developed which translates distances from several angles to haptic and auditory cues in an intuitive and unobtrusive manner, serving both as a primary and secondary mobility aid. We investigated the rehabilitation potential of such a device in facilitating visionless waist-up body protection.

Results: After ∼5 minutes of training with the EyeCane blind participants were able to successfully detect and avoid obstacles waist-high and up. This was significantly higher than their success when using the white-cane alone. As avoidance of obstacles required participants to perform an additional cognitive process after their detection, the avoidance rate was significantly lower than the detection rate.

Conclusion: Our work has demonstrated that the EyeCane has the potential to extend the sensory world of blind individuals by expanding their currently accessible inputs, and has offered them a new practical rehabilitation tool.

1 Introduction

Imagine yourself walking down the street while texting, when suddenly your head collides with an unnoticed tree branch. Although such incidents are on the rise (Nasar & Troyer, 2013), they are less common among sighted people than among blind and visually impaired individuals, who are even more vulnerable to such collisions because they cannot notice such obstructions even when fully attentive. Such accidents have occurred to 88% of blind individuals who participated in a survey regarding accidents experienced by people with visual impairments over the course of their life (Manduchi, 2011). Additionally, 18% of them reported such an injury occurring at least once a month, and 23% reported the need for significant medical attention due to such an incident. The common mobility aids for blind and visually impaired individuals, aimed at facilitating independent mobility, are the white-cane (Hoover, 1950; Rodgers & Emerson, 2005) and the guide-dog (Jacobson & H., 2010; Knol, Roozendaal, van den Bogaard, & Bouw, 1988; Küpfer, 1992). These aids indeed assist in navigation and in the avoidance of obstacles from the waist down, thus protecting the users’ lower body, but the users’ upper body is left vulnerable to collisions with many obstacles (Manduchi, 2011). Various devices, either primary (i.e. devices which are used as stand-alone mobility aids) or secondary (i.e. devices which are used to supplement primary devices) (Hersh & Johnson, 2008), were developed in an attempt to tackle this issue and provide blind and visually impaired individuals with upper-body protection. For instance, the UltraCane (Penrod, Corbett, & Blasch, 2005), a primary mobility aid, consists of a specialized cane for lower-body safety which has two ultrasonic beams, pointing straight ahead and at an angle, that protect the upper body via haptic cues.
The Sonic Pathfinder (La Grow, 1999), a secondary mobility aid, uses a sonar beam mounted on the user’s head, alerting the user via audio cues to obstacles at waist height and above. This device does not provide information regarding obstacles lower than the waist and therefore needs to be coupled with a primary aid such as a white-cane or guide-dog.

To the best of our knowledge, none of these devices has yet been widely accepted by blind and visually impaired users. This is mainly due to their price (Dakopoulos & Bourbakis, 2010; Kim & Cho, 2013), the intense training required for initial use (Nau, Bach, & Fisher, 2013; Strumillo, 2003) and/or the cumbersomeness of the devices (Calder, 2010).

Here we attempted to address this upper-body protection challenge with an adapted version of the EyeCane (Buchs, Maidenbaum, & Amedi, 2014; Maidenbaum, Hanassy, et al., 2014; Maidenbaum, Levy-Tzedek, Chebat, & Amedi, 2013). The EyeCane was developed with a special focus on the intuitiveness of its use and quick learning curve, minimizing cost and encumbrance and maximizing technical usability with the goal of increasing real-world use of the device. For the purpose of this study, the pointing direction of the sensors was adapted to provide information regarding obstacles in the height of the waist and up.

In this study, we chose to examine the detection and avoidance of obstacles separately, as there is a significant difference between perceiving the existence of an obstacle and using that perceptual knowledge to execute a proper motion to avoid it. This difference is expected to affect the success level of participants in these two tasks, as we would expect the detection level to be higher than the avoidance level. The detection level can serve as an estimate of the potential avoidance level participants can reach while using the EyeCane once they have gained more experience with it.

Additionally, in real-life scenarios, although the avoidance of obstacles is the overall main goal, the detection is important in and of itself. Potentially, this exploration of the environment will also decrease significantly any possible damage as even if avoidance is not perfect, users will be careful after the detection of an obstacle. In addition to this knowledge being crucial for successful avoidance, it provides blind and visually impaired individuals with other possibilities to overcome these obstructions, such as seeking help.

Alongside testing the detection and avoidance of waist-up obstacles with the aid of the EyeCane, we examined the versatility of this device when used as a primary device, i.e. stand-alone, or as a secondary device, i.e. mounted on a white-cane. This unique flexibility, which enables users to choose their preferred setup (switching between setup modes takes only several seconds for a trained individual), will potentially increase the actual use of this device in the real world, in addition to the abovementioned benefits.

2 Methods

2.1 The EyeCane

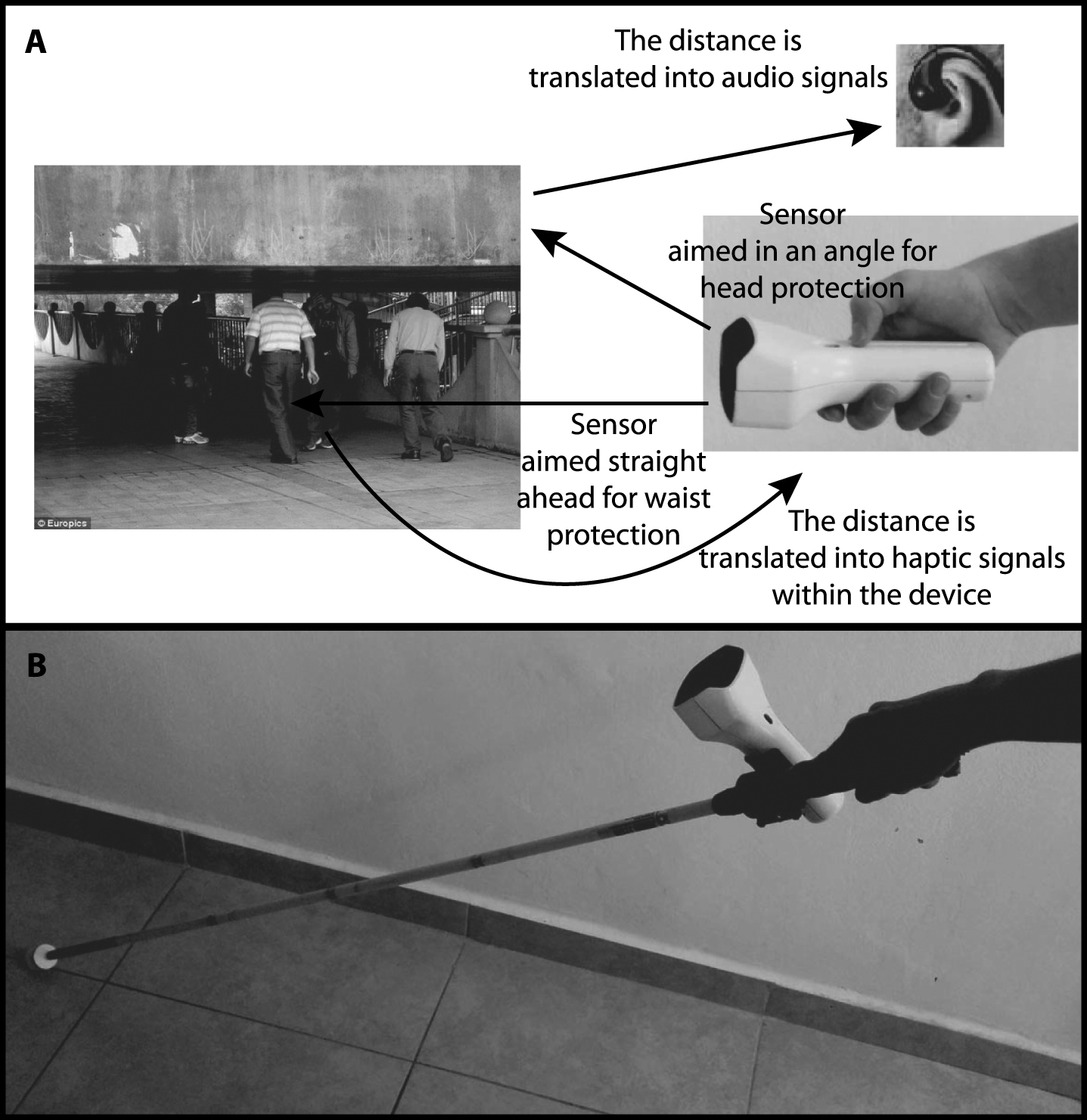

For this experiment, we used the EyeCane (Buchs et al., 2014; Maidenbaum, Levy-Tzedek, Chebat, Namer-Furstenberg, & Amedi, 2014; Maidenbaum, Hanassy, et al., 2014), a hand-held device which instantaneously (50 Hz) transforms distance information into sound and vibration, such that the closer an object is to the user, the stronger the vibration of the haptic actuator and the higher the frequency of the auditory cues. The EyeCane was adapted to include two narrow infra-red (IR) sensors with a sensing range of 1.5 m, such that one of the sensors was directed straight ahead while the other was directed approximately 42 degrees up (see Fig. 1A). Each of the sensors conveyed its output via a unique modality, audio or haptic.
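The distance-to-cue principle described above can be illustrated with a minimal sketch. Only the 1.5 m sensing range and the 50 Hz update rate come from the text; the linear mapping, the 200–2000 Hz tone band, and all names below are assumptions made for illustration and do not describe the device's actual firmware. In the study each sensor drove a single modality, whereas the sketch computes both cue values for one reading to show the two mappings side by side.

```python
from dataclasses import dataclass

MAX_RANGE_M = 1.5     # sensing range stated in the text
UPDATE_RATE_HZ = 50   # update rate stated in the text (one reading every 20 ms)


@dataclass
class Cue:
    vibration: float  # 0.0 (off) to 1.0 (strongest); hypothetical scale
    tone_hz: float    # 0.0 means silence; hypothetical frequency band


def proximity(distance_m: float) -> float:
    """Return 0.0 at or beyond the sensing range and 1.0 at zero distance."""
    if distance_m >= MAX_RANGE_M:
        return 0.0
    clamped = max(distance_m, 0.0)
    return 1.0 - clamped / MAX_RANGE_M


def distance_to_cue(distance_m: float,
                    min_tone_hz: float = 200.0,
                    max_tone_hz: float = 2000.0) -> Cue:
    """Map one distance reading to a vibration strength and a tone frequency.

    The linear mapping and the default tone band are assumptions.
    """
    p = proximity(distance_m)
    if p == 0.0:
        # Nothing within range: no vibration and no sound.
        return Cue(vibration=0.0, tone_hz=0.0)
    tone = min_tone_hz + p * (max_tone_hz - min_tone_hz)
    return Cue(vibration=p, tone_hz=tone)


if __name__ == "__main__":
    for d in (2.0, 1.2, 0.75, 0.3):
        print(d, distance_to_cue(d))
```

Under these assumptions, an object halfway into the sensing range (0.75 m) yields half-strength vibration and a tone in the middle of the band, and both cues grow as the object gets closer.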

The EyeCane used in this experiment was configured either as a stand-alone device or mounted on a white-cane. In the stand-alone condition, the haptic information was transferred to the user through a vibrating element embedded within the device. In the condition where the EyeCane was mounted on the white-cane (see Fig. 1B), the tactile output reached a wrist band through cables connected to the device; this was done to reduce confusion between the vibration of the EyeCane and that coming from the white-cane’s contact with the floor. In both conditions, stand-alone and mounted, the audio information reached the left ear via bone-conductance headphones.

2.2 Participants

A total of 16 blind participants (9 female, age 40±10.05 years, 11 congenitally blind, 2 who became blind within one to two years of birth, 1 who became blind at the age of 25, and 2 who became blind at the age of 28) participated in this study. Half of the participants used the EyeCane as a stand-alone device (4 female, age 39±9 years, 6 congenitally blind, 1 who became blind within a year of birth and 1 who became blind at the age of 25), and the other half used it mounted on the white-cane (5 female, age 42±11.4 years, 5 congenitally blind, 1 who became blind at the age of 1 and 2 who became blind at the age of 28) (see Supplementary Table 1 for detailed information regarding participants and EyeCane setups). Participants were assigned to a sub-group according to their order of enrollment in the experiment. The first 8 participants used the EyeCane as a stand-alone device, while the last 8 participants used it mounted on the white-cane.

2.3 Ethics

The experiment was approved by the ethics committee of the Hebrew University. All participants signed their informed consent.

To ensure participants’ safety, the stimuli were made of cardboard. As an additional precaution, participants were followed by a dedicated experimenter ensuring their safety. None of the participants fell or was harmed in any way during the experiment.

2.4 Stimuli

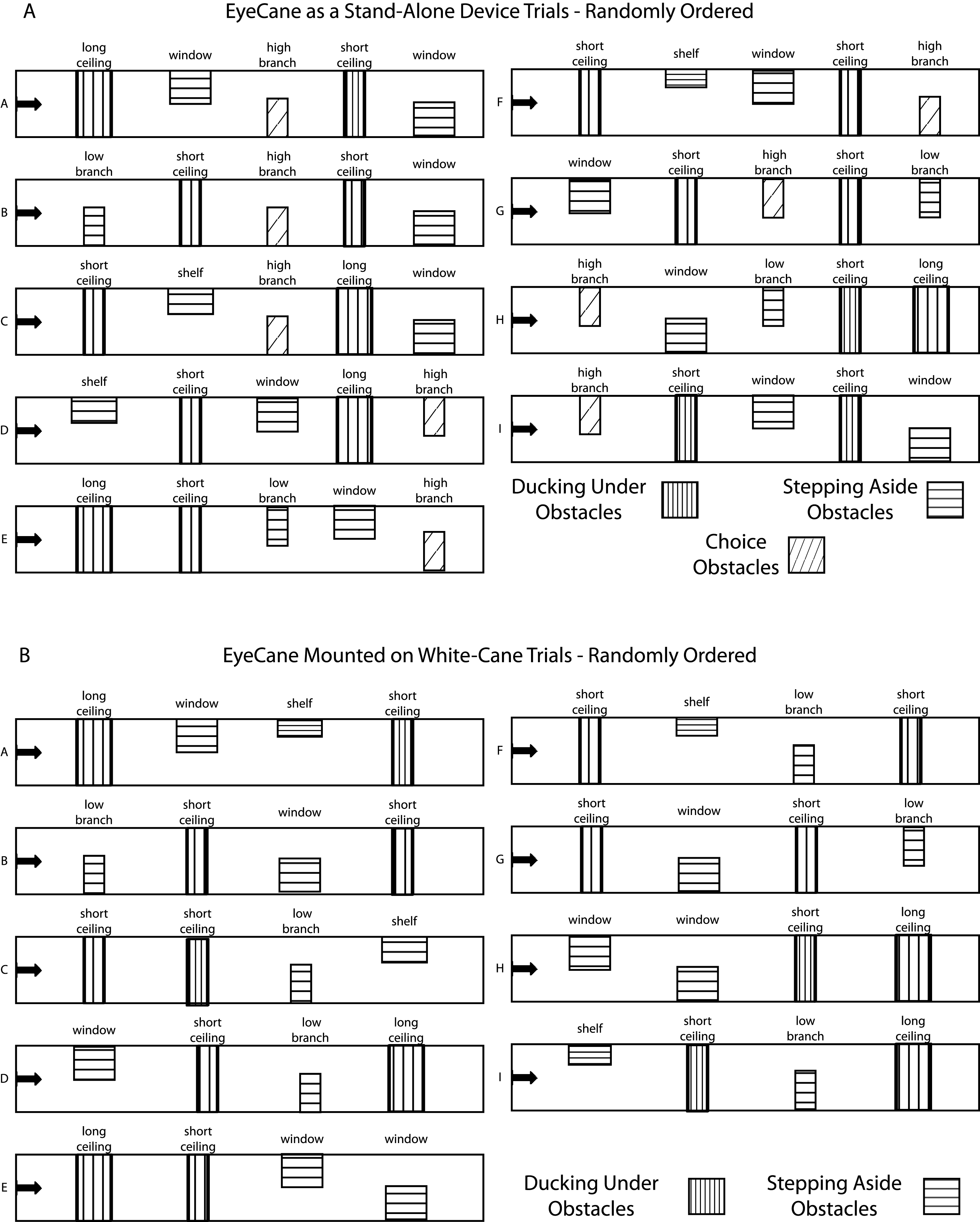

We designed safe cardboard obstacles simulating real-life waist-up obstructions potentially encountered daily. These obstacles were divided according to the avoidance method required to maneuver past them successfully and comfortably: ducking-under them and stepping around them.

Duck-under obstacles were obstructions at head height that took up the entire width of the corridor; therefore, the only way to avoid them successfully was to duck under them. They simulated two types of low ceilings: a short ceiling and a longer ceiling. The length of the ceiling determined the distance over which participants needed to duck while walking in order to pass the obstacle without collision, i.e. the longer ceiling required participants to duck for a longer distance.

Step-around obstacles simulated three types of daily-life obstructions waist-high and up: an open window, a shelf, and a low hanging branch, varying in their height from the ground and in their length. These obstructions, which were hung from the left or right side of the corridor, occupied only part of the corridor’s width, thereby enabling participants to bypass them by stepping aside and walking around them (see Fig. 2 for exact details).

For training, there were three types of obstacles; the first was a cardboard box which was hung from the ceiling at different heights in the middle of a wide corridor. The other obstacles simulated a window and a shelf.

2.5 Procedure

The experiment included three parts: training, test, and control.

2.5.1 Training

The training first included a short verbal explanation regarding the functionality of the EyeCane. Participants then used the device, either as a stand-alone device or mounted on a white-cane, while encountering a cardboard box twice. The box was hung at a different height in each trial, so that ducking under it was sometimes convenient, while going around it was always an option. Training also included encountering either a cardboard window or a cardboard shelf (chosen randomly), which were passed by walking around them, and experiencing the perception of a wall via the device.

The duration of the entire training was ∼5 minutes and took place in a wide corridor.

2.5.2 Test

The test stage included 7 trials, in which participants were tasked with walking down a corridor while verbally detecting and physically avoiding Duck-under and Step-around obstacles with the aid of the EyeCane, mounted on a white-cane or as a stand-alone device. When the EyeCane was mounted on the white-cane participants used the white-cane in the normal fashion, thus the white-cane was not raised to touch the obstacles. In each trial, the participant encountered 4 obstacles, including 2 Duck-under obstacles and 2 Step-around obstacles. Participants were not told the number of obstacles. The obstacles varied in their location and type (see Fig. 2 for details about the different setups).

Note that the group using the EyeCane as a stand-alone device had 7 trials with 5 obstacles each; the additional obstacle could be passed either by ducking under it or by stepping around it. This fifth obstacle was eliminated from the trials of the group who used the EyeCane mounted on the white-cane. That group had 8 trials with 4 obstacles in each; the additional trial was included to minimize the gap between the overall number of obstacles encountered by the two groups.
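The effect of the additional trial on the obstacle totals can be checked with simple arithmetic. The sketch below uses only the trial and obstacle counts stated above; the variable names are illustrative.

```python
# Trial and obstacle counts as stated in the text.
STANDALONE_TRIALS, STANDALONE_OBSTACLES_PER_TRIAL = 7, 5
MOUNTED_TRIALS, MOUNTED_OBSTACLES_PER_TRIAL = 8, 4

# Total obstacles encountered by each participant in the test stage.
standalone_total = STANDALONE_TRIALS * STANDALONE_OBSTACLES_PER_TRIAL
mounted_total = MOUNTED_TRIALS * MOUNTED_OBSTACLES_PER_TRIAL

# What the mounted group would have encountered without the extra trial.
mounted_without_extra_trial = (MOUNTED_TRIALS - 1) * MOUNTED_OBSTACLES_PER_TRIAL

gap_with_extra_trial = standalone_total - mounted_total
gap_without_extra_trial = standalone_total - mounted_without_extra_trial

print(standalone_total, mounted_total)                 # 35 32
print(gap_with_extra_trial, gap_without_extra_trial)   # 3 7
```

With the extra trial, the two groups differ by 3 obstacles per participant rather than 7.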

2.5.3 Control

For control, participants performed an additional trial. This trial had the same format as the test but was performed using only a white-cane, the main tool typically available to them in everyday life. For the participants who used the EyeCane mounted on the white-cane for the test trials, the EyeCane stayed mounted on the white-cane for the control trial but was turned off. We will later refer to the control condition as ‘white-cane alone’.

This control test was performed by 15 of the 16 participants (see Supplementary Table 1 for exact details).

2.6 Statistical analysis

All statistical analyses were conducted using the Wilcoxon rank-sum test, unless otherwise specified. All statistical results were corrected for multiple comparisons using the strict Bonferroni correction and are presented after correction.
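For readers unfamiliar with this procedure, the following is a minimal standard-library sketch of a two-sided rank-sum test followed by a Bonferroni correction. It assumes the normal approximation without tie correction, which may differ from the exact variant used in the study, and the success rates in the usage example are made-up illustrative values, not data from this experiment.

```python
import math
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the current run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def rank_sum_test(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Two-sided rank-sum test using the normal approximation.

    Returns (z, p). No tie or continuity correction is applied (assumption).
    """
    n1, n2 = len(x), len(y)
    ranks = average_ranks(list(x) + list(y))
    w = sum(ranks[:n1])                        # rank sum of the first sample
    expected = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - expected) / sd
    p = math.erfc(abs(z) / math.sqrt(2))       # two-sided tail probability
    return z, p


def bonferroni(p_values: Sequence[float]) -> List[float]:
    """Multiply each p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


if __name__ == "__main__":
    # Illustrative success rates only; not data from the study.
    with_device = [0.9, 1.0, 0.8, 1.0, 0.95]
    without_device = [0.0, 0.25, 0.0, 0.5, 0.0]
    z, p = rank_sum_test(with_device, without_device)
    print(z, bonferroni([p, 0.2]))
```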

For most statistical analyses, we treated the two sub-groups (EyeCane as a stand-alone device and EyeCane mounted on a white-cane) as a single group, except when directly comparing them. To pool the sub-groups, we excluded from the analysis the additional fifth obstacle encountered in the trials of the stand-alone group and the additional trial of the group using the EyeCane mounted on the white-cane.

Successful detection was scored when participants verbally reported noticing obstacles, or when they took clear active steps to avoid them (moving away or ducking under) even if they neglected to report their detection. Successful avoidance was scored when participants detected the obstacles and then passed them without any contact (bodily and of the assistive device used) with any part of the obstacles.
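The scoring rules above can be expressed as a short sketch. The record structure and field names are hypothetical and introduced only for illustration; the logic follows the criteria as stated, with avoidance counted only for detected obstacles passed without any contact by the body or the assistive device.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class Encounter:
    """One participant-obstacle encounter (hypothetical record layout)."""
    verbal_report: bool   # participant verbally reported noticing the obstacle
    evasive_action: bool  # clear active step: moving away or ducking under
    body_contact: bool    # any body part touched the obstacle
    device_contact: bool  # the assistive device touched the obstacle


def detected(e: Encounter) -> bool:
    """Detection: a verbal report or a clear active avoidance step."""
    return e.verbal_report or e.evasive_action


def avoided(e: Encounter) -> bool:
    """Avoidance: detected first, then passed with no contact at all."""
    return detected(e) and not (e.body_contact or e.device_contact)


def success_rates(encounters: Sequence[Encounter]) -> Tuple[float, float]:
    """Return (detection %, avoidance %) over a list of encounters."""
    n = len(encounters)
    detection_pct = 100.0 * sum(detected(e) for e in encounters) / n
    avoidance_pct = 100.0 * sum(avoided(e) for e in encounters) / n
    return detection_pct, avoidance_pct


if __name__ == "__main__":
    trial = [
        Encounter(True, False, False, False),   # reported and passed cleanly
        Encounter(False, True, True, False),    # ducked but brushed the obstacle
        Encounter(False, False, False, False),  # bypassed without noticing
        Encounter(True, True, False, True),     # device touched the obstacle
    ]
    print(success_rates(trial))  # (75.0, 25.0)
```

Note that the third encounter counts as neither detected nor avoided, since an obstacle bypassed without awareness is excluded from successful avoidance.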

3 Results

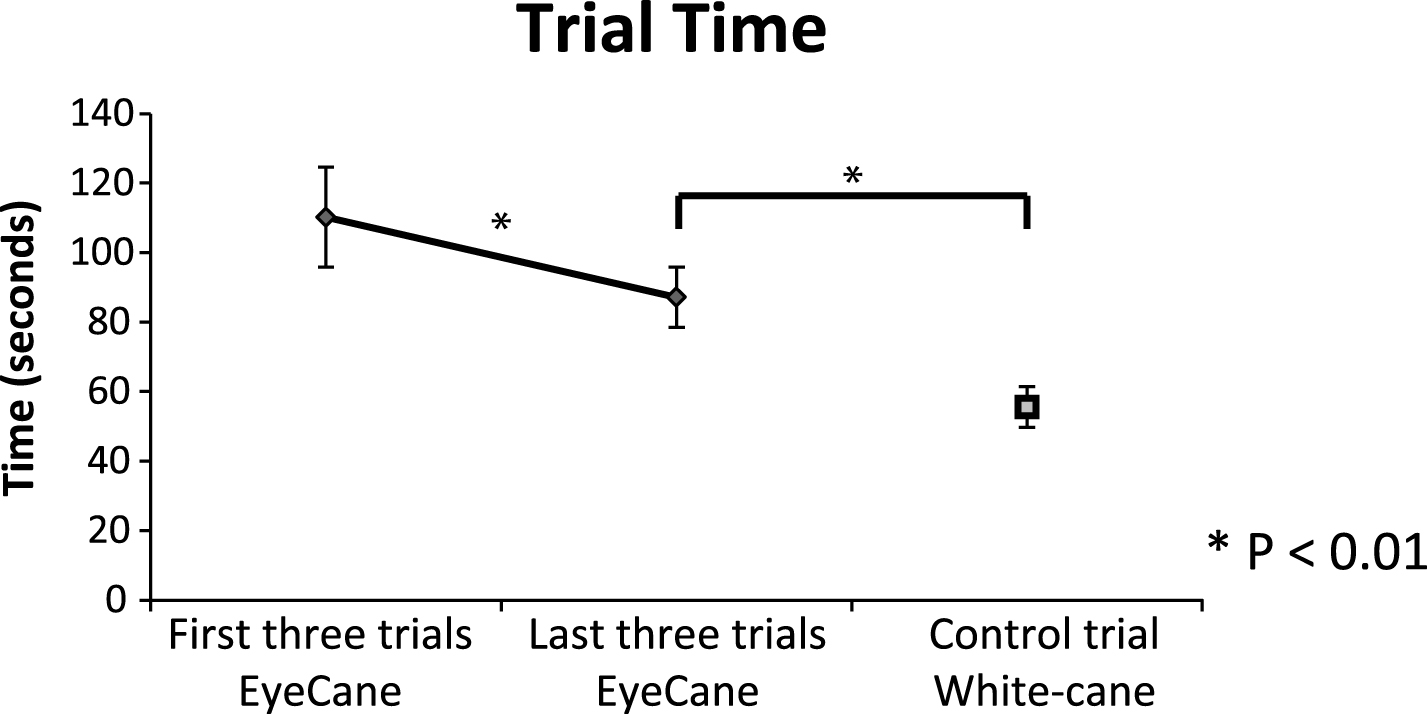

In terms of detection, participants successfully detected 95±6% (average±STD) of the Duck-under obstacles in the test trials while using the EyeCane, significantly more than in the control with the white-cane alone, 13±30% (p < 0.0001) (see Fig. 3A). Their detection of Step-around obstacles while using the EyeCane was also significantly higher than in the control trials: 58±13% with the EyeCane compared to 7±18% with the white-cane alone (p < 0.00006) (see Fig. 3A). The detection of Duck-under obstacles was significantly higher than the detection of Step-around obstacles (p < 0.005, Wilcoxon signed-rank).

In terms of avoidance, participants successfully avoided 36±25% of the Duck-under obstacles in the test trials using the EyeCane, significantly more than in the control with the white-cane alone, 6±26% (p < 0.002) (see Fig. 3B). For obstacles that required participants to step aside in order to pass them without collision, participants successfully avoided 17±13% while using the EyeCane, significantly more than in the control with the white-cane alone, 3±12% (p < 0.002) (see Fig. 3B). The avoidance of Duck-under obstacles was higher, but not significantly so, than the avoidance of Step-around obstacles (p = 0.39, Wilcoxon signed-rank).

There was a significant difference between the detection and avoidance of obstacles, both for the Duck-under (p < 0.01, Wilcoxon signed-rank) and Step-around obstacles (p < 0.01, Wilcoxon signed-rank) in the test trials.

There was no significant difference between the success rate of using the EyeCane as a stand-alone device or mounted on a white-cane (all p > 0.48).

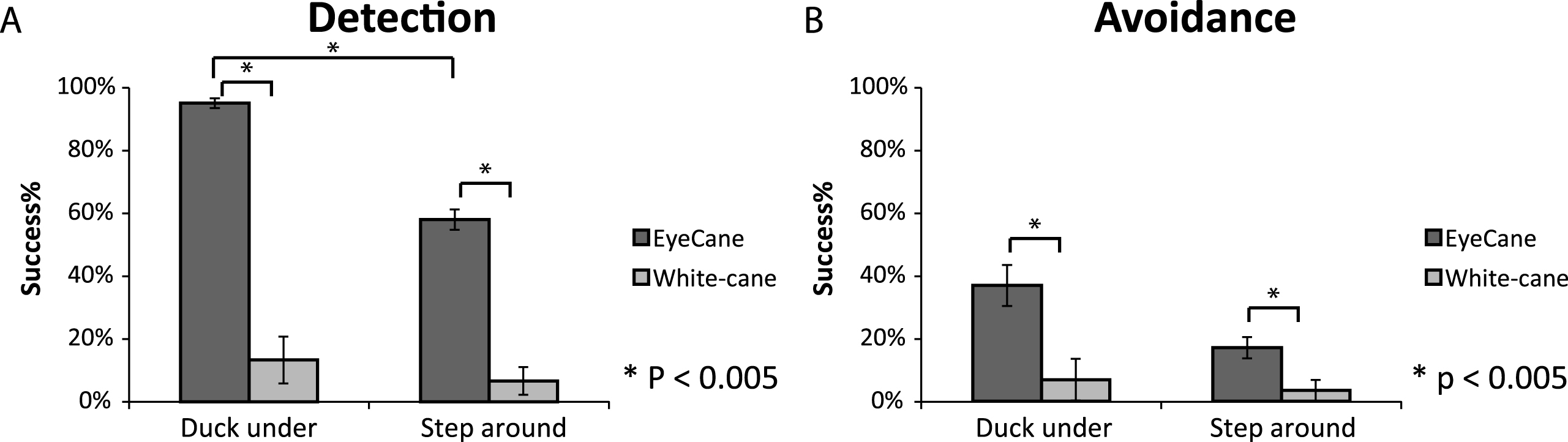

On average, it took participants 100±41 seconds to walk down the corridor while bypassing the obstacles when using the EyeCane device (mounted or stand-alone). When walking down the corridor using the input from the white-cane alone, it took participants 57±23 seconds to complete the task. This difference in walking speed was significant (p < 0.001). When walking with the EyeCane, it took participants 110±58 seconds to walk down the corridor in the first three trials. In the last three trials they walked significantly faster, taking 87±9 seconds to walk down the corridor (p < 0.03, Wilcoxon signed-rank). The walking speed in the last three trials with the EyeCane was still significantly slower than in the control with the white-cane alone (p < 0.009) (see Fig. 4). Due to technical issues, the walking time of one of the participants in one of the trials was not recorded.

4 Discussion

Participants’ success rates in the detection and avoidance of waist-up obstacles using the EyeCane were significantly higher than in the control with the white-cane alone. As expected, the detection rate was significantly higher than the avoidance rate, as avoiding obstacles required participants to use the perceived information to plan and execute the proper motor response to avoid the obstacles after their detection via the EyeCane. These findings demonstrate that the EyeCane can indeed increase upper-body protection over the current common methods.

4.1 Detection vs avoidance

Detection of obstacles can lead to their successful avoidance, enabling users to choose to avoid them by changing their walking path or by seeking help, but is also important in and of itself. It enables users to understand their surroundings, and even when an obstacle cannot be fully avoided and a collision occurs, the users’ preparation for it, both in terms of expectation and of walking speed will somewhat mitigate its effect. The knowledge of the existence of an obstacle, and of an expected collision with it, may reduce both the discomfort and embarrassment of such an incident.

After only ∼5 minutes of training on the task, participants were highly successful in the detection of obstacles (95% for Duck-under obstacles, and 58% for Step-around obstacles). This high success rate for detection joins our previous studies and strengthens the findings regarding the intuitiveness of the EyeCane device (Buchs et al., 2014). The avoidance success rate was significantly lower (36% for Duck-under obstacles, and 17% for Step-around obstacles). This gap between knowing that the obstacle was there and properly avoiding it may be due to the combined difficulty of understanding these new types of obstacles on the fly, integrating them into the user’s sensory-motor body scheme, and properly calibrating the obstacles’ relative position and size.

This new ability likely requires further dedicated training, specifically for avoiding these obstacles, and can potentially increase users’ avoidance of these obstacles and also further improve their detection. Furthermore, our criteria for the determination of successful avoidance of obstacles were harsh, leading to trials coded as unsuccessful avoidance even if participants touched the obstacle gently. In daily use, blind individuals would typically treat such a gentle encounter as successfully avoiding the obstacle. This might also explain the higher performance in the duck-under in which the blind participants did not take any chances vs. the step-around in which they ‘allowed’ themselves such gentle encounters (see next paragraph).

4.2 Duck-under obstacles vs Step-around obstacles

The detection of both Duck-under and Step-around obstacles while using the EyeCane was significantly higher than their detection in the control condition, with the white-cane alone. It should be noted that while users could “miss” Step-around obstacles by walking around them inadvertently, this was not possible for Duck-under obstacles which spanned the corridor. Thus, it is not surprising that the detection of Duck-under obstacles was significantly higher than that of the Step-around obstacles. This also led to the choice of considering successful obstacle avoidance only of obstacles that were detected, thus avoiding accidental avoidance of obstacles.

We see the EyeCane’s use as enriching the users’ understanding of their environment, e.g. enabling knowledge of objects in the pathway, such as Step-around obstacles, even though they could have been bypassed without any awareness of their existence. This can be important, for example, when looking for a landmark or when learning an environment for future visits in which the user might take slightly different paths. One of the EyeCane users, T.M (40 years old, blind from age 28), reported after using the EyeCane in the real world that “At first, I thought the 5 m range would provide me with too much information, but I found that this range provides me with strategic information opposed to the tactical information obtained from the 1.5 m range.” This joins similar results from a previous study (Maidenbaum, Levy-Tzedek, et al., 2014) showing, using a virtual setup of the EyeCane, that using the EyeCane with a 5 meter sensor range facilitates navigation paths similar to those achieved via vision.

4.3 Walking speed – EyeCane vs. white-cane

Participants’ walking speed was significantly slower when walking with the EyeCane in the test trials than when walking with the white-cane in the control trial. This finding goes hand in hand with previous studies, which also found a trend toward decreased walking speed when users adopt a new mobility device (Roentgen, Gelderblom, & de Witte, 2012). More generally, this difference in walking speed is expected, as users of any new device tend to be slower at first while mastering the new device and the information it offers.

The walking speed is expected to improve following more intense usage of, and familiarization with, a new device such as the EyeCane. The beginning of such an improvement was demonstrated in our experiment, in which participants’ walking speed improved significantly between the first three trials and the last three trials while using the EyeCane.

4.4 The EyeCane vs. other new mobility aids

Various other new mobility aids, such as the Sonic PathFinder (La Grow, 1999) or UltraCane (Penrod et al., 2005), use a wide beam. These devices initially enable their users to detect all obstacles with relative ease, but passing them is harder, as the wide beam will catch the obstacle or wall on the sides of a narrow obstacle-free path and might therefore convey this free path as an obstacle. The use of a narrow beam, as done here with the EyeCane, requires the user to actively scan the environment, a skill which needs to be mastered and improves over time (Buchs et al., 2014). However, it enables a more accurate perception of the environment and the finding of open spaces between obstacles, while harnessing the perceptual advantages of active sensing (Hein, Held, & Gower, 1970; Saig, Gordon, Assa, Arieli, & Ahissar, 2012). To the best of our knowledge, these other mobility aids have not been accepted for wide use by blind individuals. Among other reasons, this is due to the long training duration required (Nau et al., 2013; Strumillo, 2003), limited ease of use (Calder, 2010), and their price (Dakopoulos & Bourbakis, 2010; Kim & Cho, 2013). We demonstrated here that the EyeCane can be used successfully even after very brief training (∼5 minutes), thus diminishing the need for intense training before assistive functional use in the real world. Furthermore, the participants, and other blind individuals from various blind organizations who were introduced to the EyeCane, have expressed their enthusiasm for the device’s functionality, size, and comfort of use. Additionally, the EyeCane was designed with the aim of keeping its production price low. These benefits of the EyeCane potentially overcome the main current obstacles holding back the adoption of such devices for real-life use. This, alongside the added protection obtained from using the device, will potentially increase the use of the EyeCane and lead to its adoption as an auxiliary device for blind and visually impaired individuals.

4.5 EyeCane as a primary and secondary mobility aid

The success rates in both detection and avoidance of waist-up obstacles did not vary significantly between using the EyeCane as a stand-alone device and using it mounted on a white-cane. These findings suggest that the EyeCane can be used both as a primary and as a secondary mobility aid, thus providing upper-body protection while enabling each user to choose their personally preferred setup in general, and for specific tasks and situations. For instance, using the EyeCane mounted on the white-cane when walking outside enables its users to detect tactile differences in ground surfaces (Øvstedal, Lindland, & Lid, 2005; A Ståhl, Almen, & Wemme, 2004; Agneta Ståhl, Newman, Dahlin-Ivanoff, Almén, & Iwarsson, 2010) and is reliable (Dakopoulos & Bourbakis, 2010), while providing additional upper-body protection. As H.B stated after participating in the experiment: “when not focused while walking, I am vulnerable to bumps from various waist-up obstacles such as branches. The EyeCane can potentially reduce those incidents”.

On the other hand, using the EyeCane as a stand-alone device has advantages in and of itself, despite not offering ground-level protection. Using the EyeCane as a primary device can facilitate safe maneuvering in an unobtrusive manner in situations where users do not expect to encounter low-level obstacles, while waist-up obstacles are prevalent. For instance, it can be handy in an office environment, where waist-up obstacles such as shelves are common, while ground obstacles are not expected. This advantage was further stressed by T.M, after using the EyeCane for approximately one month in his office: “The EyeCane is great for work; it enables me to move around un-intrusively and without creating noise.” Furthermore, using the EyeCane as a primary device can be useful for blind and visually impaired individuals who do not want to use the common mobility aids, which are noticeable and classify them as blind people (Pavey, Dodgson, Douglas, & Clements, 2009). Use of the EyeCane as a primary device by these individuals, who otherwise would walk without any protection or stay at home, potentially has a large impact on their independence even if the provided information is not complete. It is important to note, though, that some blind individuals find the use of a noticeable device helpful. This novel versatility of use (Roentgen, Gelderblom, Soede, & Witte, 2008) enhances the potential use of the EyeCane by visually impaired individuals for improved safe mobility.

4.6 EyeCane paired with other visual rehabilitation methods

Beyond the use of the EyeCane as a secondary mobility aid, it can be coupled with invasive and non-invasive approaches to visual rehabilitation. For instance, the EyeCane can be used together with any invasive visual restoration treatment such as gene therapy and retinal implants (Ahuja et al., 2011; Chader, Weiland, & Humayun, 2009; Collignon, Champoux, Voss, & Lepore, 2011; Djilas et al., 2011; Humayun, Dorn, Cruz, Dagnelie, & Sahel, 2012; Wang et al., 2012; Zrenner et al., 2011). The EyeCane can provide users with a feeling of stability in their first steps using these interventions while moving, and provide protection in situations in which the information offered by them is not enough in itself. This conjunction potentially provides its users with the qualia of vision (Dowling, 2009) from these approaches without giving up on safety.

This is just as true for other non-invasive devices such as sensory substitution devices (SSDs), which translate information between senses, or for sensory augmentation navigation devices (Nagel, Carl, Kringe, Märtin, & König, 2005). Using the EyeCane’s distance information together with whole scene SSDs (Auvray, Hanneton, & O’Regan, 2007; Meijer, 1992), which specifically can convey visual information via audition or touch (indeed, by some definitions the EyeCane would be classified as a “minimalistic-sensory-substitution-device”) such as the EyeMusic (Abboud, Hanassy, Levy-Tzedek, Maidenbaum, & Amedi, 2014; Levy-Tzedek, Hanassy, Abboud, Maidenbaum, & Amedi, 2012), vOICe (Meijer, 1992) or BrainPort (Bach-y-Rita & W. Kercel, 2003) (see review on SSDs (Proulx et al., 2015)), will potentially enable users to integrate this information and further understand their environment. For example, both recognizing and understanding the distance to an object of interest, or understanding and recognizing obstacles as they are approached (see (Reiner, 2008) for additional potential benefits of such pairing). Furthermore, the EyeCane can potentially reveal points of interest users can zoom-in to with the EyeMusic’s zooming-in mechanism to perceive with higher resolution, thus better understanding their content (Buchs, Maidenbaum, Levy-Tzedek, & Amedi, 2015). This multisensory integration of approaches can be further implemented to a multimodal integrated visual rehabilitation setup (Maidenbaum, Abboud, & Amedi, 2014).

4.7 Future work

Future work will include further development of the EyeCane to include a third sensor, directed to the ground, thus aiming at providing lower-body protection. Following the findings of this study, in which users successfully interpreted the information received from the two modalities to perceive information regarding obstacles waist-high and up, we believe that they will also be successful in interpreting extra information received via a third sensor, thus providing whole-length body protection. This work will also include an adjustment of the output of the EyeCane sensors such that they will convey distance information via haptics alone without audition. This change in output is useful both for the daily use of blind individuals who might prefer to use haptics alone and especially for deafblind individuals (Ask Larsen & Damen, 2014).

Another line of work is to design an optimal dedicated training program for the EyeCane. It is worthwhile to mention that when first introduced, the white cane requires hours of dedicated training with a special mobility trainer. It will be interesting to test whether perceptual learning mechanisms enable EyeCane users to perform even better or even avoid upper body obstacles completely.

Finally, future work should test the use of the EyeCane in the real world over longer and more significant periods. Specifically, this should include collecting users’ testimonies, investigating the EyeCane’s efficiency in detection and avoidance of various real-life obstructions during everyday life, and exploring its acceptance by blind and visually impaired individuals.

Acknowledgments

The authors wish to thank E. Harow, T. Behor, Z. Jaron, U. Shemesh, A. Doron and R. Habusha for their help in running the experiment, and Itay Blumenzweig for his help in building the obstacles.

This work was supported by a European Research Council grant (310809), a James S. McDonnell Foundation scholar award (no. 220020284), haubenstock stiftung, The Edmond and Lily Safra Center for Brain Sciences (ELSC), and a Martier family foundation grant.

Appendices

The supplementary material is available in the electronic version of this article: http://dx.doi.org/10.3233/RNN-160686.

References

1. Abboud S., Hanassy S., Levy-Tzedek S., Maidenbaum S., & Amedi A. (2014). EyeMusic: Introducing a “visual” colorful experience for the blind using auditory sensory substitution. Restorative Neurology and Neuroscience, 32(2), 247–257. doi: 10.3233/RNN-130338

2. Ahuja A.K., Dorn J.D., Caspi A., McMahon M.J., Dagnelie G., Dacruz L., ... & Greenberg R.J. (2011). Blind subjects implanted with the Argus II retinal prosthesis are able to improve performance in a spatial-motor task. The British Journal of Ophthalmology, 95, 539–543. doi: 10.1136/bjo.2010.179622

3. Ask Larsen F., & Damen S. (2014). Definitions of deafblindness and congenital deafblindness. Research in Developmental Disabilities, 35(10), 2568–2576. doi: 10.1016/j.ridd.2014.05.029

4. Auvray M., Hanneton S., & O’Regan J.K. (2007). Learning to perceive with a visuo-auditory substitution system: Localisation and object recognition with “The vOICe.” Perception, 36(3), 416–430. doi: 10.1068/p5631

5. Bach-y-Rita P., & Kercel S.W. (2003). Sensory substitution and the human–machine interface. Trends in Cognitive Sciences, 7(12), 541–546. doi: 10.1016/j.tics.2003.10.013

6. Buchs G., Maidenbaum S., & Amedi A. (2014). Obstacle identification and avoidance using the “EyeCane”: A tactile sensory substitution device for blind individuals. In Auvray M. & Duriez C. (Eds.), Haptics: Neuroscience, Devices, Modeling, and Applications (pp. 96–103). Berlin Heidelberg, Springer.

7. Buchs G., Maidenbaum S., Levy-Tzedek S., & Amedi A. (2015). Integration and binding in rehabilitative sensory substitution: Increasing resolution using a new Zooming-in approach. Restorative Neurology and Neuroscience, 34(1), 1–9. doi: 10.3233/RNN-150592

8. Calder D.J. (2010). Assistive technologies for the blind: A digital ecosystem perspective. In 3rd International Conference on Pervasive Technologies Related to Assistive Environments (PETRA).

9. Chader G.J., Weiland J., & Humayun M.S. (2009). Artificial vision: Needs, functioning, and testing of a retinal electronic prosthesis. Progress in Brain Research, 175, 317–332.

10. Collignon O., Champoux F., Voss P., & Lepore F. (2011). Sensory rehabilitation in the plastic brain. Progress in Brain Research, 191, 211–231. doi: 10.1016/B978-0-444-53752-2.00003-5

11. Dakopoulos D., & Bourbakis N.G. (2010). Wearable obstacle avoidance electronic travel aids for blind: A survey. IEEE Transactions on, 40(1), 25–35. doi: 10.1109/TSMCC.2009.2021255

12. Djilas M., Olès C., Lorach H., Bendali A., Dégardin J., Dubus E., ... & Picaud S. (2011). Three-dimensional electrode arrays for retinal prostheses: Modeling, geometry optimization and experimental validation. Journal of Neural Engineering, 8, 046020. doi: 10.1088/1741-2560/8/4/046020

13. Dowling J. (2009). Current and future prospects for optoelectronic retinal prostheses. Eye (London, England), 23(10), 1999–2005. doi: 10.1038/eye.2008.385

14. Hein A., Held R., & Gower E.C. (1970). Development and segmentation of visually controlled movement by selective exposure during rearing. Journal of Comparative and Physiological Psychology, 73(2), 181–187. doi: 10.1037/h0030247

15. Hersh M., & Johnson M.A. (2008). Assistive technology for visually impaired and blind people (p. 300). Springer Science & Business Media, London.

16. Hoover R.E. (1950). The cane as a travel aid. Blindness, 353–365.

17. Humayun M.S., Dorn J.D., Cruz L., Dagnelie G., & Sahel J. (2012). Interim results from the international trial of Second Sight’s visual prosthesis. Ophthalmology, 119(4), 779–788. doi: 10.1016/j.ophtha.2011.09.028

18. Jacobson W.H., & H., B. R. (2010). Foundations of orientation and mobility (Wiener W.R., Welsh R.L., & Blasch B.B., Eds.) (3rd ed., Vol. 1, pp. 277–296). American Foundation for the Blind.

19. Kim S.Y., & Cho K. (2013). Usability and design guidelines of smart canes for users with visual impairments, 7(1), 99–110.

20. Knol B.W., Roozendaal C., van den Bogaard L., & Bouw J. (1988). The suitability of dogs as guide dogs for the blind: Criteria and testing procedures. The Veterinary Quarterly, 10(3), 198–204. doi: 10.1080/01652176.1988.9694171

21. Küpfer R. (1992). A guide dog in the life of the blind–experiences, insights, considerations. Die Rehabilitation, 31(1), 1–10.

22. La Grow S. (1999). The use of the Sonic Pathfinder as a secondary mobility aid for travel in business environments: A single-subject design. Journal of Rehabilitation Research and Development, 36(4), 333–340.

23. Levy-Tzedek S., Hanassy S., Abboud S., Maidenbaum S., & Amedi A. (2012). Fast, accurate reaching movements with a visual-to-auditory sensory substitution device. Restorative Neurology and Neuroscience, 30(4), 313–323. doi: 10.3233/RNN-2012-110219

24. Maidenbaum S., Abboud S., & Amedi A. (2014). Sensory substitution: Closing the gap between basic research and widespread practical visual rehabilitation. Neuroscience & Biobehavioral Reviews, 41, 3–15.

25. Maidenbaum S., Hanassy S., Abboud S., Buchs G., Chebat D.-R., Levy-Tzedek S., & Amedi A. (2014). The “EyeCane”, a new electronic travel aid for the blind: Technology, behavior & swift learning. Restorative Neurology and Neuroscience, 32(6), 813–824.

26. Maidenbaum S., Levy-Tzedek S., Chebat D.-R., Namer-Furstenberg R., & Amedi A. (2014). The effect of extended sensory range via the EyeCane sensory substitution device on the characteristics of visionless virtual navigation. Multisensory Research, 27(5-6), 379–397. doi: 10.1163/22134808-00002463

27. Maidenbaum S., Levy-Tzedek S., Chebat D.-R., & Amedi A. (2013). Increasing accessibility to the blind of virtual environments, using a virtual mobility aid based on the “EyeCane”: Feasibility study. PLoS ONE, 8(8), e72555. doi: 10.1371/journal.pone.0072555

28. Manduchi R. (2011). Mobility-related accidents experienced by people with visual impairment. Insight: Research & Practice in Visual Impairment & Blindness, 4(2), 1–11.

29. Meijer P.B. (1992). An experimental system for auditory image representations. IEEE Transactions on Bio-Medical Engineering. doi: 10.1109/10.121642

30. Nagel S.K., Carl C., Kringe T., Märtin R., & König P. (2005). Beyond sensory substitution—learning the sixth sense. Journal of Neural Engineering, 2(4), R13.

31. Nasar J.L., & Troyer D. (2013). Pedestrian injuries due to mobile phone use in public places. Accident; Analysis and Prevention, 57, 91–95. doi: 10.1016/j.aa2013.03.021

32. Nau A., Bach M., & Fisher C. (2013). Clinical tests of ultra-low vision used to evaluate rudimentary visual perceptions enabled by the BrainPort vision device. Translational Vision Science & Technology, 2(3), 1. doi: 10.1167/tvst.2.3.1

33. Øvstedal L.R., Lindland T., & Lid I.M. (2005). On our way establishing national guidelines on tactile surface indicators. In International Congress Series (Vol. 1282, pp. 1046–1050). Elsevier.

34. Pavey S., Dodgson A., Douglas G., & Clements B. (2009). Travel, transport, and mobility of people who are blind and partially sighted in the UK. Visual Impairment Centre for Teaching and Research, University of Birmingham, RNIB Report.

35. Penrod W., Corbett M.D., & Blasch B. (2005). A master trainer class for professionals in teaching the UltraCane electronic travel device. Journal of Visual Impairment & Blindness, 99(11), 711–714.

36. Proulx M.J., Gwinnutt J., Dell’Erba S., Levy-Tzedek S., de Sousa A.A., ... (2015). Other ways of seeing: From behavior to neural mechanisms in the online “visual” control of action with sensory substitution. Restorative Neurology and Neuroscience, 34(1), 29–44. doi: 10.3233/RNN-150541

37. Reiner M. (2008). Seeing through touch: The role of haptic information in visualization. In Gilbert J.K., Reiner M., & Nakhleh M. (Eds.), Visualization: Theory and practice in science education (pp. 73–84). Netherlands, Springer.

38. Rodgers M.D., & Emerson R.W. (2005). Materials testing in long cane design: Sensitivity, flexibility, and transmission of vibration. Journal of Visual Impairment & Blindness, 99(11), 696–706.

39. Roentgen U.R., Gelderblom G.J., & de Witte L.P. (2012). User evaluation of two electronic mobility aids for persons who are visually impaired: A quasi-experimental study using a standardized mobility course. Assistive Technology, 24(2), 110–120.

40. Roentgen U.R., Gelderblom G.J., Soede M., & de Witte L.P. (2008). Inventory of electronic mobility aids for persons with visual impairments: A literature review. Journal of Visual Impairment & Blindness, 102(11), 702–724.

41. Saig A., Gordon G., Assa E., Arieli A., & Ahissar E. (2012). Motor-sensory confluence in tactile perception. The Journal of Neuroscience: The Official Journal of the Society for Neuroscience, 32(40), 14022–14032. doi: 10.1523/JNEUROSCI.2432-12.2012

42. Ståhl A., Almén M., & Wemme M. (2004). Orientation using guidance surfaces: Blind tests of tactility in surfaces with different materials and structures. Publikation, (2004:158E).

43. Ståhl A., Newman E., Dahlin-Ivanoff S., Almén M., & Iwarsson S. (2010). Detection of warning surfaces in pedestrian environments: The importance for blind people of kerbs, depth, and structure of tactile surfaces. Disability and Rehabilitation, 32(6), 469–482.

44. Strumillo P. (2003). NavBelt and the GuideCane. Robotics & Automation Magazine, IEEE, 10(1), 9–20. doi: 10.1109/MRA.2003.1191706

45. Wang L., Mathieson K., Kamins T.I., Loudin J.D., Galambos L., Goetz G., & Palanker D.V. (2012). Photovoltaic retinal prosthesis: Implant fabrication and performance. Journal of Neural Engineering, 9, 046014. doi: 10.1088/1741-2560/9/4/046014

46. Zrenner E., Bartz-Schmidt K.U., Benav H., Besch D., Bruckmann A., Gabel V.-P., & Wilke R. (2011). Subretinal electronic chips allow blind patients to read letters and combine them to words. Proceedings. Biological Sciences/The Royal Society, 278(November 2010), 1489–1497. doi: 10.1098/rspb.2010.1747

Figures and Tables

Fig.1

A. An illustration explaining the two-directional EyeCane. In this figure, the information obtained via the sensor pointed up at an angle is transformed into audio signals, and the information acquired from the sensor pointed straight ahead is transformed into haptic cues. This configuration was reversed for some of the participants. B. The EyeCane mounted on a white-cane.

Fig.2

Trial setup; the order was randomized between participants. A: Obstacle organization in the experimental part using the EyeCane as a stand-alone device. B: Obstacle organization in the experimental part using the EyeCane mounted on a white-cane.

Fig.3

A. Detection. B. Avoidance. Error bars represent standard errors.

Fig.4

Trial time: the time it took to walk down the corridor while bypassing the obstacles using the EyeCane (first and last three trials) vs. the white-cane control (error bars represent the standard errors).