Detection of coronavirus disease from X-ray images using deep learning and transfer learning algorithms

Abstract

OBJECTIVE:

This study aims to employ the advantages of computer vision and medical image analysis to develop an automated model that has clinical potential for the early detection of novel coronavirus disease (COVID-19).

METHOD:

This study applied the transfer learning method to develop deep learning models for detecting COVID-19 disease. Three existing state-of-the-art deep learning models, namely Inception-ResNet-V2, InceptionNet-V3 and NASNetLarge, were selected and fine-tuned to automatically detect and diagnose COVID-19 disease using chest X-ray images. A dataset comprising 850 images of confirmed COVID-19 cases, 500 images of community-acquired (non-COVID-19) pneumonia cases and 915 normal chest X-ray images was used in this study.

RESULTS:

Among the three models, InceptionNet-V3 yielded the best performance, with training accuracy levels of 98.63% and 99.02% with and without data augmentation in model training, respectively. All the networks tend to overfit (with high training accuracy) when data augmentation is not used; this is due to the limited amount of image data used for training and validation.

CONCLUSION:

This study demonstrated that deep transfer learning can feasibly detect COVID-19 disease automatically from chest X-rays by training the learning model with chest X-ray images from COVID-19 patients, patients affected by other pneumonia and people with healthy lungs, which may help doctors make their clinical decisions more effectively. The study also gives insight into how transfer learning was used to automatically detect COVID-19 disease. In future studies, as the amount of available data increases, different convolutional neural network models could be designed to achieve the goal more efficiently.

1 Introduction

Coronavirus disease, known as COVID-19, is a rapidly spreading disease that can be treated but can also be fatal, with a mortality rate of 2%. Due to extensive alveolar damage and progressive respiratory failure, this serious illness can lead to death [1]. Early detection and diagnosis of COVID-19 can help societies to promptly quarantine patients, quickly intubate serious cases in specialized hospitals, and monitor the spread of the disease.

Although the diagnostic test has been improved to become a quick process, its cost still represents a serious problem for governments as well as patients, mostly in countries with paid healthcare services. Because X-rays are used to diagnose COVID-19 and to monitor patients' health status, the number of X-ray images has increased. Such medical images can be considered a valuable dataset for researchers to investigate and discover any potential patterns that can help in disease prediction and discovery [2].

Recent developments in deep learning, which combines several machine learning approaches, support the automatic extraction and classification of image features. One of its widely used applications is classifying medical images. Machine learning and deep learning have become some of the most interesting application areas of artificial intelligence for research, analysis and pattern recognition. Recent improvements in these areas, as well as the growth in medical image and radiography datasets, bring new advantages to medical decision-making systems [3].

Different definitions of deep learning have been given in the literature, but all share a common concept. One definition [4] describes it as a subfield of machine learning that is employed to extract and transform features, as well as to perform pattern analysis and classification, by processing non-linear information. Such learning is based on different levels of abstraction in hierarchical features. Each level is defined in terms of the level below it, so the information becomes more complex at higher levels.

A few machine learning studies are available on the effective detection of chest diseases in general, rather than on COVID-19 detection specifically. Yang et al. [5] reported that computed tomography detected 154 cases of lung atelectasis/consolidation in 324 lung regions of 81 patients, with 80% accuracy. Similarly, Gompelmann et al. [6] provided “Chartis®”, a pulmonary assessment system for the effective detection of atelectasis. Bar et al. [7] employed deep learning for chest pathology detection and achieved 87% accuracy in cardiomegaly detection. Pietka et al. [8] proposed a method that can identify view-based radiographic images for the detection of chest-related infections. Boone et al. [9] used projection profile features and applied a neural network approach to classify radiographic images. Arimura et al. [10] employed a template matching technique to identify chest-related diseases. Lehmann et al. [11] used the traditional K-nearest neighbor technique for hernia identification. Meanwhile, Kao et al. [12] used a two-feature methodology that exploits body symmetry and background information for the classification of radiographic images. Similarly, Kao et al. [13] provided a view-invariant methodology for chest infection identification. Luo et al. [14] employed Bayes' decision theory to identify pneumonia. The features they used included the existence, shape, and spatial relation of the medial axes of anatomic structures, as well as the average intensity of the region of interest.

We selected Inception-ResNet-V2, InceptionNet-V3 and NASNetLarge because they have proved to be state-of-the-art models and have previously been used with limited data. In InceptionNet-V3, the auxiliary classifiers do not contribute to the final classification but help improve the overall accuracy of the model. InceptionNet-V3 also contains the features of V1 and V2 and helps when working with scaled images [15]. Inception-ResNet-V2 was used by Ferreira et al. for the classification of breast cancer histology images and achieved 90% accuracy; they also showed better performance with the use of data augmentation [17]. Kornblith et al. also showed that pre-trained ImageNet models perform better [18]. Hence, we use Inception-ResNet-V2, InceptionNet-V3 and NASNetLarge models pre-trained on ImageNet and apply data augmentation to get better results.

This work aims to establish the following points:

• Chest X-ray images can be analyzed to detect different stages of COVID-19;

• Feature extraction and selection are completely automated, without manual intervention;

• A state-of-the-art solution for chest X-ray image and pneumonia analysis can be developed by exploring the latest deep learning and computer vision methods and verifying the performance on a specially collected dataset;

• InceptionNet-V3 performs best in terms of training and validation accuracies compared with the other two models.

The results obtained are encouraging and show the effectiveness and accuracy of deep learning, or in our case, transfer learning with convolutional neural networks, in automatically distinguishing COVID-19 from normal and pneumonia-infected X-ray radiographs using a small dataset. Despite the limited data availability, COVID-19 research is still ongoing, with the possibility of discovering biomarkers in X-ray images that could be used for detection and diagnosis.

The rest of the paper is organized as follows: the proposed technique is explained in detail in Section 2, followed by the experimental setup in Section 3, the results in Section 4 and the discussion in Section 5. Section 6 concludes the paper and states possible future directions.

2 Methodology

This research is aimed at evaluating the effectiveness and accuracy of different convolutional neural network models in the automatic diagnosis of COVID-19 from X-rays, as compared with diagnosis performed by experts in the medical community. To create these models, a collection of X-ray radiographs was processed and used to train and validate the convolutional neural networks: 850 X-ray scans of confirmed COVID-19 cases and 915 normal X-rays showing no disease (1765 images together), plus 500 images of pneumonia. Because this dataset is small, transfer learning is the preferred strategy to train the deep convolutional neural networks; other convolutional neural network learning strategies require large-scale datasets to perform accurate feature extraction and classification. With transfer learning, the retention of knowledge extracted from one task is the key to performing an alternative task: it focuses on storing knowledge gained while solving one problem and applying it to a different but related problem. Finally, data augmentation has been applied because training on little data tends to over-fit. Data augmentation is a technique for increasing the amount of data by transforming existing images, e.g. by stretching and rotation.
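To make the rotation form of data augmentation concrete, the following minimal sketch rotates a tiny grayscale image, stored as a nested list, about its centre using nearest-neighbour sampling. The helper names `rotate_image` and `augment`, the fill value and the 3×3 toy image are illustrative assumptions; this is not the pipeline used in this study, which would normally rely on an image-processing library.

```python
import math
import random


def rotate_image(image, angle_deg, fill=0):
    """Rotate a 2-D list of pixels about its centre (nearest-neighbour).

    Hypothetical helper for illustration only.
    """
    height, width = len(image), len(image[0])
    cy, cx = (height - 1) / 2.0, (width - 1) / 2.0
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    rotated = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Inverse mapping: find the source pixel that lands on (x, y).
            dx, dy = x - cx, y - cy
            sx = round(cos_t * dx + sin_t * dy + cx)
            sy = round(-sin_t * dx + cos_t * dy + cy)
            if 0 <= sx < width and 0 <= sy < height:
                rotated[y][x] = image[sy][sx]
    return rotated


def augment(image, max_angle_deg, copies, seed=0):
    """Create `copies` rotated variants with angles in [-max, +max] degrees."""
    rng = random.Random(seed)
    return [
        rotate_image(image, rng.uniform(-max_angle_deg, max_angle_deg))
        for _ in range(copies)
    ]


toy = [[1, 2, 3],
       [4, 5, 6],
       [7, 8, 9]]
variants = augment(toy, max_angle_deg=15, copies=4)
```

Each variant keeps the original label, so the training set grows without any new acquisitions; pixels that rotate in from outside the frame take the fill value.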

2.1 Transfer learning with CNNs

In most medical research works and studies, the availability of data is a recurring problem. Deep learning, however, requires a large amount of data for training; otherwise the model will over-fit. Over-fitting occurs when a model captures the noise in the data, which happens when the model fits a small dataset too closely. For this reason, transfer learning is used to train the models, since only a small amount of data is available. Transfer learning makes it possible for information gained by a model pre-trained on a large dataset to be transferred to another model trained on a smaller dataset.

In the next phase, the convolutional neural network is used to process new sets of images of a different nature and extract features from them; this relies on the previous training, because transfer learning enables us to transfer information. Pre-trained convolutional neural networks are used in two ways. First, they are used for feature extraction: after the data is scanned and analyzed, knowledge of the important features is kept and used by another model that is designed for classification. This method is widely used because it is much cheaper to set up than building a very deep network from scratch, and because it retains the useful feature extractors trained in the initial stage. The second strategy is a more sophisticated procedure in which specific modifications are made to the pre-trained models to achieve optimal results; these modifications may include architecture adjustment and parameter tuning. This method retains only specific knowledge from the previous task, so new trainable parameters are added to the network, and these new parameters require training on large amounts of data to be useful.
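The difference between the two strategies can be sketched as simple bookkeeping over which layers are allowed to learn. The example below is a framework-free illustration under stated assumptions: the `Layer` record, the layer names and the parameter counts are all made up, and the second strategy is represented only by its parameter-tuning part (unfreezing the last few pre-trained layers).

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Layer:
    name: str
    n_params: int
    pretrained: bool = True   # weights come from the large source dataset
    trainable: bool = True


def as_feature_extractor(layers):
    """Strategy 1: freeze every pre-trained layer; only the new head learns."""
    return [replace(layer, trainable=not layer.pretrained) for layer in layers]


def fine_tune(layers, unfreeze_last):
    """Strategy 2 (simplified): also unfreeze the last pre-trained layers."""
    pretrained_idx = [i for i, layer in enumerate(layers) if layer.pretrained]
    start = max(0, len(pretrained_idx) - unfreeze_last)
    unfrozen = set(pretrained_idx[start:])
    return [
        replace(layer, trainable=(not layer.pretrained) or i in unfrozen)
        for i, layer in enumerate(layers)
    ]


def trainable_params(layers):
    return sum(layer.n_params for layer in layers if layer.trainable)


# Toy network: parameter counts are hypothetical.
model = [
    Layer("conv_block_1", 1_000),
    Layer("conv_block_2", 5_000),
    Layer("conv_block_3", 20_000),
    Layer("new_head", 300, pretrained=False),
]

print(trainable_params(as_feature_extractor(model)))      # head only
print(trainable_params(fine_tune(model, unfreeze_last=1)))  # head + last block
```

The fewer parameters left trainable, the less data is needed to fit them, which is why the first strategy suits small datasets.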

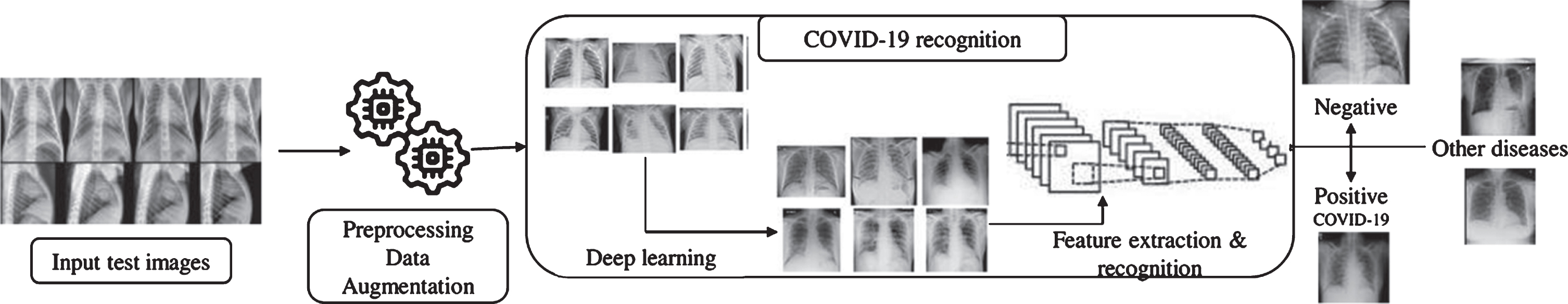

This research is aimed at detecting COVID-19 automatically from X-ray images. To achieve this, three deep convolutional neural networks were used: the InceptionNet-V3, Inception-ResNet-V2 and NASNetLarge models. Also, to overcome the challenge posed by the limited amount of data available for the research, transfer learning from models pre-trained on the ImageNet dataset was used. Figure 1 shows pictorially the pipeline from taking in data to finally predicting whether or not an X-ray is COVID-19 positive.

Fig. 1

Architecture diagram for the proposed virus recognition phase. Different deep convolutional neural network architectures are implemented to detect COVID-19.

2.2 State-of-the-art CNNs for transfer learning

Although the pandemic is still active and no treatment has been discovered so far, the detection of COVID-19 from medical images using deep convolutional neural networks (CNNs) has very recently attracted researchers' interest in the literature. A study by [18] takes advantage of CNNs for the automatic diagnosis of COVID-19 from chest X-ray images. Because of the small size of the COVID-19 sample, transfer learning was used for the deep CNNs. Despite the limited dataset size, the results showed effective automatic detection of COVID-19 related diseases. The tool created, which applies a transfer learning approach to a set of medical images, demonstrated diagnosis results similar to those of human specialists. Furthermore, the neural networks were able to diagnose heart diseases in children using chest X-ray images. Such an application of this tool can eventually speed up diagnosis and improve early treatment, which ultimately enhances healthcare services.

Using deep learning approaches to extract and transform features has proved its ability in COVID-19 diagnosis [19]. Early detection of COVID-19 is a high priority in order to control the spread by applying the appropriate quarantine. However, some healthcare systems suffer from limited availability and quality of COVID-19 testing procedures, especially in the affected areas. Therefore, using alternative diagnostic approaches becomes necessary to control the spread of the disease. Deep learning methods have been implemented [19] to extract features from COVID-19 CT images and improve disease diagnostics before testing for pathogens, which helps in disease control through early detection.

In another recent study [20], a deep learning CNN design was introduced to detect COVID-19 in chest radiography images, such as X-rays. The proposed design, COVID-Net, is used to predict COVID-19 cases, which can improve clinical diagnosis and patient screening. The open-source COVIDx dataset of the study benefits the development of accurate deep learning solutions for COVID-19 detection and, consequently, prompt treatment.

It should be noted that COVID-19 is a new disease and not much research has been done on it. Our aim is to build a model to facilitate the automatic detection of COVID-19 by using chest X-ray images to predict positive cases. In light of this, key contributions are expected with regard to image structure-based feature extraction and image structure analysis.

3 Experiment of three models

For the purpose of this research, InceptionNet-V3, Inception-ResNet-V2 and NASNetLarge models are designed and tasked with classifying X-ray images as either COVID-19 positive or negative. Transfer learning is used due to the scarcity of data, and each convolutional neural network has an input shape of 512×512×3. In the experiments, all three models are trained both with and without data augmentation to observe the effects on each of the models. For data augmentation, we used only the rotation parameter, which generates data by rotating the images within a range of 15°. The data was split into 80% for training and 20% for testing.
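As an illustration of the 80%/20% split, the sketch below partitions image indices class by class so that each class keeps the same proportion in both subsets. The per-class (stratified) splitting, the fixed seed and the function name `stratified_split` are assumptions made for this example; the text states only the overall proportions, and the class sizes are those reported for the dataset.

```python
import random


def stratified_split(labels, train_fraction=0.8, seed=42):
    """Return (train_indices, test_indices), splitting each class separately."""
    rng = random.Random(seed)
    by_class = {}
    for index, label in enumerate(labels):
        by_class.setdefault(label, []).append(index)
    train, test = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)  # random assignment within the class
        cut = round(len(indices) * train_fraction)
        train.extend(indices[:cut])
        test.extend(indices[cut:])
    return train, test


# Class sizes as reported for the dataset in this paper.
labels = ["covid"] * 850 + ["normal"] * 915 + ["pneumonia"] * 500
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))
```

Splitting before augmentation keeps rotated copies of a test image out of the training set, which would otherwise inflate the test accuracy.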

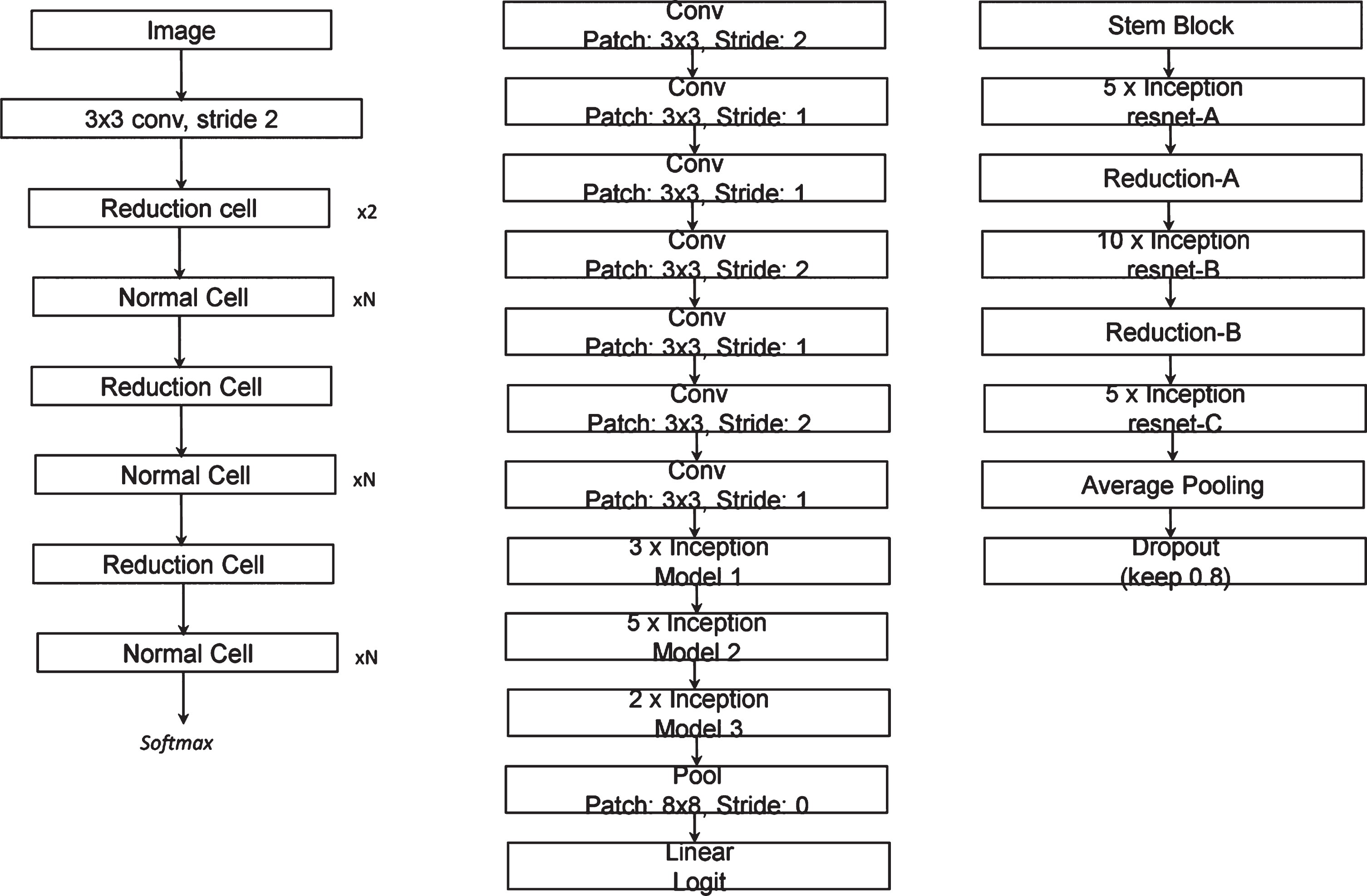

The models used for this study all have an initial learning rate of 1e-3 and a batch size of 16. The activation functions used are “Softmax” and “ReLU”, applied according to the architecture of each model, which can be studied in the original papers [21–23]. Each model was trained for 10 epochs. The number of epochs can be seen in the results and analysis section. The details of each model are depicted in Fig. 2, with some details as follows:
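The stated settings and the two activation functions can be written down compactly. The following is a small, framework-free sketch: the `TrainConfig` container and the helper `steps_per_epoch` are hypothetical names introduced here, and the training-set size in the usage line is an arbitrary example, not a figure from the experiments.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainConfig:
    learning_rate: float = 1e-3        # initial learning rate from the text
    batch_size: int = 16
    epochs: int = 10
    input_shape: tuple = (512, 512, 3)


def relu(x):
    """Rectified linear unit: passes positive values, zeroes the rest."""
    return max(0.0, x)


def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    shift = max(logits)  # subtracting the maximum avoids overflow in exp
    exps = [math.exp(value - shift) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def steps_per_epoch(n_train_images, batch_size):
    """Number of weight updates needed to see every training image once."""
    return math.ceil(n_train_images / batch_size)


config = TrainConfig()
probabilities = softmax([2.0, 0.0])  # two-class output, e.g. positive/negative
updates = steps_per_epoch(1000, config.batch_size)  # 1000 is a made-up size
```

ReLU is typically used inside the hidden layers, while Softmax sits on the final layer so that the output can be read as class probabilities.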

Fig. 2

Architecture of NASNetLarge (left), InceptionNet-V3 (center) and Inception-ResNet-V2 (right).

3.1 NASNetLarge

Neural Architecture Search (NAS) is employed when designing NASNetLarge; inception cells are also used to construct the layers of the model, just as in the case of InceptionNet-V3. The two types of cells used for designing NASNet are the normal cell and the reduction cell, and they are both presented pictorially below.

3.2 InceptionNet-V3

The InceptionNet-V3 model has 48 deep layers and is pre-trained on the ImageNet dataset; the head of this model is replaced with a modified head that is used for two-class classification. Then, to reduce the number of trainable parameters, we set the convolutional layers to ‘non-trainable’. By doing so, the number of trainable parameters is reduced from 22,982,626 to 1,179,842. The architecture of InceptionNet-V3 is purposely designed to have a deep structure even though it has significantly fewer parameters than other neural networks. For example, VGG16 has about 138 million parameters, yet it has much less depth than InceptionNet-V3, and it is this depth that sharpens the accuracy of a model by allowing it to capture finer details.
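The reported parameter counts can be checked with simple arithmetic. The paper does not describe the layers of the replacement head, so the configuration below is purely an assumption: a pooled feature map flattened to 3 × 3 × 2048 = 18,432 values, followed by a 64-unit dense layer and a 2-unit output layer. It is shown only because it happens to reproduce the reported trainable count; other heads could do so as well.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully connected layer."""
    return n_inputs * n_units + n_units


REPORTED_TOTAL = 22_982_626      # from the text, before freezing
REPORTED_TRAINABLE = 1_179_842   # from the text, after freezing

# ASSUMPTION (not stated in the paper): flattened 3 x 3 x 2048 features,
# then Dense(64) and a 2-unit classification layer.
flattened_features = 3 * 3 * 2048
head_params = dense_params(flattened_features, 64) + dense_params(64, 2)

# Parameters held fixed once the convolutional layers are non-trainable.
frozen_params = REPORTED_TOTAL - REPORTED_TRAINABLE

print(head_params)    # 1179842
print(frozen_params)  # 21802784
```

Under this assumption, freezing leaves roughly 5% of the parameters to be learned from the small X-ray dataset.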

3.3 Inception-ResNet-V2

Inception-ResNet-V2 is an advanced model compared with InceptionNet-V3 because it has 162 deep network layers, but for our study, we modified the architecture of the model in the same way as for InceptionNet-V3, by replacing the head with the required dense and classification layers. As the name suggests, Inception-ResNet-V2 combines the properties of both Inception and ResNet. A known issue with the residual variants is that they become unstable, with the last layer producing only zeros, whenever a large number of filters is used. This problem persists even when the learning rate is changed or batch normalization is applied to the layer; the best way to overcome it is to scale down the residuals before adding them to the previous layer's activation.
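Residual scaling can be illustrated in a few lines: the output of the residual branch is multiplied by a small constant before it is added back to the block input. In the sketch below, the default factor of 0.2 and the stand-in branch `large_branch` are assumptions for illustration; the original Inception-ResNet paper [22] reports using factors of roughly 0.1 to 0.3.

```python
def scaled_residual(x, branch, scale=0.2):
    """Return x + scale * branch(x), element-wise over a list of activations."""
    residual = branch(x)
    return [xi + scale * ri for xi, ri in zip(x, residual)]


def large_branch(x):
    # Hypothetical stand-in for an Inception branch with large outputs.
    return [10.0 * xi for xi in x]


def stack(x, n_blocks, scale):
    """Apply the same residual block n_blocks times."""
    for _ in range(n_blocks):
        x = scaled_residual(x, large_branch, scale)
    return x


activations = [1.0, -2.0]
unscaled = stack(activations, n_blocks=5, scale=1.0)
scaled = stack(activations, n_blocks=5, scale=0.1)
```

With the toy branch above, five unscaled blocks multiply the activations by 11 each time, while a factor of 0.1 limits the growth to a factor of 2 per block, which is the stabilizing effect the scaling is meant to provide.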

4 Results

Table 1 compares the accuracies of the different convolutional neural network architectures used in our experiments; InceptionNet-V3 and Inception-ResNet-V2 perform best in terms of training and test accuracies. NASNetLarge behaves differently from the other models: its training accuracy is higher with augmentation than without, yet its test accuracy is also higher when augmentation is applied. For all three architectures used in this research, the networks tend to over-fit when data augmentation is not used, as can be seen from the high training accuracy; this is due to the small amount of data used for training, and it ultimately causes poorer performance on validation sets. Without augmentation, test accuracy is still quite high thanks to the use of pre-trained models, but not higher than for models that use augmented data.

Table 1

A comparison of training and test accuracies of InceptionNet-V3, Inception-ResNet-V2 and NASNetLarge

| Model | InceptionNet-V3 | InceptionNet-V3 | Inception-ResNet-V2 | Inception-ResNet-V2 | NASNetLarge | NASNetLarge |
| --- | --- | --- | --- | --- | --- | --- |
| Data Augmentation | Yes | No | Yes | No | Yes | No |
| Training Accuracy | 98.63% | 99.02% | 98.64% | 99.02% | 99.54% | 98.28% |
| Test Accuracy | 97.87% | 94.12% | 97.87% | 94.12% | 96.24% | 94.87% |

Table 1 also shows a large difference between the training accuracy and the test accuracy when data augmentation is not applied, which indicates over-fitting. This happens as a result of the limited amount of data available for training the models. It could be solved by using more data to train the models, so that the networks generalize and counter over-fitting. In terms of over-fitting, InceptionNet-V3 performs best compared with the other models used in the research.
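The size of the over-fitting effect can be read directly from Table 1 by subtracting the test accuracy from the training accuracy for each configuration. The short sketch below does this with the values from the table; the dictionary layout and the function name `generalization_gaps` are illustrative choices.

```python
# (model, augmentation used) -> (training accuracy %, test accuracy %),
# copied from Table 1.
TABLE_1 = {
    ("InceptionNet-V3", True): (98.63, 97.87),
    ("InceptionNet-V3", False): (99.02, 94.12),
    ("Inception-ResNet-V2", True): (98.64, 97.87),
    ("Inception-ResNet-V2", False): (99.02, 94.12),
    ("NASNetLarge", True): (99.54, 96.24),
    ("NASNetLarge", False): (98.28, 94.87),
}


def generalization_gaps(table):
    """Training minus test accuracy, in percentage points."""
    return {key: round(train - test, 2) for key, (train, test) in table.items()}


gaps = generalization_gaps(TABLE_1)
smallest = min(gaps, key=gaps.get)

for (model, augmented), gap in gaps.items():
    label = "with" if augmented else "without"
    print(f"{model} {label} augmentation: {gap:.2f} points")
```

The gap is 0.76 points for InceptionNet-V3 and 0.77 points for Inception-ResNet-V2 with augmentation, against 4.90 points for both without it; NASNetLarge keeps a gap above 3 points in either case.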

From our previous discussion, deeper networks are expected to yield better results, but Table 1 shows that InceptionNet-V3, which is not as deep as Inception-ResNet-V2 and NASNetLarge, performed better than them. The main reason why deeper networks yield better accuracy is that, as more and more data are fed to the network, the deeper layers do more of the feature extraction, which is more effective than that of shallow networks. However, in our case the dataset is relatively small, so these deeper layers receive little to no information and are virtually useless. Furthermore, since shallower networks are better suited to learning from small datasets, they perform better than the deeper networks. This means that as long as COVID-19 data is still limited, the best model to use is InceptionNet-V3, but as the dataset gets bigger, Inception-ResNet-V2 and NASNetLarge will do a better job of classification.

5 Discussion

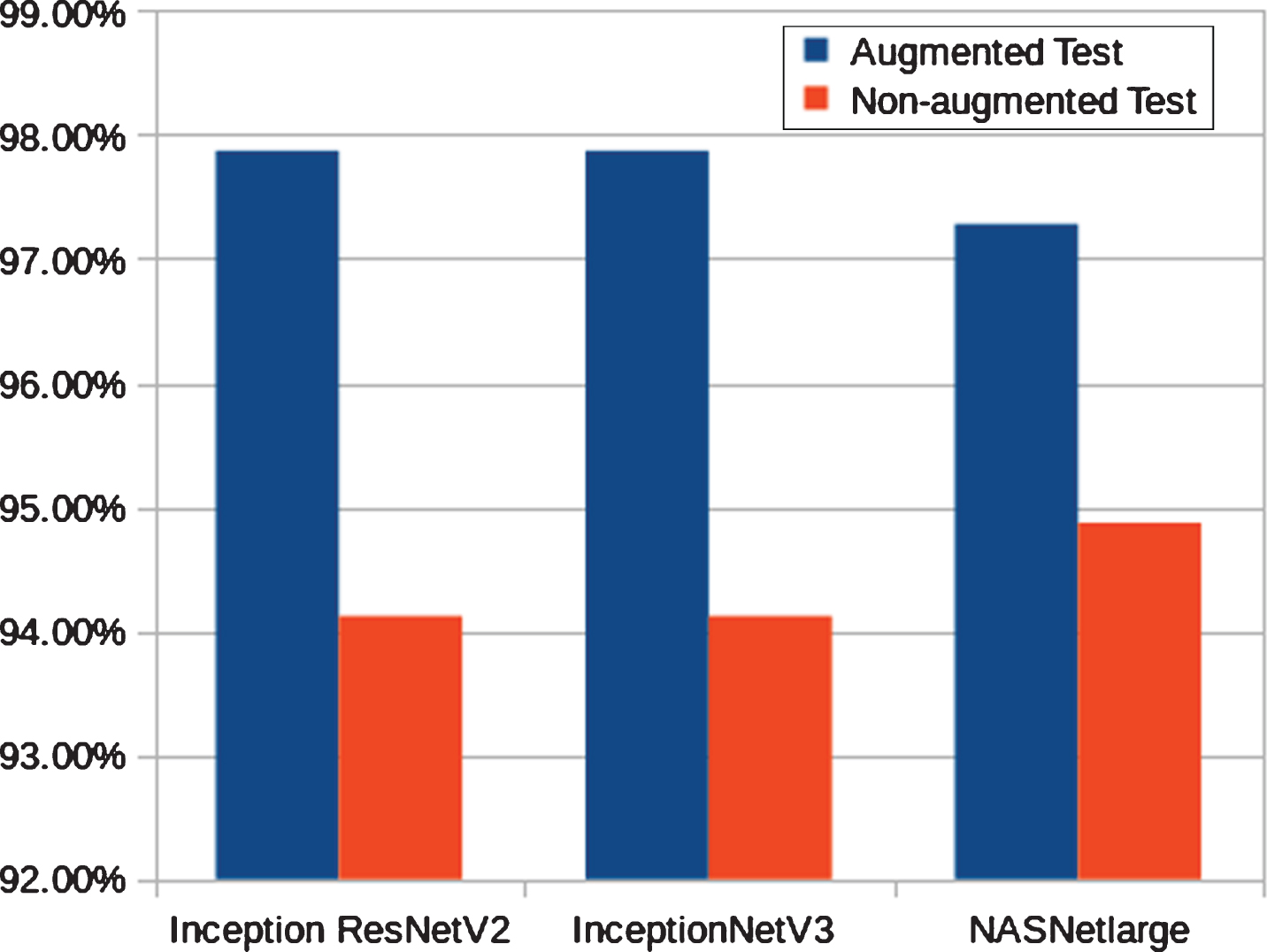

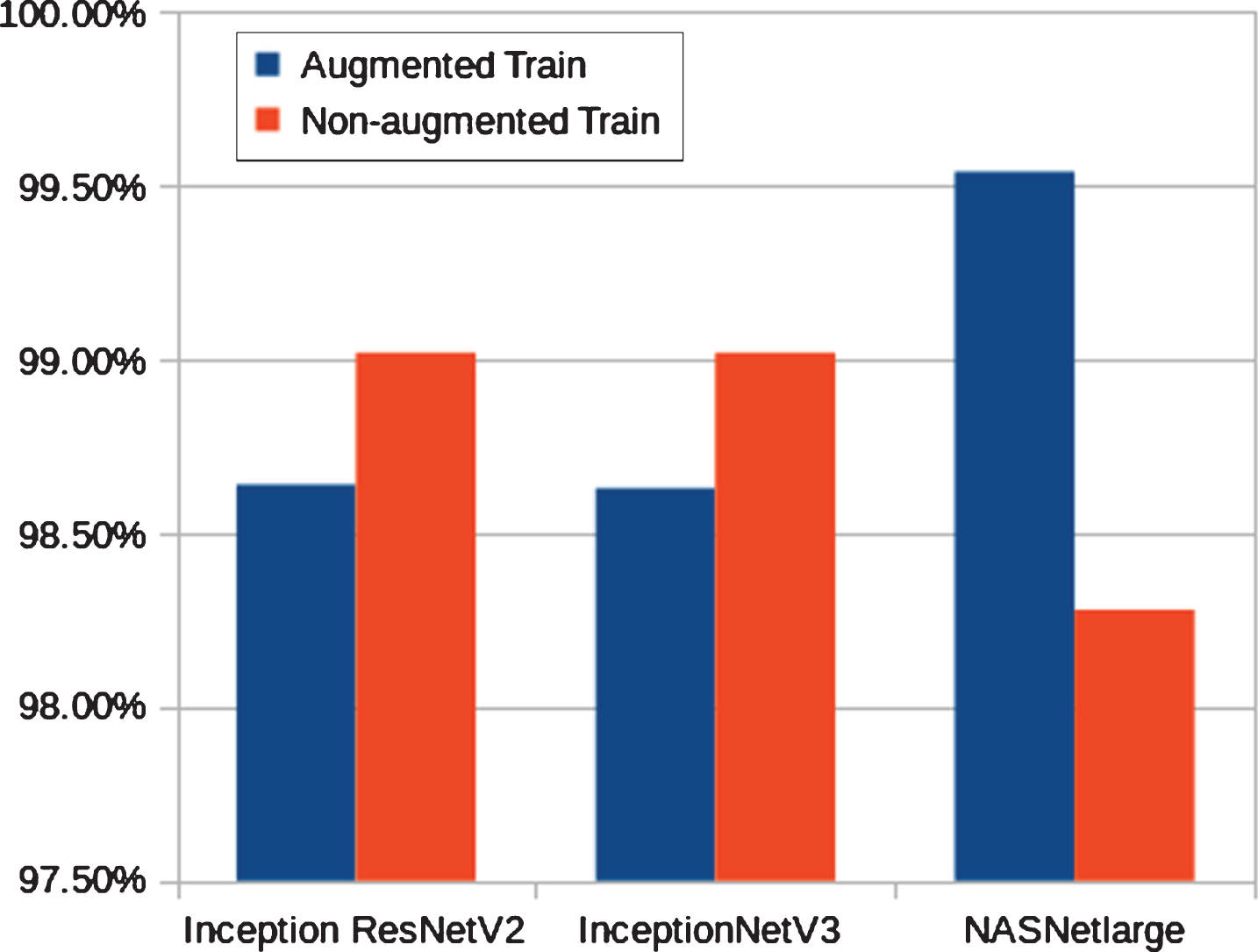

Early prediction of COVID-19 in patients has been performed in our experiments using chest X-ray images. Three deep learning models, namely InceptionNet-V3, Inception-ResNet-V2 and NASNetLarge, have been trained and validated to classify COVID-19 and normal test results accurately. The NASNetLarge and Inception-ResNet-V2 models have similar performance but are not as effective as the InceptionNet-V3 model. Figs. 3 and 4 show the accuracy of the three convolutional neural network architectures used in this study. As presented in Figs. 3 and 4, the models tend to over-fit when data augmentation is not performed. This over-fitting is a result of the limited data available for the research. By performing data augmentation, we introduce a sense of generalization into the models and, as a result, they yield better and more effective results that are free of over-fitting. The training stage has been carried out for different numbers of epochs to avoid over-fitting for all pre-trained models. It can be seen from Fig. 3 that the highest training accuracy is obtained with the InceptionNet-V3 model when data augmentation is not performed. Although the training accuracy of NASNetLarge is better than that of all the other architectures used in this experiment, NASNetLarge tends to over-fit even when data augmentation is used. Because of the scarcity of data, the CNN architectures tend to over-fit the training data and thus perform poorly on validation sets.

Fig. 3

Training accuracy of InceptionNet-V3, Inception-ResNet-V2 and NASNetLarge on the COVID-19 dataset with and without data augmentation.

Fig. 4

Test accuracy of InceptionNet-V3, Inception-ResNet-V2 and NASNetLarge on the COVID-19 dataset with and without data augmentation.

Moreover, it is evident from the results that for each architecture, when data augmentation is not applied, there is a large difference in accuracy between model training and validation. This large difference indicates that the model is overfitting. Our analysis suggests that this overfitting is caused by the scarcity of data. Therefore, to avoid it, more data can be used to train the networks, allowing them to generalize and counter overfitting. In terms of overfitting, InceptionNet-V3 (with data augmentation) performs best compared with the other models used in the experiments.

As discussed, the depth of the network improves the classification accuracy. However, Table 1 shows that Inception-ResNet-V2 and NASNetLarge, which are significantly deeper than InceptionNet-V3, perform slightly worse than the latter network. This is because the features of the small dataset can be easily learned by the shallower network. Thus, in a deeper network, the later layers are not able to compute any crucial features that could help improve the accuracy. In other words, with a small dataset it does not matter whether we use very deep networks or not, because the later layers in these very deep networks do virtually nothing. Therefore, our results show that InceptionNet-V3 is an optimal choice for this classification task. If more data is made available, there is a higher possibility that the deeper networks (i.e., Inception-ResNet-V2 and NASNetLarge) will perform better than the shallower one (i.e., InceptionNet-V3).

6 Conclusions and future work

The early and effective prediction of COVID-19 in patients is vital to preventing the spread of the disease. In this study, we proposed deep transfer learning models to detect COVID-19 automatically from chest X-rays by training them with X-ray images obtained from both COVID-19 patients and people with normal chest X-rays. Performance results show that the InceptionNet-V3 model yielded the highest test accuracy, 97.87% with data augmentation and 94.12% without, among the three models designed. This study is aimed at helping doctors make decisions in their clinical practice thanks to its high performance and effectiveness; it also gives insight into how transfer learning was used to automatically detect COVID-19. In subsequent studies, as the amount of available data increases, different convolutional neural network models could be designed to achieve the same goal.

The future of artificial intelligence with respect to COVID-19 is unlimited: as more and more data become available, training and testing of different deep learning models will be possible. Multi-class classification techniques with large datasets are required for effective, efficient and accurate detection of COVID-19.

Conflicts of interest

The authors declare that they have no conflicts of interest.

References

[1] Xu Z., Shi L., Wang Y., et al., Pathological findings of COVID-19 associated with acute respiratory distress syndrome, The Lancet Respiratory Medicine 8(4) (2020), 420–422.

[2] Lei P., Huang Z., Liu G., et al., Clinical and computed tomographic (CT) images characteristics in the patients with COVID-19 infection: What should radiologists need to know?, Journal of X-ray Science and Technology 28(3) (2020), 369–381.

[3] Greenspan H., Ginneken B.V., Summers R.M., Guest editorial deep learning in medical imaging: Overview and future promise of an exciting new technique, IEEE Transactions on Medical Imaging 35(5) (2016), 1153–1159.

[4] Deng L., Yu D., Deep learning: methods and applications, Foundations and Trends in Signal Processing 7(3–4) (2014), 197–387.

[5] Yang J.X., Zhang M., Liu Z.H., et al., Detection of lung atelectasis/consolidation by ultrasound in multiple trauma patients with mechanical ventilation, Critical Ultrasound Journal 1(1) (2009), 13–16.

[6] Gompelmann D., Eberhardt R., Slebos D.J., et al., Comparison between Chartis pulmonary assessment system detection of collateral ventilation vs. corelab CT fissure analysis in predicting atelectasis in emphysema patients treated with endobronchial valves (abstract), ERS Annual Congress, Amsterdam (2011), P3536.

[7] Bar Y., Diamant I., Wolf L., et al., Chest pathology detection using deep learning with non-medical training, 2015 IEEE 12th International Symposium on Biomedical Imaging (ISBI) (2015).

[8] Pietka E., Huang H.K., Orientation correction for chest images, Journal of Digital Imaging 5(3) (1992), 185–189.

[9] Boone J.M., Seshagiri S., Steiner R.M., Recognition of chest radiograph orientation for picture archiving and communications systems display using neural networks, Journal of Digital Imaging 5(3) (1992), 190–193.

[10] Arimura H., Katsuragawa S., Li Q., et al., Development of a computerized method for identifying the posteroanterior and lateral views of chest radiographs by use of a template matching technique, Medical Physics 29(7) (2002), 1556–1561.

[11] Lehmann T.M., Guld O., Keysers D., et al., Determining the view of chest radiographs, Journal of Digital Imaging 16(3) (2003), 280–291.

[12] Kao E.F., Lee C., Jaw T.S., et al., Projection profile analysis for identifying different views of chest radiographs, Academic Radiology 13(4) (2006), 518–525.

[13] Kao E.F., Lin W.C., Hsu J.S., et al., A computerized method for automated identification of erect posteroanterior and supine anteroposterior chest radiographs, Physics in Medicine and Biology 56(24) (2011), 7737–7753.

[14] Luo H., Hao W., Foos D., Cornelius C., Automatic image hanging protocol for chest radiographs in PACS, IEEE Transactions on Information Technology in Biomedicine 10(2) (2006), 302–311.

[15] Albahli S., Efficient GAN-based Chest Radiographs (CXR) augmentation to diagnose coronavirus disease pneumonia, International Journal of Medical Sciences 17(10) (2020), 1439–1448.

[16] Szegedy C., Vanhoucke V., Ioffe S., et al., Rethinking the inception architecture for computer vision, Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (2016), 2818–2826.

[17] Ferreira C.A., Melo T., Sousa P., et al., Classification of breast cancer histology images through transfer learning using a pre-trained Inception Resnet V2, Proceedings of the International Conference on Image Analysis and Recognition (2018), 763–770.

[18] Kornblith S., Shlens J., Le Q.V., Do better ImageNet models transfer better?, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (2019), 2661–2671.

[19] Kermany D.S., Goldbaum M., Cai W., et al., Identifying medical diagnoses and treatable diseases by image-based deep learning, Cell 172(5) (2018), 1122–1131.

[20] Wang S., Kang B., Ma J., et al., A deep learning algorithm using CT images to screen for Corona Virus Disease (COVID-19), MedRxiv (2020). DOI:10.1101/2020.02.14.20023028

[21] Zoph B., Vasudevan V., Shlens J., Le Q.V., Learning transferable architectures for scalable image recognition, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (2018), 8697–8710.

[22] Szegedy C., Ioffe S., Vanhoucke V., Inception-v4, Inception-ResNet and the impact of residual connections on learning, Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence (2017), 4278–4284.

[23] Nguyen L.D., Lin D., Lin Z., Cao J., Deep CNNs for microscopic image classification by exploiting transfer learning and feature concatenation, 2018 IEEE International Symposium on Circuits and Systems (ISCAS) (2018), 1–5.