The Internet-of-Things: Reflections on the past, present and future from a user-centered and smart environment perspective

Abstract

This paper introduces the Internet-of-Things (IoT) and describes its evolution from a concept proposed by Kevin Ashton in 1999, through its public emergence in 2005 in a United Nations ITU report entitled “The Internet of Things”, to the present day, where IoT devices are available as off-the-shelf products from major manufacturers. Using a systematic study of public literature, the paper presents a five-phase categorisation of the development of the Internet-of-Things from its beginnings to the present day. Four mini case studies are included to illustrate some of the issues involved. Finally, the paper discusses some of the big issues facing future developers and marketers of Internet-of-Things based products, ranging from artificial intelligence (AI) through to customer privacy and acceptance, and finishes with an optimistic assessment of the future of the Internet-of-Things.

1.Introduction

We are approaching 20 years since Kevin Ashton coined the term Internet-of-Things (IoT) as part of a 1999 presentation to Procter & Gamble about incorporating RFID tags within their supply chain to “empower computers with their own means of gathering information, so they can see, hear and smell the world for themselves, in all its random glory”. It built on earlier ideas, most notably Mark Weiser’s vision for ubiquitous computing described in his 1991 article for Scientific American (The Computer for the 21st Century), in which he described a future world composed of numerous interconnected computers that were designed to “weave themselves into the fabric of everyday life until they are indistinguishable from it” [98]. Elsewhere, in the late 1990s, researchers working in Artificial Intelligence (AI) had envisioned the concept of ‘embedded-agents’ whereby AI processes could be made computationally small enough to be integrated into the type of ubiquitous computing and Internet-of-Things devices that Weiser and Ashton had described, opening the possibility for so-called Intelligent Environments or Ambient Intelligence. In these environments the intelligence was distributed to devices, making them smart, robust and scalable. The most noteworthy movements were Intelligent Environments, which arose in Europe driven by researchers such as Juan Carlos Augusto of Middlesex University (one of the co-founders of the JAISE journal) and Victor Callaghan of the University of Essex (one of the co-founders of the International Intelligent Environments Conference series) [21], and Ambient Intelligence, which was originally proposed by the late Eli Zelkha of Palo Alto Ventures in the USA [39].
All these researchers were visionaries, able to imagine a future that had yet to exist, but which they described in such credible terms as to motivate a generation of researchers to work towards bringing these visions to reality, adding numerous innovations of their own as they completed their work. Industry was quick to recognise the potential for these technologies to radically disrupt the market by offering customers services and products that had hitherto not existed, and the consequent challenge of how to shape the enormous possibilities into viable products which customers would want and buy. Many innovation strategies were deployed to explore this space, with one of the most notable, Science Fiction Prototyping, arising within Intel and being championed by their then futurist, Brian David Johnson. Science Fiction Prototyping functioned by enabling company personnel and customers to work together on future product ideas via writing and modifying narrative fiction which incorporated customers’ needs and IoT capabilities into imaginative but credible scenarios [20]. As we approach the 20th anniversary of Ashton’s Internet-of-Things vision it seems timely to create a chapter that reflects on the various threads of progress during the past 20 years and ponders on some of the issues that might affect future development. Thus, in this chapter we review the history of the IoT, discuss the main technical frameworks and application areas, discuss topical issues (e.g. AI and privacy) and delve into the process of market acceptance of new technology before concluding with a speculative discussion on the future of IoT.

2.Evolution of the Internet-of-Things

Advances in semiconductor and miniaturisation technologies have led to a remarkable reduction in the size of computers, bringing pervasiveness into mainstream computing. Today, an ever increasing number of everyday objects are endowed with sensing technologies and are seamlessly connected to other devices, via the Internet, to send data, respond to inputs, or act autonomously, delivering diverse services in real time. This interconnection of everyday objects, or smart “things”, is potentially amongst the most significant disruptive technologies of the 21st century. According to a report by Cambridge Consultants (Fig. 1), there were approximately 13.3 million IoT connections in the UK in 2016, and this number is expected to grow at a compound annual growth rate (CAGR) of approximately 36% to 155.7 million connections at the end of 2024. In addition, according to market research reports the IoT market is experiencing significant growth, with ABI Research [3,57] predicting a CAGR of 44.9% in shipments for digital household appliances between 2011 and 2020 (Table 1). Furthermore, a BCC Research report¹¹ projected that the IoT hardware segment is expected to grow from $6.5 billion in 2017 to $17.3 billion in 2022 at a CAGR of 21.7% for this period, while the service segment is projected to grow from $6.5 billion to $17.3 billion at a CAGR of 21.7% for the same period. These projections show the potential impact of the Internet-of-Things on the market sector as a whole.
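The growth figures quoted above can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The following is a minimal sketch rather than anything taken from the cited reports; the function names `cagr` and `project` are illustrative, and only the start values, end values and years come from the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.36 means 36%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` once per year for `years` years."""
    return start_value * (1 + rate) ** years


# UK IoT connections quoted in the text: 13.3 million (2016) to 155.7 million (2024).
uk_rate = cagr(13.3, 155.7, 2024 - 2016)

# IoT hardware segment quoted in the text: $6.5 billion (2017) to $17.3 billion (2022).
hw_rate = cagr(6.5, 17.3, 2022 - 2017)

print(f"UK connections CAGR: {uk_rate:.1%}")
print(f"Hardware segment CAGR: {hw_rate:.1%}")
print(f"13.3 million compounded at 36% for 8 years: {project(13.3, 0.36, 8):.1f} million")
```

Both computed rates land within a fraction of a percentage point of the quoted values, which is the level of agreement one would expect given that the published figures are rounded.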

Before proceeding it would be helpful to clarify more exactly what is meant by the phrase “The Internet-of-Things”. For example, depending on the context of usage, it might be seen as being about (physical) hardware and objects, or the Internet, or networks, or the actual communication. Alternatively, it may imply that it is about sensing, processing, or the capability of making decisions. At a different level, it might be seen as concerning data, or information. From a different perspective, one might even describe it as a new processing model that leads to improving the efficiency of certain business operations or enhancing the quality of people’s lives. There have been many interpretations of the concept, yet there is still no universal definition that all experts agree on. Finally, how does the Internet-of-Things differ from similar movements such as pervasive computing, ambient intelligence, ubiquitous computing and intelligent environments? The definition of the Internet-of-Things is therefore discussed in the following section.

2.1.The Internet-of-Things as a multi-faceted movement

The Internet-of-Things, the Embedded-Internet, Ubiquitous Computing, Pervasive Computing, and Ambient Intelligence are terms which, in the eyes of many ordinary people, seem to describe the same thing. However, in academic circles the nuances in the perceived meanings can be important and are sometimes argued over. From the authors’ review of the literature, these sometimes subtle differences can be better understood by tracing the roots of each community. For example, the Pervasive Computing community have historically had a strong interest in communications and networking issues, while the Ubiquitous Computing community have had a greater interest in HCI issues. Likewise the Ambient Intelligence and Intelligent Environments communities have, as their names imply, a keen interest in the use of AI. The Internet-of-Things grew out of sensor networks and monitoring, which developed quickly into a broader interest in networked devices and infrastructures. Networking and infrastructure aspects of IoT are covered in depth in another chapter of this edited book by Gomeza et al. [51]. Of course, all communities cover all aspects of such systems, so it’s hardly surprising that, to ordinary people, these terms seem to be synonymous with each other (and increasingly so, as the market introduces products that combine all these ideas). Given that the terminology of the Internet-of-Things arose from industry, and industry is bringing these technologies to the market, it is equally unsurprising that the Internet-of-Things is now the dominant term in the public arena. That being the case, we now trace the history of the term, the Internet-of-Things.

The starting point for the term “Internet-of-Things” finding popular recognition in the public domain can be traced back to the 2005 World Summit in Tunis, where the International Telecommunication Union (ITU), a body of the United Nations (UN), published a report entitled “The Internet of Things” [1]. It would seem that this was a pivotal moment in both publicising the term and creating an awareness of the enormous business opportunities arising from the connection of embedded computers, along with sensors and/or actuators, to the Internet. These embedded computers (things, in IoT terminology) can be made to function autonomously, with or without human intervention, communicating with other devices or people via the Internet. With the addition of AI the ‘things’ can become smart, using pre-programmed rules or those learnt dynamically through machine-learning to make decisions. The sensors embedded into IoT devices can produce big-data for higher level analytical engines. The 2005 ITU report [1] described this concept in great detail, together with the potential benefit that the technology could bring to industry and society. The report highlighted three important initial functions, tracking, sensing, and decision-making, as the fundamental parts of future Internet-of-Things eco-systems. Of course, this report was written over 10 years ago and since then technology and ideas have advanced, creating bigger visions and possibilities, some of which we will touch on later in this paper.
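To make the three functions named above (tracking, sensing and decision-making) more concrete, the following is a minimal sketch of a ‘thing’ that carries an identity, produces readings and applies pre-programmed rules. It is an illustration under stated assumptions, not a design from the ITU report: the class names `Thing` and `Reading`, the rule `too_warm`, the identifier `fridge-001` and the 8.0 °C threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Reading:
    thing_id: str   # tracking: which object produced the reading
    quantity: str   # sensing: what was measured
    value: float    # sensing: the measured value


# A rule inspects a reading and returns an action name, or None if it does not apply.
Rule = Callable[[Reading], Optional[str]]


@dataclass
class Thing:
    thing_id: str
    rules: List[Rule] = field(default_factory=list)

    def sense(self, quantity: str, value: float) -> Reading:
        """Wrap a raw sensor value with the identity of this thing."""
        return Reading(self.thing_id, quantity, value)

    def decide(self, reading: Reading) -> List[str]:
        """Decision-making: collect the actions of every rule that fires."""
        actions = []
        for rule in self.rules:
            action = rule(reading)
            if action is not None:
                actions.append(action)
        return actions


def too_warm(reading: Reading) -> Optional[str]:
    # Hypothetical pre-programmed rule; the threshold is chosen for illustration only.
    if reading.quantity == "temperature_c" and reading.value > 8.0:
        return "raise_alarm"
    return None


fridge = Thing("fridge-001", rules=[too_warm])
actions = fridge.decide(fridge.sense("temperature_c", 9.5))
print(actions)
```

A learnt rule would fit the same `Rule` signature, so in this sketch a machine-learning model could replace `too_warm` without changing the `Thing` class.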

Table 2

The IoT definitions

| Year | Body | Definition |

| 2005 | ITU [1] | “A global infrastructure for the information society, enabling advanced services by interconnecting (physical and virtual) things based on existing and evolving interoperable information and communication technologies.” “ubiquitous network” and “Available anywhere, anytime, by anything and anyone.” |

| 2008 | ETP EPoSS – The European Technology Platform on Smart Systems Integration [58] | “the network formed by things/objects having identities, virtual personalities operating in smart spaces using intelligent interfaces to connect and communicate with the users, social and environmental contexts”. “Things having identities and virtual personalities operating in smart spaces using intelligent interfaces to connect and communicate within social, environmental and user contexts.” “The semantic origin of the expression is composed by two words and concepts: ‘Internet’ and ‘Thing,’ where ‘Internet’ can be defined as ‘the worldwide network of interconnected computer networks, based on a standard communication protocol, the Internet suite (TCP/IP),’ while ‘Thing’ is ‘an object not precisely identifiable.’ Therefore, semantically, ‘Internet of Things’ means ‘a worldwide network of interconnected objects uniquely addressable, based on standard communication protocols.’” |

| | Berkeley University | “... integrations of computation, networking and physical processes. Embedded computers and networks monitor and control the physical processes, with feedback loops where physical processes affect computations and vice versa.” |

| | The Software Fabric for the Internet of Things [79] | “The notion of an ‘Internet of Things’ refers to the possibility of endowing everyday objects with the ability to identify themselves, communicate with other objects, and possibly compute.” |

| 2009 | CASAGRAS [58] | “A global network infrastructure, linking physical and virtual objects through the exploitation of data capture and communication capabilities. This infrastructure includes existing and evolving Internet and network developments. It will offer specific object identification, sensor and connection capability as the basis for the development of independent cooperative services and applications. These will be characterized by a high degree of autonomous data capture, event transfer, network connectivity and interoperability.” |

| | SAP [58] | “A world where physical objects are seamlessly integrated into the information network, and where the physical objects can become active participants in business processes. Services are available to interact with these ‘smart objects’ over the Internet, query and change their state and any information associated with them, taking into account security and privacy issues.” |

| | Kevin Ashton, from Procter & Gamble, then at MIT [47] | “Nearly all of the data available on the Internet were first captured and created by human beings – by typing, pressing a record button, taking a digital picture or scanning a bar code. The problem is, people have limited time, attention and accuracy – all of which means they are not very good at capturing data about things in the real world. If we had computers that knew everything there was to know about things – using data they gathered without any help from us – we would be able to track and count everything, and greatly reduce waste, loss and cost. We would know when things needed replacing, repairing or recalling, and whether they were fresh or past their best. The Internet of Things has the potential to change the world, just as the Internet did. Maybe even more so.” |

| 2010 | IETF – The Internet Engineering Task Force [58] | “The basic idea is that IoT will connect objects around us (electronic, electrical, non electrical) to provide seamless communication and contextual services provided by them. Development of RFID tags, sensors, actuators, mobile phones make it possible to materialize IoT which interact and co-operate each other to make the service better and accessible anytime, from anywhere.” |

| | CERP-IoT – The Cluster of European Research Projects on the Internet of Things [58] | “Internet of Things (IoT) is an integrated part of Future Internet and could be defined as a dynamic global network infrastructure with self configuring capabilities based on standard and interoperable communication protocols where physical and virtual “things” have identities, physical attributes, and virtual personalities and use intelligent interfaces, and are seamlessly integrated into the information network. In the IoT, ‘things’ are expected to become active participants in business, information and social processes where they are enabled to interact and communicate among themselves and with the environment by exchanging data and information ‘sensed’ about the environment, while reacting autonomously to the ‘real/physical world’ events and influencing it by running processes that trigger actions and create services with or without direct human intervention. Interfaces in the form of services facilitate interactions with these ‘smart things’ over the Internet, query and change their state and any information associated with them, taking into account security and privacy issues.” |

| | From the Internet of Computers to the Internet of Things [48] | “The Internet of Things represents a vision in which the Internet extends into the real world embracing everyday objects. Physical items are no longer disconnected from the virtual world, but can be controlled remotely and can act as physical access points to Internet services. An Internet of Things makes computing truly ubiquitous.” |

| | Future Internet (Society for Brain Integrity, Sweden, 2010) [58] | “It means that any physical thing can become a computer that is connected to the Internet and to other things. IoT is formed by numerous different connections between PCs, human to human, human to thing and between things. This creates a self configuring network that is much more complex and dynamic than the conventional Internet. Data about things is collected and processed with very small computers (mostly RFID tags) that are connected to more powerful computers through networks. Sensor technologies are used to detect changes in the physical environment of things, which further benefits data collection.” |

| | The Internet of Things: Networked objects and smart devices [58] | “The Internet of Things comprises a digital overlay of information over the physical world. Objects and locations become part of the Internet of Things in two ways. Information may become associated with a specific location using GPS coordinates or a street address. Alternatively, embedding sensors and transmitters into objects enables them to be addressed by Internet protocols, and to sense and react to their environments, as well as communicate with users or with other objects.” |

| | The Internet of Things [35] | “The physical world itself is becoming a type of information system. In what’s called the Internet of Things, sensors and actuators embedded in physical objects – from roadways to pacemakers – are linked through wired and wireless networks, often using the same Internet Protocol (IP) that connects the Internet. These networks churn out huge volumes of data that flow to computers for analysis. When objects can both sense the environment and communicate, they become tools for understanding complexity and responding to it swiftly. What’s revolutionary in all this is that these physical information systems are now beginning to be deployed, and some of them even work largely without human intervention.” |

| | The Internet of Things: 20th Tyrrhenian Workshop on Digital Communications [90] | “The expression ‘Internet of Things’ is wider than a single concept or technology. It is rather a new paradigm that involves a wide set of technologies, applications and visions. Also, complete agreement on the definition is missing as it changes with relation to the point of view. It can focus on the virtual identity of the smart objects and their capabilities to interact intelligently with other objects, humans and environments or on the seamless integration between different kinds of objects and networks toward a service oriented architecture of the future Internet.” |

| | Internet of Things: Legal Perspectives [97] | “A world where physical objects are seamlessly integrated into the information network, and where the physical objects can become active participants in business processes. Services are available to interact with these ‘smart objects’ over the Internet, query their state and any information associated with them, taking into account security and privacy issues.” |

| 2011 | IoT-A (“Internet of Things Architecture”) [58] | “It can be seen as an umbrella term for interconnected technologies, devices, objects and services.” |

| | UK FISG (“Future Internet Report”) [46] | “An evolving convergent Internet of things and services that is available anywhere, anytime as part of an all pervasive, omnipresent, socio–economic fabric, made up of converged services, shared data and an advanced wireless and fixed infrastructure linking people and machines to provide advanced services to business and citizens.” |

| | IoT-SRA [49] | “Things having identities and virtual personalities operating in smart spaces using intelligent interfaces to connect and communicate within social, environmental and user contexts.” “A world-wide network of interconnected objects uniquely addressable based on standard communication protocols.” |

| | The Internet of Things: In a Connected World of Smart Objects (Accenture & Bankinter Foundation of Innovation) [60] | “The Internet of Things (IoT) consists of things that are connected to the Internet, anytime, anywhere. In its most technical sense, it consists of integrating sensors and devices into everyday objects that are connected to the Internet over fixed and wireless networks. The fact that the Internet is present at the same time everywhere makes mass adoption of this technology more feasible. Given their size and cost, the sensors can easily be integrated into homes, workplaces and public places. In this way, any object can be connected and can ‘manifest itself’ over the Internet. Furthermore, in the IoT, any object can be a data source. This is beginning to transform the way we do business, the running of the public sector and the day to day life of millions of people.” |

| | China’s Initiative for the Internet of Things and Opportunities for Japanese Business [59] | “a system automatically recognizes information about a thing such as ‘unique attributes,’ state at that ‘time’ and ‘location’ by using sensors and cameras connected to the Internet, and creates value added information by comprehensively analysing the state and location of two or more things. At the same time, the system uses such information to automatically control equipment and devices.” |

| | Architecting the Internet of Things [97] | “The future Internet of Things links uniquely identifiable things to their virtual representations in the Internet containing or linking to additional information on their identity, status, location or any other business, social or privately relevant information at a financial or non financial pay off that exceeds the efforts of information provisioning and offers information access to non predefined participants. The provided accurate and appropriate information may be accessed in the right quantity and condition, at the right time and place at the right price. The Internet of Things is not synonymous with ubiquitous/pervasive computing, the Internet Protocol (IP), communication technology, embedded devices, its applications, the Internet of People or the Intranet/Extranet of Things, yet it combines aspects and technologies of all of these approaches.” |

| | 6LoWPAN: The Wireless Embedded Internet [84] | Encompasses all the embedded devices and networks that are natively IP-enabled and Internet-connected, along with the Internet services monitoring and controlling those devices. |

| | Internet of Things: Global Technological and Societal Trends from Smart Environments and Spaces to Green ICT [73] | “The Internet of Things could be conceptually defined as a dynamic global network infrastructure with self configuring capabilities based on standard and interoperable communication protocols where physical and virtual ‘things’ have identities, physical attributes and virtual personalities, use intelligent interfaces and are seamlessly integrated into the information network.” |

| 2012 | Arduino, Sensors, and the Cloud | “A global network infrastructure, linking physical and virtual objects using cloud computing, data capture and network communications. It allows devices to communicate with each other, access information on the Internet, store and retrieve data, and interact with users, creating smart, pervasive and always connected environments.” |

| 2013 | iCore [11] | “Our world is getting more and more connected. In the near future not only people will be connected through the Internet, but Internet connectivity will also be brought to billions of tangible objects, creating the Internet of Things (IoT).” |

| | DLM [77] | “The Internet of Things is a web in which gadgets, machines, everyday products, devices and inanimate objects share information about themselves in new ways, in real time. Using a range of technologies such as embedded radio frequency identification (RFID) chips linked with IP addresses (internet signatures), near field communications, electronic product codes and GPS systems just about anything can be connected to a network. The connected objects can then be tracked and output information can be recorded, analysed and shared in countless ways via the Internet.” |

| | CISCOᵃ | “the Internet of everything,” – “Bringing together people, process, data and things to make networked connections more relevant and valuable than ever before, turning information into actions that create new capabilities, richer experiences and unprecedented economic opportunity for businesses, individuals and countries.” |

| 2014 | IEEE, “Internet of Things”ᵇ | A network of items – each embedded with sensors which are connected to the Internet. |

| | NIST – The National Institute of Standards and Technology [70] | “Cyber physical systems (CPS) – sometimes referred to as the Internet of Things (IoT) – involves connecting smart devices and systems in diverse sectors like transportation, energy, manufacturing and healthcare in fundamentally new ways. Smart Cities/Communities are increasingly adopting CPS/IoT technologies to enhance the efficiency and sustainability of their operation and improve the quality of life. (NIST, “Global City Teams,” 2014)” |

| | OASIS (OASIS, “Open Protocols”) [58] | “System where the Internet is connected to the physical world via ubiquitous sensors.” |

| | IERC – IoT European Research Clusterᶜ | “A dynamic global network infrastructure with self configuring capabilities based on standard and interoperable communication protocols where physical and virtual ‘things’ have identities, physical attributes and virtual personalities and use intelligent interfaces, and are seamlessly integrated into the information network”. |

| | HPᵈ | “The Internet of Things refers to the unique identification and ‘Internetisation’ of everyday objects. This allows for human interaction and control of these ‘things’ from anywhere in the world, as well as device to device interaction without the need for human involvement.” |

| 2017 | BCC Research LLCᵉ | Internet of Things (IoT) is defined as a system of interconnected devices, machines, digital devices, objects, animals and/or humans, each provided with unique identifiers and with the ability to transfer data over a network without requiring human-to-human or human-to-computer interaction. |

| | IBMᶠ | “The Internet of Things refers to the growing range of connected devices that send data across the Internet. A “thing” is any object with embedded electronics that can transfer data over a network – without any human interaction.” |

| | ARMᵍ | “The Internet of Things (IoT) brings compute power to everyday objects and physical systems within homes, commercial buildings, and critical infrastructures. In doing so, it allows people and systems to gather unprecedented quantities of data, produce powerful insights, and make life safer, more efficient, and more connected than ever before.” |

| | INTELʰ | “The Internet of Things (IoT) is a robust network of devices, all embedded with electronics, software, and sensors that enable them to exchange and analyze data. The IoT has been transforming the way we live for nearly two decades, paving the way for responsive solutions, innovative products, efficient manufacturing, and ultimately, amazing new ways to do business.” |

a CISCO, “Internet of Everything,” http://www.cisco.com/web/about/ac79/innov/IoE.html.

b IEEE, The Institute, “Special Report: The Internet of Things.” http://theinstitute.ieee.org/static/special-report-the-internet-of-things.

c European Research Cluster on Internet of Things (IERC), “Internet of Things,” http://www.internet-of-things-research.eu/about_iot.htm.

d Miessler, Daniel, “HP Security and the Internet of Things,” 2014, http://h30499.www3.hp.com/t5/Fortify Application Security/HPISecurity and The Internet of Things/baIp/6450208.U9_M6dQsL2s.

e BCC Research report: https://www.bccresearch.com/market-research/information-technology/the-internet-of-things-IoT-in-energy-and-utility-applications-report-ift142a.html.

g ARM IOT: https://www.arm.com/markets/iot.

2.2.Internet-of-Things phases of development

Having introduced the Internet-of-Things, we will now investigate how its historical development might be characterised into phases, each with their own characteristics. Our analysis is based on a study of over forty definitions and narratives from literature published during the period 2005–2017 (a 12-year period). To complete this task we analysed the data using common keywords (Table 2) based on the nature, characteristics, functionalities, and capabilities of the Internet-of-Things. From our analysis we deduced that its development can be characterised into five distinct phases. The first, before 2005, was when the Internet-of-Things was in its infancy and work was largely exploratory and ad-hoc in nature; the remaining four, all from 2005 onwards, each span roughly three years and are described in the following sections, where they are numbered one to four.
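The kind of keyword analysis described above can be sketched as a simple count of key phrases per period. This is a minimal illustration and not the authors' actual procedure: the helper names `phase_for` and `count_keywords`, the keyword list and the three shortened sample definitions (paraphrased from Table 2) are assumptions made for the example; only the period boundaries are taken from the section headings that follow.

```python
from collections import Counter
from typing import Dict, List, Optional, Tuple

# Period boundaries as given in the headings of Sections 2.2.1 to 2.2.4.
PHASES: Dict[str, Tuple[int, int]] = {
    "2005-2008": (2005, 2008),
    "2009-2011": (2009, 2011),
    "2012-2014": (2012, 2014),
    "2015-2017": (2015, 2017),
}


def phase_for(year: int) -> Optional[str]:
    """Return the label of the period containing `year`, or None if outside all periods."""
    for label, (first, last) in PHASES.items():
        if first <= year <= last:
            return label
    return None


def count_keywords(definitions: List[Tuple[int, str]],
                   keywords: List[str]) -> Dict[str, Counter]:
    """Count case-insensitive substring occurrences of each keyword, grouped by period."""
    counts: Dict[str, Counter] = {label: Counter() for label in PHASES}
    for year, text in definitions:
        label = phase_for(year)
        if label is None:
            continue  # definitions outside 2005-2017 are ignored
        lowered = text.lower()
        for keyword in keywords:
            counts[label][keyword] += lowered.count(keyword.lower())
    return counts


# Shortened sample definitions, used here only to exercise the functions.
sample_definitions = [
    (2005, "A global infrastructure for the information society, enabling "
           "advanced services by interconnecting (physical and virtual) things"),
    (2014, "A network of items, each embedded with sensors which are "
           "connected to the Internet."),
    (2017, "A thing is any object with embedded electronics that can "
           "transfer data over a network"),
]

counts = count_keywords(sample_definitions, ["network", "things", "data", "sensors"])
for label, counter in counts.items():
    print(label, dict(counter))
```

Substring matching is a deliberate simplification: “network” also matches “networks” and “networked”, which may or may not be desirable depending on how the key phrases are defined.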

2.2.1.Phase one 2005–2008 (the devices & connectivity period)

The most frequent key phrases emerging from the study of this period were: “communication”, “network”, “interconnect”, “physical and virtual objects”, “things”, “identities”, and “computation”. Given that the pivotal ITU report [1] was published at the beginning of this phase, the IoT concept was viewed as being relatively new during this period. According to the ‘Internet World Stats’ organisation, between 15% and 24% of the world’s population were, at that time, connected to the Internet, with their main activities being sending and receiving emails or using various repository services to discover information. Cloud Computing was in its infancy during this period: the term did not yet exist, and such centralisation of computing and information was regarded as an application of client-server architectures. This was the time when the “Disappearing Computer” paradigm first emerged, most notably as part of an EU research funding programme [25]. Communities such as Ubiquitous and Pervasive Computing and Intelligent Environments/Ambient Intelligence were formed. The IoT concept in this period was essentially interpreted as “transforming everyday objects into embedded-computers”, to “provide the object with an identity” and “connect it to the Internet” (i.e. remote access and control). A technology which typified this period was Dallas Semiconductor’s Tini Board, which was marketed as the world’s first commercial ‘embedded-Internet’ device [29]. In the same period, the concept of ‘embedded-agents’ emerged, which allowed decentralised ambient intelligence to be realised [18].

2.2.2.Phase two 2009–2011 (the machine-to-machine period)

Between 2009 and 2011, industries and academics started to realise the potential of the Internet-of-Things, with a surge in attempts to develop and apply the concept. In our study of this period, several new key phrases emerged: “infrastructure”, “information”, “data”, “services”, “captures”, “sense”, “physical and virtual”, “communication”, “interoperability”, “seamless integration”, “seamless communication”, “processes”, “autonomously”, and “controlled remotely”. This period saw technological platforms gradually improve to support the core functionality of the Internet-of-Things. Networks and standards were created to support the various modes of communication involved [43,44,51]. One of these modes of communication, Machine-to-Machine (M2M), was adopted as the basis for the Industrial Internet-of-Things, which was of such importance that it has been used to categorise this phase. During this period there was a shift of focus away from the hardware and connectivity issues of phase one, to software, data, information and services. An increased emphasis on processing capability and remote control was also observed. The concept of the Internet-of-Things began to take off more rapidly towards the end of this period.

2.2.3.Phase three 2012–2014 (the HCI period)

Between 2012 and 2014, technology continued to advance, further accelerating the commercial adoption of the IoT concept. Examples of such technological developments included (a) object identification (e.g. Electronic Product Codes (EPCs) [16], and IPv6 [68,91]), and (b) network connectivity (e.g. wireless communication, low energy consumption and cloud computing [78,85]). Significant developments occurred in the area of HCI. For example, End-User Programming paradigms began to attract attention as a way to address the need to empower users in this digital revolution [30,67,87]. In addition to earlier key watchwords, the most frequent new phrases uncovered in this phase were: “human”, “interaction”, “smart”, “bringing people, process, data and things together”, “connected”, and “improve quality”. From these it is deduced that the Internet-of-Things concept had evolved from information and services (of phase two) to include users. The vision of interconnecting what had hitherto been separate silo systems was also beginning to emerge, as was user empowerment through paradigms such as Pervasive-interactive-Programming (PiP), which enabled end users not only to assemble hardware, but also to program the collaborative software functionality of such systems, a key aspect of making them personalised and smarter [30].

2.2.4.Phase four 2015–2017 (the smart period)

Between 2015 and 2017, global technology players (such as Cisco, ARM, Intel, Amazon) began to position themselves and launched products aimed at generating revenue from the Internet-of-Things. The resulting increase in the number of Internet-connected devices, together with the high value of the data generated from their usage, gave rise to new business opportunities that exploited this new source of big-data. Thus, big data, analytics and intelligence were the common themes in literature covering this period [87]. Some new common key phrases encountered were: “commercial”, “products”, “insights”, “analyse”, “big-data”, “smart”, “safer”, and “efficient”. It was also observed that the IoT concept shifted from the information, services and users (in phase three) to massive systems integration. This period involved utilising Artificial Intelligence to process information, make decisions, and create an impact on people’s lives (i.e. data-analytics and Machine Learning), plus the emergence of the System of Systems concept (i.e. a way that collections of Internet-of-Things components can pool their capabilities to deliver higher-level functionalities).

2.3.Internet-of-Things characteristics and classifications

Just as the scope of the Internet-of-Things has changed down the years, so too have the features that characterise it. In its early days the Internet-of-Things was characterised, in general terms, by what was referred to as the five “C”s:

Convergence – any ‘thing’, any device

Computation – anytime, always on

Collection – any data, any service

Communication – any path, any network

Connectivity – any place, any where

Later, these general characteristics evolved to include details to reflect the logical functions of IoT, in particular [1]:

Entity-based concept (physical and virtual objects)

Distributed execution (design and processing)

Interactions (machine and users)

Distributed data (storage and portability)

Scalability (infrastructure)

Abstraction (rapid prototyping)

Availability (networks)

Fault tolerance (user-friendliness)

Event-based (modular architecture)

Works in real time (speed and performance)

While a view of the logical functions of the Internet-of-Things characteristics provides a useful summary, it does not reflect well the impact and benefits that the concept offers. For example, it does not capture the ability of Internet-of-Things systems to process large quantities of data and to infer high value information or knowledge which enable smartness, by supporting effective decision-making. Today’s view of the Internet-of-Things, especially from an industry perspective, is very much one of a network of ‘systems of systems’. In this context, the characteristics of a modern Internet-of-Things system can be summarised better as comprising:

devices (including physical or virtual, power, processing)

data capture (including sensing and data exchange)

communications (including network connectivity, protocols, authentication and encryption)

analysis (including big data analytics, AI and machine learning)

information (including insightful forecasts and predictions)

value (including operational efficiency, improvement in performance)

3.Generations of Internet-of-Things: Tangible physical objects

As described in the previous section, a current Internet-of-Things eco-system spans factors which range from hardware through communication, storage, analytics, and decision-making processes to the provision of value. In this section, we aim to describe some of the pioneering Internet-of-Things devices that were developed before, and up to, the publication of the ITU-UN report in 2005 [1]. For the purpose of this paper, we have only considered physical IoT devices, classifying them into 4 generations:

First Generation (1980s)

Second Generation (1990s)

Third Generation (2000s)

Fourth Generation (2010s)

In doing this we considered IoT devices as having the following eight characteristics:

Sensing (S)

Processing (P)

Connectivity (C)

Context-Awareness (CA)

Internet (I)

Internet Controlled (IC)

Mobile Controlled (MC)

Intelligence, self-configuring, self-monitoring (Int)

Table 3 lists some of the most prominent Internet-of-Things devices developed in or before 2005, which was the most intensive and open research period and is argued to have shaped and defined today’s more commercial Internet-of-Things market. Our research showed that a total of 11 devices were developed in this period and the vast majority of them were inspired by everyday objects: from smart platform shoes, developed in 1985 (first generation), to a table, developed in 2004 (third generation). These early Internet-of-Things devices exhibited between 1 and 5 of the characteristics we considered (listed above), apart from one, TESA (a plant care device) developed in 2003, which included 7 out of 8 of these characteristics. Currently, Internet-of-Things devices are widely available on the market, providing an end-to-end solution to users, including functionalities such as sensing, monitoring, and decision-making. Any attempt to draw up a list of them would be fruitless, since it would be large and commercially oriented. Thus, we omit listing IoT devices developed from 2006 onwards.

Table 3

A list of historic IoT devices

| Device name | Group | Year | S | P | C | CA | Int | I | IC | MC | Object |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Shoes (The Eudaemonic Pie) | Thomas A. Bass | 1985 | N | Y | N | N | N | N | N | N | Platform shoe |
| Toaster | John Romkey | 1990 | N | Y | Y | N | N | Y | Y | N | Toaster |
| Coca Cola machine (developed in 1980s) | Carnegie Mellon University | 1990–1992 | Y | Y | Y | N | Y | Y | N | N | Coca Cola vending machine |
| The Active Badge Location System | Roy Want, Andy Hopper, Veronica Falcão and Jonathan Gibbons | 1992 | Y | N | Y | N | N | Y | N | N | Badge |
| Smart clothing | Steve Mann | 1996 | N | N | Y | N | Y | Y | N | N | Camera + glasses |
| MediaCup | Hans-W. Gellersen, Michael Beigl, and Holger Krull | 1999 | Y | Y | Y | Y | N | N | N | N | Cup |
| Wearable sensor badge and sensor jacket for context awareness | J. Farringdon (Philips Res. Labs., Redhill, UK) et al. | 1999 | Y | Y | N | Y | N | N | N | N | Garment |
| Internet Digital DIOS | LG | 2000 | Y | Y | Y | N | N | Y | N | N | Fridge |
| TESA | J. Chin & V. Callaghan | 2003 | Y | Y | Y | N | Y | Y | Y | Y | Glass box |
| Intelligent Spoon | MIT – Connie Cheng and Leonardo Bonanni | 2003 | Y | Y | N | N | N | N | N | N | Spoon |
| The Drift Table | William W. Gaver et al. | 2004 | Y | Y | N | Y | N | N | N | N | Table |

4.Some illustrative case studies

As was discussed earlier in this paper, the Internet-of-Things can be characterised as an application that makes use of one or more relatively small, inexpensive networked computers equipped with sensors and/or actuators that are managed by people and/or software processes supporting a wide range of activities. Typically, the science supporting Internet-of-Things systems involves embedded-computing, the Cloud, software engineering, distributed computing, AI and HCI. The aim in writing this section is to provide an empirical (and informal) insight into the historical development of some Internet-of-Things platforms which we hope will be of interest to those working in this area in the modern era.

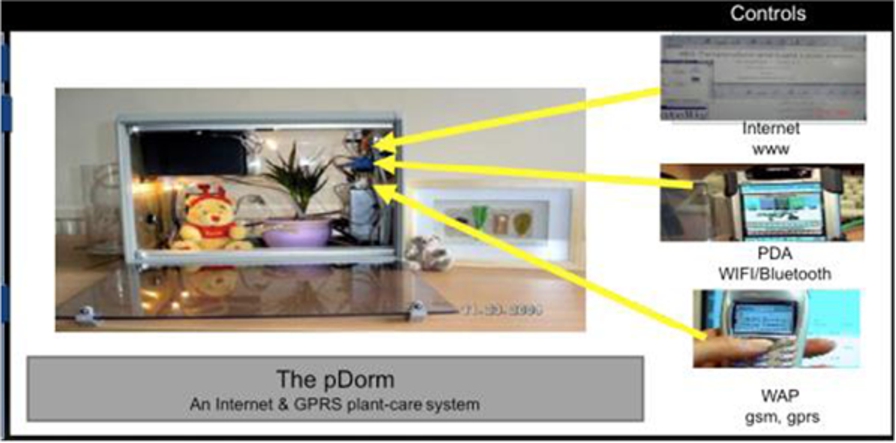

Fig. 2.

The pDorm.

4.1.pDorm (plant-dormitory)

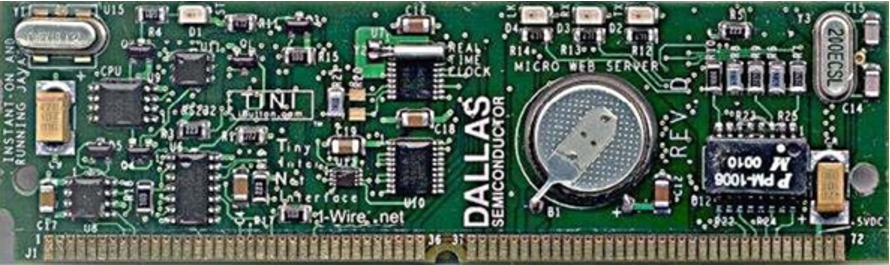

The pDorm (aka TESA – Towards Embedded-Internet System Applications), shown in Fig. 2, was one of the earliest examples of an Internet-of-Things application [29]. Developed in 2003, it took the form of a novel “botanical plant care” appliance, which explored the feasibility of applying the then newly emerging low-cost Embedded-Internet devices to create a novel generation of products that could be accessed and controlled from anywhere, anytime, via a web-based interface. The principal challenges addressed by TESA were how to design an Internet-of-Things computing architecture that supported appliance control, a multimode heterogeneous client interface, and mixed wired and wireless communication (including access via mobile phone, before the era of smart phones). The system was presented in a custom-made box containing lighting (top and bottom), a heater, a fan, and a temperature and moisture sensor, all attached to an embedded-internet board called TINI, manufactured by Dallas Semiconductor (Fig. 3). TESA supported wired (Ethernet) and wireless (Bluetooth and Wi-Fi) communications over an IP network and could be accessed via 3 different interfaces, each with a different resolution, which were selected automatically according to the client device’s screen resolution.

Fig. 3.

TINI board.

Programming Internet-of-Things systems at that time was the biggest challenge, due to a lack of out-of-the-box tools as technologies were constantly being refined, improved and updated. Developers and users had little choice but to work round various constraints. The major design issues faced in completing this project were:

Lack of standards (reducing availability of off-the-shelf components)

Lack of primitive tools (increasing the need to design everything from the bottom up)

Limited scalability

Limited economies of scale (making systems more expensive)

Lack of crowd based communities (reducing the level of support available)

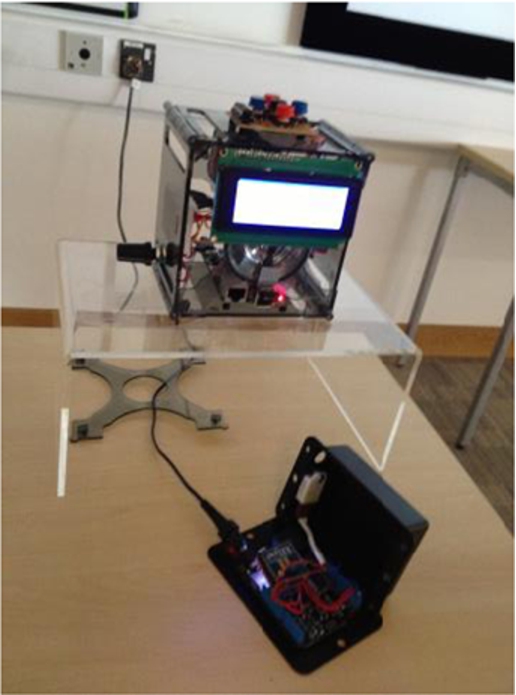

4.2.The smart alarm clock

This project, ‘The Smart Alarm Clock’ (Fig. 4), was undertaken in 2013, some 10 years after the development of pDorm, and provides a good insight into how technology had changed, and the trends that were emerging as the Internet-of-Things moved forward. The Smart Alarm Clock was developed by Scott [83], who had identified that there was no commercially available smart alarm clock with the functionality to dynamically and autonomously adjust alarm times based on weather and traffic conditions. Examples of the more advanced Internet clock products at the time included the La Crosse WE-8115U-S Atomic Digital Clock, which featured indoor/outdoor temperature and humidity readings, and the Dynamically Programmable Alarm Clock (DPAC), designed by students at Northeastern University in Boston, MA, which was a self-setting alarm clock that used Google Calendar appointments to set alarm times and automatically adjusted them based on current traffic and/or weather conditions. However, while many of these products sought to use external data, none had fully exploited the potential for real-time Web services that ranged from conventional gathering of data from web-feeds through to accessing Internet-of-Things environment sensors that may be part of private or public spaces.

Fig. 4.

The smart alarm clock prototype.

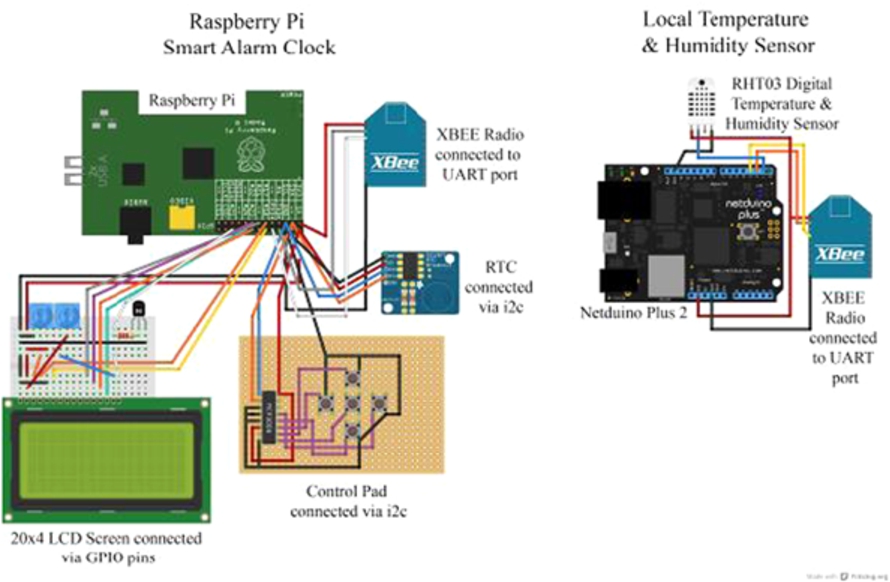

Fig. 5.

Connection diagrams of the smart alarm clock.

Thus the concept of the Smart Alarm Clock (Fig. 5) was developed, with distinguishing features that included rule processing, local sensor readings and integration with web services, all combined in a single unit that harnessed the full power of the Internet (including the Internet-of-Things) to determine the optimal time for its owner to be awakened in order to reach their predetermined location at the right time. The alarm time adjustment was, for example, dependent on the severity of traffic conditions, the weather forecast and actual local sensing.

Fig. 6.

The BReal set up with an ImmersaVU station being used with a set of Raspberry Pi based Internet-of-Things smart objects.

For instance, readings from the local temperature sensor were used to further adjust the alarm time to allow time for motorists to de-ice their vehicles, if necessary. Since some 10 years had passed since the development of the pDorm, many of the issues faced back then, such as a lack of standard low-cost platforms, had been overcome with the advent of hardware such as the Arduino and Raspberry Pi, which had a substantial crowd of users and off-the-shelf peripherals.

In this case the project was built using a Raspberry Pi and was based on XBee wireless radio networks (low-powered data transmission with a well-documented API). In the 10 years since the pDorm, programming support had also improved with, for example, developers’ forums dedicated to particular platforms becoming available. These forums allowed groups of like-minded individuals to form their own communities, where they shared their expertise, ideas and experiences. The major design challenges faced in this project were:

Choosing the best Internet-of-Things platform for the application from the myriad offerings available

Choosing the development tools for rapid prototyping (somewhat linked to the choice of platform)

Choosing the crowd to be part of (this can be a balance between support from large crowds and innovation from newer products with fewer users)

Provision of some user customisation (a trend that had grown since the earlier pDorm product)
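The alarm-time adjustment described in this section can be illustrated with a minimal sketch. It is not the rule set used in the original prototype [83]: the `Conditions` fields, the function name `adjusted_alarm` and the two 10-minute allowances are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Conditions:
    traffic_delay_min: int   # extra travel time from a traffic web service (assumed input)
    rain_forecast: bool      # from a weather web service (assumed input)
    local_temp_c: float      # reading from the clock's own temperature sensor


def adjusted_alarm(arrival: datetime, routine_min: int, travel_min: int,
                   c: Conditions) -> datetime:
    """Work backwards from the required arrival time to an alarm time."""
    extra = c.traffic_delay_min
    if c.rain_forecast:
        extra += 10          # hypothetical allowance for slower travel in rain
    if c.local_temp_c <= 0.0:
        extra += 10          # hypothetical allowance for de-icing the vehicle
    return arrival - timedelta(minutes=routine_min + travel_min + extra)


# A frosty morning with a 15-minute traffic delay and no rain forecast.
alarm = adjusted_alarm(
    arrival=datetime(2013, 1, 15, 9, 0),
    routine_min=45,
    travel_min=30,
    c=Conditions(traffic_delay_min=15, rain_forecast=False, local_temp_c=-2.0),
)
```

In this sketch each external source only ever moves the alarm earlier; a fuller rule processor would presumably also cap the adjustment and let the owner tune each allowance.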

4.3.BReal (a blended reality approach to the Internet-of-Things)

The Internet-of-Things does not stand alone as an innovation but, rather, co-exists with other emerging technologies, one being virtual or mixed reality. Virtual reality shares many similarities with the Internet-of-Things in that both provide network components that are used as the building blocks of inhabitable worlds. Moreover, Internet-of-Things devices can have virtual representations, allowing them to exist in both the real and virtual world. Further, it is possible to build worlds where some of the Internet-of-Things components are real, and some are virtual. Such environments are called Mixed Reality. Such a hybrid Internet-of-Things environment was built at the University of Essex during phase four of the historical development of the Internet-of-Things described earlier (i.e. 2015–2017, the smart period).

The project was called BReal, an amalgamation of letters from ‘Blended Reality’ [76]. The environment consisted of 3 main parts: (i) the physical world, where the user and the xReality objects are situated; (ii) the virtual world, where the real world data is reflected using the virtual object; and (iii) a human-computer interface (HCI) which captures the data obtained in real-time via the xReality object, processing it so it can be mirrored by its virtual object and thereby linking both worlds. Figure 6 shows the BReal set up, which consisted of an ImmersaVU station running Unity (the VR environment) and a set of Raspberry Pi based Internet-of-Things smart objects.

To mirror and synchronise virtual representations, the system used SmartFoxServer 2X, a middleware that is more often used to create large scale multiplayer games and virtual communities.

The major design challenges faced in this project were:

Devising computational paradigms and mechanisms to enable Internet-of-Things devices to become smart-objects

Creating visual representations and simulations of Internet-of-Things objects

Maintaining real-time synchronisation between the real and virtual Internet-of-Things objects (tests were conducted between countries separated by many thousands of miles).

While the technical challenges facing this project were considerable, the potential benefits were also enormous. For example, using this approach it is possible to develop and experiment with innovative Internet-of-Things designs ahead of any expenditure on manufacturing and deploying real devices. Also, for developing new Internet-of-Things systems, the collaborating developers can be geographically separated, which is particularly useful for large multinational companies where team members may be distributed around the world. In addition, with the current trend towards centralising Internet-of-Things services on cloud-based architectures (e.g., data analytics, management etc.) the approach is highly compatible with such schemes. Finally, it is worth noting that the core of the BReal innovative vision arose from the Science-Fiction Prototyping methodology described in the introduction of this paper. A Science Fiction prototype called “Tales from a Pod” was written that described students in a future time using Virtual-Reality and the Internet-of-Things in a futuristic learning environment that became the inspiration for this work [19]. The sheer diversity of Internet-of-Things devices and functionalities makes innovation both challenging and exciting since the possibilities are almost endless. Thus, marrying the Internet-of-Things with a powerful innovation tool, such as Science Fiction Prototyping makes a powerful combination. One outcome of this project is that one of the members of the BReal team is now introducing related techniques as a means of supporting BT field engineers to maintain the vast UK telecommunication infrastructure.

5.Internet-of-Things in user-centred and smart environments perspective

The above mini case descriptions were offered as a snapshot of student level projects in the Internet-of-Things area with the intention of giving the reader a feel for the historical issues involved in the design and development of Internet-of-Things systems, from a practitioner’s perspective. In the following sections we will move the discussion forward by providing some conceptual background for different approaches used within an Internet-of-Things smart environment context.

5.1.Customising IoT environments: A user-centred approach

While it is a good achievement to present society with transformative technologies, such as the Internet-of-Things, it is also necessary to provide support for people so they can harness these technologies to their benefit. A particularly difficult, but important, challenge concerns the development of mechanisms to enable users to customise their Internet-of-Things spaces and services. Currently there are three principal approaches for users: (a) let others do it for you (e.g. commercial companies), (b) customise the product oneself through suitable end-user tools or, finally, (c) employ some form of Artificial Intelligence and let the systems do it for you. In this section we will discuss these approaches, illustrating them through examples of research projects.

5.2.User centric dimensions of the Internet-of-Things

User-centric approaches, as the name suggests, put matters relating to the user at the heart of the process under consideration, in this case the design of Internet-of-Things products. Behavioural research has shown that the underlying motives driving human behaviour change little over time, despite the rapid advances in enabling technologies and the modes of provision. As DiDuca explained, “people will live as they have always lived in an (Internet-of-Things) environment, therefore the technology will have to adapt to them rather than designers relying on users’ having to become familiar with the technology in order to fulfil a need that they have” [40]. For example, people always want to communicate, whether it is in-person, via phone, SMS, email, social media or using some yet to be invented technology. This is a very helpful observation since it allows innovative propositions to be created around core human desires and helps ensure that technology delivers what people truly need. This principle of putting people’s likes, desires and behaviours at the focal point of product research is the core principle of user-centric design, which emerged in the early 1990s with work such as Jordan’s [63] Pleasures Framework, and Sanders’ [82] Experience Design approach. With regard to the Internet-of-Things, these ideas led to Chin’s Pervasive-Interactive-Programming paradigm (the first example of programming-by-example being applied to the Internet-of-Things in a physical environment), which transformed users from passive into active designers of innovative “products”. Placing users at the core of the design process goes beyond simply allowing users to create highly personalised services (the products of their creation) but, to some extent, removes some of the ‘black-box’ mystique of technology and much of the technology-phobia (e.g. lack of understanding, loss of control, and compromising privacy) by making users stakeholders in Internet-of-Things product design.
Given the pervasive nature of the Internet-of-Things, with billions of devices in the world and potentially hundreds in our own living space, these are important considerations for those who would like to see technology deliver its full potential to society whilst preserving the rights and freedoms of individuals [24]. Inevitably this raises issues relating to the balance of autonomy and control enjoyed by people and technology; for example the extent of control allowed to Artificial Intelligence versus the individual. These issues are discussed in the following sections.

5.3.Pervasive End-User Programming

Programming is an essential activity in creating Internet-of-Things applications. While hardware can often be purchased off-the-shelf, programming is difficult to avoid. One of the techniques that can come to the aid of would-be programmers of the Internet-of-Things, especially people with weak programming skills, is End-User Programming. The technique is characterised by the use of a combination of methods that allow end-users of an application to create “programs” without needing to write any code [23].

Examples of such approaches include the jigsaw metaphor [54], which enabled novice programmers to snap together puzzle-like graphical representations of program constructs presented to users on a range of devices including smartphones [9]. Another example is Media Cubes [53], which creates a tangible interface in which users manipulate iconic physical objects (representations) to build context-aware Internet-of-Things-based applications. A further technique has emerged that dispenses with any kind of representation in favour of demonstrating the required behaviour by directly interacting with Internet-of-Things gadgets; it is known by various names including ‘Programming-by-Example’ or ‘Programming-by-Demonstration’ [33]. It functions by reducing the gap between the user requirements and the delivered program functionality by merging the two tasks. These ideas are closely related to visual programming languages such as Scratch and Alice, which have become popular simplified programming tools for children.

Another technique, ‘Pervasive-Interactive-Programming’ (PiP), derived from ‘End-User Programming’, aimed to create an intuitive programming platform that utilised the user’s target physical environment, with appropriate GUI support, to empower end-users to create programs that customised collections of Internet-of-Things devices (e.g. to behave in ways their owners wanted, without requiring any detailed technical knowledge or writing any code). In comparison to the case study presented earlier (Section 4.1, the pDorm), this project also addressed the programming of the functionality of a box, in this case a much larger one: a building or, more specifically, a smart home.

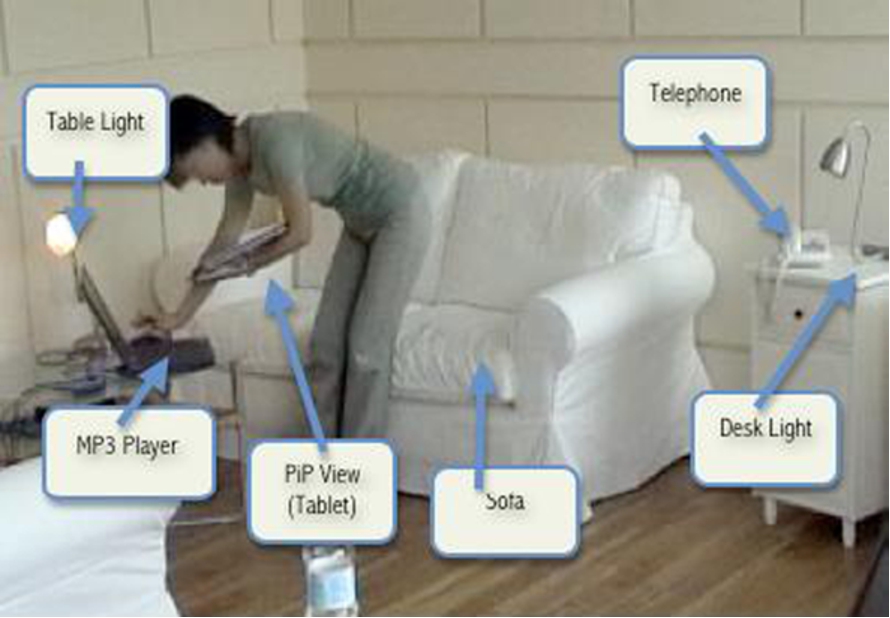

Fig. 7.

PiP being used to configure an Internet-of-Things enabled domestic environment.

Figure 7 shows a picture of a person using PiP to configure an Internet-of-Things enabled domestic environment. In this instance the person is creating a set of rules that govern the behaviours that occur when the phone rings while they are sitting on the settee watching a streamed movie, possibly in the evening with low lighting. In this case the user is trying to set environment actions which respond to an incoming telephone call by raising the light level and pausing the video stream, thereby allowing the occupant to deal with the incoming call. The difference from the earlier cases is that this project deals with orchestrating the functionality of a collection of Internet-of-Things devices (a distributed set of embedded-computing devices), rather than that of a single device. The result of this programming is a rule-based object called a MAp (meta-application) that can be shared or traded with the wider crowd of PiP users. This is an example of the emerging areas of smart-homes and smart-cities. Programming distributed computers has traditionally been seen as more difficult than programming a single computer, so this project is a good illustration of how programming the Internet-of-Things can be simplified to the level that non-technical users can generate creative designs. With the aid of AI and machine learning techniques, the approach can be enhanced by learning the users’ behaviour, thereby reducing the cognitive load and personalising the environment.
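To make the idea of a rule-based MAp more concrete, the following is a minimal sketch of the telephone scenario above. The internal representation PiP actually uses is not described here, so the `Rule` and `MetaApplication` classes, the device names and the state strings are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    trigger: tuple[str, str]     # (device, state) event that fires the rule
    conditions: dict[str, str]   # other device states that must hold
    actions: dict[str, str]      # device -> state to set when the rule fires


@dataclass
class MetaApplication:
    """Hypothetical stand-in for a shareable MAp: a named bundle of rules."""
    name: str
    rules: list[Rule] = field(default_factory=list)

    def on_event(self, device: str, state: str, env: dict[str, str]) -> list[str]:
        """Record the event in env, apply matching rules, return the devices changed."""
        env[device] = state
        changed: list[str] = []
        for rule in self.rules:
            if rule.trigger == (device, state) and all(
                env.get(d) == s for d, s in rule.conditions.items()
            ):
                env.update(rule.actions)
                changed.extend(rule.actions)
        return changed


# The scenario from Fig. 7: the phone rings while a movie is playing.
movie_call = MetaApplication(
    name="answer-call-during-movie",
    rules=[Rule(trigger=("phone", "ringing"),
                conditions={"tv": "playing"},
                actions={"light": "bright", "tv": "paused"})],
)
env = {"phone": "idle", "tv": "playing", "light": "dim"}
changed = movie_call.on_event("phone", "ringing", env)
```

Because the rules are plain data rather than code, an object of this kind could be serialised and passed between users, which is the property that makes sharing or trading MAps plausible.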

5.4.Harnessing artificial intelligence

We know from our own experience of life that intelligence is a continuum ranging from dumb to smart. The same is true for populations of Internet-of-Things devices, where some are more capable than others. In life, we all want to be the smartest but, in the world of technology, people can have strong views about how intelligent they want their technology to be. At one extreme, advocates of a technological singularity warn of super-intelligent robots emerging that dispense with their human creators [27]; at the other, more positive voices see artificial intelligence as enhancing the quality of our lives by removing the cognitive loads required to deal with technology (e.g. simplifying interaction with technology) or enhancing our reasoning and decision-making capabilities [21].

In the Internet-of-Things world, Artificial Intelligence is applied at two levels; one is concerned with controlling individual devices (e.g. embedded-agents) while the other harnesses the data accumulated from populations of devices (e.g. big-data). In the big data world, Artificial Intelligence is applied in the form of machine learning to harness data generated by individual devices, and to learn users’ behaviours so as to provide a personalised experience to them. An example of such work is Anglia Ruskin’s recent Hyperlocal Rainfall Project, funded by the UK government (and partnered with industry), which sought to harness environmental sensor information combined with users’ cycling data to provide highly personalised route recommendations to the users. The focus of the project was to encourage more users to take up greener modes of transport by providing accurate localised (and personalised) weather and route recommendations, via a mobile app. The project expanded from its initial target of one city to cover the whole of the UK.

Artificial Intelligence within individual devices relies on an approach called embedded-agents. This is a concept proposed in the late 90’s by one of the authors, Callaghan, who devised an approach that allowed meaningful amounts of intelligence to be integrated into computationally small devices. Essentially, he observed that robots and seemingly static Internet-of-Things devices were both moving within a similar sensory space, and that the techniques (behaviour-based Artificial Intelligence) that endowed mobile robots with robust real-time performance were compact enough to work in Internet-of-Things devices (as against using the massive computational resources of cloud servers) [26].

5.5.Intelligent agents and adjustable autonomy

Given the potential for ‘AI-Phobia’, and its effect on commercialising Internet-of-Things applications, some years ago British Telecom (UK) commissioned research to understand people’s attitudes to the role of intelligent devices in its customers’ lives. The study involved creating special smart (intelligent) Internet-of-Things devices that, in effect, had a knob on them which allowed the level of device intelligence or smartness to be set, much like you might set the volume of a hi-fi system or the temperature of a home. Typically, intelligence (in machines) is seen as comprising elements of reasoning, planning and learning.

Learning is an especially powerful element of artificial intelligence, since it enables a system to learn and improve its own performance, without human assistance (ultimately, enabling autonomous self-programming systems). The BT study chose to investigate this topic through the concept of machine-autonomy, which broadly concerned how independently of users the technology might operate [8]. The researchers hypothesised that there were various reasons why people may want to vary the intelligence or the amount of autonomy of their Internet-of-Things systems. For example, the amount of control a person wanted to cede to Artificial Intelligence might depend on that person’s mental or physical state (which may vary according to context, mood, age, health, ability etc.). Also, as the previous section on End-User Programming argued, since people are intrinsically creative beings, there is a possibility that too much computerisation might undermine this pleasurable aspect of life. Other reasons they hypothesised included the shortcomings of Artificial Intelligence in accurately predicting a person’s intentions (people may not always want to do what they did previously) and, of course, when predictive Artificial Intelligence makes mistakes, it can be very annoying! Finally, they pointed to various surveys which suggested that people were fearful of too much intelligence and have a strong desire to remain in control [7]. The work sought to explore these hypotheses by conducting a study in the University of Essex iSpace, a purpose-built experimental IoT environment in the form of a two-bedroomed apartment, see Fig. 8.

Fig. 8.

The Essex iSpace.

The aim of the study was to gain an understanding of people’s opinions relating to how smart Internet-of-Things devices should be. The results produced findings which, at first glance, were intuitive in that the more “personal” an Internet-of-Things function was, the more the participants needed direct control over it, whereas the more “shared” an Internet-of-Things function was, the less control they required. Thus, for example, participants wanted explicit control of their entertainment system but were happy to delegate climate control to Artificial Intelligence. When the results were explored in greater depth it was clear that people’s reasoning was more complex, with some of the participants displaying a mental risk-versus-benefits calculation in their decisions to use any particular function. As explained earlier, Artificial Intelligence is not perfect and is error prone. The cost of errors can vary from being just a mild irritation (e.g. in the case of the temperature being slightly wrong), to severely annoying (e.g. where the agent made a wrong choice of music). These findings were consistent with those of other researchers and offered an important lesson to Internet-of-Things system designers: if Artificial Intelligence technology is to be utilised in Internet-of-Things applications, it should not undermine the users’ control or compromise their privacy. While the initial aim of the ‘Adjustable Autonomy’ work was to provide a mechanism to study the use of Artificial Intelligence in the Internet-of-Things, the ability to adjust the amount of intelligence an Internet-of-Things gadget or system offers was considered by users to be a desirable feature, and therefore a commercial asset to companies. However, this paradigm has yet to surface in the commercial marketplace, which is showing a marked tendency to move away from distributed and localised control, to centralised systems and control.
Clearly this is a complex topic and such a short section cannot adequately discuss the issues; thus, interested readers are referred to other papers, from the authors and others, that describe the methodology and studies in much greater detail [6,8,62].
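To make the notion of adjustable autonomy more concrete, the following minimal Python sketch shows one way a per-function autonomy setting might be represented. It is purely illustrative and is not the mechanism used in the iSpace study: the three autonomy levels, the names `Autonomy`, `IoTFunction`, `default_autonomy` and `handle_proposal`, and the rule that “personal” functions default to manual control while “shared” functions default to automatic control are all assumptions made here to mirror the findings described above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels, from fully manual to fully automatic."""
    MANUAL = 0      # the agent never acts; the user does everything
    SUGGEST = 1     # the agent proposes an action and waits for approval
    AUTOMATIC = 2   # the agent acts without asking


@dataclass
class IoTFunction:
    name: str
    personal: bool       # True for "personal" functions, False for "shared" ones
    autonomy: Autonomy   # current setting, adjustable by the user at any time


def default_autonomy(personal: bool) -> Autonomy:
    # Assumed default: personal functions start under explicit user control,
    # shared functions start delegated to the agent.
    return Autonomy.MANUAL if personal else Autonomy.AUTOMATIC


def handle_proposal(function: IoTFunction, user_approves: bool = False) -> str:
    """Decide what happens to an action proposed by the agent."""
    if function.autonomy == Autonomy.MANUAL:
        return "ignored"
    if function.autonomy == Autonomy.SUGGEST:
        return "applied" if user_approves else "suggested"
    return "applied"


music = IoTFunction("entertainment", personal=True, autonomy=default_autonomy(True))
climate = IoTFunction("climate control", personal=False, autonomy=default_autonomy(False))

print(handle_proposal(music))    # ignored
print(handle_proposal(climate))  # applied

# The user later decides to let the agent make music suggestions.
music.autonomy = Autonomy.SUGGEST
print(handle_proposal(music))    # suggested
```

The point of the sketch is that autonomy is a setting owned by the user rather than a fixed property of the device, so the same function can move between manual, advisory and automatic operation as the user’s context or preferences change.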

5.6.Trust, privacy and security

The recent (2018) revelation that the UK’s Cambridge Analytica was able to harvest and exploit 50 million Facebook profiles, together with the earlier 2013 disclosure that the USA’s National Security Agency was running a programme of global surveillance of foreign and U.S. citizens, made the public and politicians aware of the risks that Internet-based technologies pose to society. Even the inventor of the World-Wide-Web, Sir Tim Berners-Lee, has joined the voices of concern, saying “Humanity connected by technology on the web is functioning in a dystopian way” and advocating the need to “continue fighting to keep the Internet open and free”, which he believes can be addressed by stakeholders signing up to a “Contract for the Web” that he hopes will be available in 2019 [12]. Furthermore, he makes a plea to “decentralise the web”, explaining “It was designed as a decentralised system, but now everyone is on platforms like Facebook”, which can have a polarising effect that threatens democracy itself. These concerns are, of course, not new: many years earlier, reports from the European Parliament Technology Assessment unit [42] and the UK Information Commissioner’s Office [55] highlighted these susceptibilities and the consequent need for debate on how society should balance the convenience that new technology affords with the need to preserve privacy. Indeed, from the outset, the Internet-of-Things community had raised such concerns themselves, taking these issues to the United Nations Habitat, World Urban Forum, explaining the risks to privacy that networked technologies such as the Internet-of-Things, pervasive computing, ubiquitous computing, and intelligent environments posed to the citizen or government, and advocating the need for international regulation [34]. Sadly, no significant debate occurred (none that led to regulatory changes) until the highly publicised transgressions of people’s privacy reported above surfaced.
Before the Facebook Cambridge-Analytica debacle, most of the debate addressed the more visible aspects of technology and privacy, such as surveillance cameras, identity or loyalty cards, Internet search engines and RFID tags. However, since then the debate has advanced, driven by the rising commercial interest in technologies like artificial intelligence and Big-Data. While the Internet-of-Things is not centre stage in this debate, Internet-of-Things devices are deployed in the order of billions, reaching into our own homes and stretching out to critical services (e.g. hospitals, utility companies, defence), which makes them key players in any future privacy and security considerations. The risks to Internet-of-Things systems are manifold, ranging from unauthorised access (and malicious activity) to privacy abuse of the data that the Internet-of-Things generates (e.g. monitoring and disclosure of private behaviours). Beyond this there are issues relating to Artificial Intelligence, which is both embedded into Internet-of-Things devices and used within centralised analytical engines. Beyond the ‘here and now’ there are somewhat futuristic (and controversial) discussions about a potential technological singularity (that Artificial Intelligence developments may lead to machines being smarter than humans) arising through the massive distribution of embedded Artificial Intelligence into Internet-of-Things devices. In addressing these issues, many researchers argue we are caught in a paradox: in order to be useful, Internet-of-Things sensors have to collect data, but once ‘the system’ knows, others can know too, i.e. there is a direct threat to our privacy.
The obvious solution is to introduce careful planning, design and regulation of the Internet-of-Things market. However, the highly dynamic nature of this market makes it very challenging for governments, meaning that legislation inevitably trails technology, leaving the public at risk of having their trust, privacy and security compromised from time to time. Sir Tim Berners-Lee’s ‘Contract for the Web’ [12], which aims to protect people’s rights and freedoms on the internet, would seem like an excellent start on the path to addressing these issues. This is particularly pertinent to this discussion as the web, in the form of web-appliances and embedded-web servers, is another mechanism that is used to create Internet-of-Things architectures [45]. In addition, many of Tim Berners-Lee’s concerns also relate directly to the management of the Internet and hence the Internet-of-Things, since the two technologies are interdependent. Clearly, not addressing these issues is unthinkable: with unfettered commercial development of the Internet-of-Things, society risks creating a modern equivalent of Bentham’s Panopticon [88], exposing people to a form of “Big Brother” society [24] where some parties can monitor our every move. This is probably not the kind of society most ordinary people would like to see IoT developments lead to. Thus, while the Internet-of-Things promises great benefits to society, without prudent oversight it raises significant new dangers for individuals and society as a whole. As researchers, we have an important role to play in ensuring technology is developed in a morally and ethically responsible way, as work by Augusto et al. [6] and Jones et al. [62] most effectively illustrates.

5.7.Adoption, acceptance and appropriation of new technology

The relationship between human behaviour and technology can be viewed from different perspectives. For instance, the sociological perspective looks at the use of technology and its effects on society [50,71]; the social-psychological perspective mainly looks at explanatory factors of technology use at the individual level [38,93]; in the socio-cultural perspective, social constructivism plays a major role [13,72] and people and technology are seen as co-constructing one another; and the philosophical perspective examines human-technology relationships [56]. All these perspectives provide a specific and valuable contribution to our understanding of the relationship between human behaviour and technology.

5.7.1.Adoption

In his diffusion of innovations theory [81,92], Rogers describes, from a sociological perspective, the process by which an innovation (an object, idea, practice or service) diffuses within a social system. Innovations entail uncertainties, because the outcomes of adopting them are not known in advance. He argues that people are therefore motivated to search for both objective and subjective information about the innovation. Diffusion research focuses on various elements, such as:

the causes of the spread, namely the innovativeness of societies and cultures

the characteristics of the innovation itself

the decision-making process of individuals when they consider adopting an innovation

the characteristics of individuals who may adopt an innovation

the consequences for individuals and social system (or society) that adopt the innovation

the communication channels that are used in the adoption process [96].

We argue that the adoption process concerns not only the last step of the decision-making process (the final decision), but the entire decision-making process. This includes the exploration of and knowledge about the innovation, awareness of the innovation, the attitude and intention to adopt, the considerations and eventually the decision itself. In practice, we often see that the adoption process of innovations is reduced to adoption in the narrow sense, namely only the last step of the decision-making process: shall we, as an individual (or organisation), adopt or not adopt? In those cases, other important aspects of the adoption process are often lacking. As a result, the choices on which the decision is based are only partially substantiated. This is one of the reasons why both individuals and organisations often do not know how to deal with new technology and how to embed it in a given context.

In recent years, adoption and diffusion research has been strongly dominated by the perspective of management information science, where the focus lies on the use of technology acceptance models [38,93] to determine the probability of adoption by individuals [96]. Even though Rogers’ diffusion of innovations theory is comprehensive and was originally intended to investigate all kinds of innovations in society as a whole, the rise of computers has given diffusion research an organisational embedding. A construct such as facilitating conditions in the unified theory of acceptance and use of technology (UTAUT) model [17,94] sheds light on this organisational embedding. This construct indicates the extent to which an individual thinks a technical infrastructure exists in his or her organisation that can support the use of a new technology.

5.7.2.Acceptance

In the above section, the adoption of new technology was considered at the individual level. Historically, however, much research on technology acceptance has been conducted within an organisational context, because that is where many major innovations are introduced. Different perspectives describe the acceptance process of technology within organisations, namely the organisational perspective, the technological perspective, the economic perspective and the (psychological) user perspective [15].

The organisational perspective is characterised by factors related to the nature and environment of the organisation. This includes factors such as the environment, structure and culture of an organisation, as well as organisational processes and the vision of strategy and policy. All these factors influence how organisations deal with the acceptance process when they use new technology or want to start using it. The technological perspective focuses on the interaction that takes place between technology and organisation. This especially applies to technology as an enabler of organisational processes, that is, technology that supports redesigning or modifying organisational processes [15]. The third, economic perspective focuses on the costs and benefits associated with the acceptance process of technology. The (psychological) user perspective, finally, focuses on the social-psychological aspects of technology choices, and on the influence of these choices. By focusing on a particular perspective in the various phases of technology acceptance, more insight can be gained in that area. In this context, [52] speak of a four-phase model of ICT diffusion in organisations, with the phases adoption, implementation, use, and effects. Following [15,81] also equate adoption with the phase of exploration, research, consideration and decision-making to bring a new innovation into the organisation [5].

Technology acceptance covers the process that begins with becoming aware of a new technology and ends with incorporating the use of that technology in one’s daily life [52]. This implies that the acceptance process is wider than the adoption process alone and comprises multiple phases: it relates not only to the phase of adoption, but also to the phases of implementation, use and effects. The acceptance process of new technology, like the adoption process, mainly takes place on the cognitive level. Finally, in the appropriation process, the cognitive and affective aspects come together for the user of new technology. Appropriation of new technology starts with a positive adoption process that results in an implementation process in which (long-term) use of that technology produces certain effects that, in turn, impact the different contexts in which an individual moves.

5.7.3.Appropriation

When technology acceptance has taken place, the actual use of the technology may cause people to start using the technology differently than was intended by the designers. This is a reconstruction of the technology: people appropriate the technology. Within the perspective of mutual shaping of technology, there are several approaches, such as the social construction of technology [75], semiotics [4,100] and the domestication approach [69,86]. These approaches share the belief that the technology and its users influence each other. It is emphasised [14] that the crucial contribution of the mutual shaping of technology is not “that every user’s reconstruction should always be analytically deconstructed, but that anyone could be deconstructed if necessary”. Once people have accepted the technology, and have thereby gone through the phases of adoption, implementation, use and effects, another phase can be added to the technology-acceptance process. Technology appropriation arises because people include technology in their daily lives, and because not only do people shape the use of technology to their wishes, routines and activities (and thus their behaviour), but the technology also shapes itself to its users. During technology appropriation, a user more or less takes possession of the technology. Poole and DeSanctis describe technology appropriation as “the process of users altering a system as they use it” [76]. This has been taken further in [71], which indicates that technology has a number of structures that allow it to mediate human actions. Technology influences human actions, but human actions in relation to the technology are also controlled, for example by institutional conditions. As a result, consequences arise that influence the relationship between man and technology. [28] stress that technology transforms through appropriation: technology as it was designed changes through the appropriation process into technology as it is used.