Assessing Implementation Fidelity of a School-Based Crisis Prevention Program with an Ex-Post-Facto Design: The NETWASS FOI Assessment System

Abstract

This research paper presents an operationalization procedure for measuring fidelity of implementation (FOI) of a school-based crisis prevention program. The implementation literature recommends that program developers specify core components of an intervention that are directly related to a program’s theory of change and need to be implemented with high FOI. This approach allows stakeholders some flexibility to adapt a program to individual circumstances yet helps assure that the intended outcomes are achieved. We trained 3,473 school staff in 98 German schools in the NETWorks Against School Shootings (NETWASS) program. Following the CORE cycle, we conceptualized 12 core components and operationalized relevant FOI dimensions of dosage, quality, adherence, and responsiveness. FOI was measured ex post facto, i.e., after program implementation was completed, and separately for three distinct stages of implementation. Finally, we identified theoretical cut-offs for high fidelity on 15 measures using 91 items derived from an existing data set. Results indicate a high FOI across all schools for the dimensions of dosage and quality. Regarding responsiveness, high FOI was found for intervention compliance at t1 and program acceptance at the follow-up. Participant engagement during the trainings was measured separately and remained below our threshold. Adherence to 10 out of 12 core components was high. After training, school staff reported sufficient theoretical knowledge and were sensitized to recognize students in trouble, but actual case evaluation skills left room for improvement. The resulting FOI Assessment System requires validation by empirical research. Multi-level statistical modelling is necessary to test the hypothesized relationships between FOI per stage and outcomes, and obtain empirical validation of the hypothesized core components. Despite the methodological weaknesses of applying the CORE cycle ex post facto, it seems to be a feasible strategy to assess dimensions of FOI.

Fidelity of implementation (FOI) refers to the “extent to which intervention delivery adheres to the protocol or program model as intended by the intervention developers” (Mowbray, Holter, Teague, & Bybee, 2003). In implementation science, FOI is widely regarded as a multidimensional construct (Dane & Schneider, 1998; Durlak & DuPre, 2008; Dusenbury, Brannigan, Falco, & Hansen, 2003; Gould, Dariotis, Greenberg, & Mendelson, 2016; Gould et al., 2014). Dane and Schneider (1998) described five major dimensions of FOI which informed the theoretical basis of the NETWASS FOI assessment system: (1) adherence, which is the extent to which core activities and processes are implemented as prescribed by the program model; (2) dosage, which reflects the amount of intervention received by the participants, e.g., the number of training sessions; (3) quality, which refers to the manner and effectiveness of program delivery, e.g., overall quality of training sessions; (4) responsiveness, which indicates the extent to which participants are engaged in the program and involved in program activities; and (5) differentiation, which refers to the empirical identification of the most critical components of the program (Dane & Schneider, 1998; Durlak & DuPre, 2008; Dusenbury et al., 2003).

Although there is currently no consensus among implementation scientists on the optimum degree of FOI that is needed to achieve program effects, the majority of studies indicates that higher FOI is associated with better outcomes and should be favored over flexibility (Borrelli et al., 2005; Durlak & DuPre, 2008; Gould et al., 2016; Fagan & Mihalic, 2003). Nevertheless, to guarantee implementation compliance and sustainability across diverse settings, stakeholders must be allowed some flexibility to adjust a program to their needs and resources (August, Bloomquist, Lee, Realmuto, & Hektner, 2006). Under complex, real-world conditions, a multi-modal program might require extensive adaptations that jeopardize program effectiveness. To balance flexibility with the need for FOI, the research literature advises program developers to: a) clearly specify core components along with requirements regarding dosage and adherence in the program manual; b) provide recommendations on how to make adaptations to the setting if necessary and indicate where flexibility is allowed without compromising the intended outcome (Blase & Fixsen, 2013; Dusenbury et al., 2003; Fixsen, Naoom, Blase, Friedman, & Wallace, 2005; Mowbray et al., 2003; Wandersman et al., 2008).

Program core components are the critical mechanisms, principles, or processes of an intervention (e.g., a certain content or teaching method) that are necessary to produce the desired outcomes. Core components thus are directly related to a program’s theory of change, which defines the mechanisms by which an intervention works, and therefore need to be implemented with high FOI to guarantee program effects.

Since intervention programs are increasingly expected to be evidence-based and specify the active ingredients, “black box” outcome studies are recognized as insufficient to establish a program’s effectiveness (Leuschner et al., 2017; Mowbray et al., 2003). While some evaluators have articulated and validated the core components of their programs (Chamberlain, 2003; Forgatch, Patterson, & Degarmo, 2005; Henggeler, Schoenwald, Liao, Letourneau, & Edwards, 2002; Webster-Stratton & Herman, 2010), few authors provide sufficient guidance about the critical features of their programs (Dane & Schneider, 1998; Durlak & DuPre, 2008; Gould et al., 2016).

The NETWorks Against School Shootings (NETWASS) Program

The NETWASS program aims to prevent severe, targeted school violence through the combination of a structured threat assessment approach based on the Virginia Student Threat Assessment Guidelines (Cornell, 2013; Cornell & Sheras, 2006) with a developmentally-informed model for early and indicated crisis prevention (Leuschner et al., 2011; 2017; Scheithauer, Leuschner, & NETWASS Research Group, 2014). Case analyses have shown that school shootings were long-term planned, targeted attacks and resulted from an individual psychosocial crisis of the perpetrators (O’Toole, 1999; Verlinden, Hersen, & Thomas, 2000; Vossekuil, Fein, Reddy, Borum, & Modzeleski, 2002; cf. Sommer, Leuschner, Fiedler, Madfis, & Scheithauer, 2020). Long before engaging in actual planning behavior, students showed warning behaviors and leaked their violent fantasies to peers or an adult (Bondü & Scheithauer, 2014; O’Toole, 1999; Newman, Fox, Roth, Mehta, & Harding, 2005; Verlinden et al., 2000). Thus, individual vulnerabilities and social strain are important indicators to be detected by school staff (Harding, Fox, & Mehta, 2002; Langman, 2009; Newman et al., 2005; Sommer, Leuschner, & Scheithauer, 2014). However, Fox and Harding (2005) found that school staff is often unaware of a student’s personal crisis because of information fragmentation and the lack of structured procedures for information exchange. A major implication from this research was the need to develop a preventive model for schools to: (1) identify crisis symptoms; (2) assess whether there was a serious threat of violence; and (3) implement case management services that would both address the student’s crisis and prevent a violent outcome.

The NETWASS prevention model consists of four steps, and follows a scientist-practitioner model: (1) In a standardized face-to-face training, all school staff is sensitized to increase awareness for students in trouble. School staff is then encouraged to report a “conspicuous” behavior to a crisis prevention appointee. (2) A crisis prevention appointee is nominated by every school after the trainings. He/she collects information about a student, and decides if more extensive case management is required. (3) If necessary, a school’s crisis prevention team (either previously established, or formed as a consequence of the NETWASS trainings) conducts a collaborative, evidence-based threat assessment, and develops an intervention plan to provide student support. (4) Cases are monitored in order to evaluate the effectiveness of the case management plan (Leuschner et al., 2011; Scheithauer et al., 2014).

By 2012, the program had been implemented in 98 schools in Germany. Evaluation data from 3,473 school staff participants were collected in a quasi-experimental comparison group design at three measurement points (pre, post, 7-month follow-up). Teachers displayed increased expertise on the topic, were more likely to identify a student in trouble, and improved their evaluation skills after program implementation (Leuschner et al., 2017; Scheithauer et al., 2014). Since the NETWASS program was designed to foster structural changes in schools, it does not consist of a series of training sessions. Moreover, the school-wide program implementation requires a collaborative effort between the NETWASS trainers and school staff that is executed in three stages. The need for standardization of program content and material differs at each stage, as described in Table 1.

Table 1

Standardization of Program Materials at Three Stages of Implementation

| Stage and Facilitators | Program Material and Methods |
| --- | --- |
| Stage 1: Trainings, facilitated by NETWASS trainers | Level of Standardization: High |
| | • Standardized duration: 2 hours (all school staff), 16 hours (crisis prevention team) |
| | • Comprehensive manual for trainers including didactical guidelines and a detailed training schedule |
| | • PowerPoint presentation with standardized content: Information on theoretical background of school shootings, research findings on risk factors and crisis indicators, introduction to preventive approaches |
| | • Introduction to the prevention model including templates and basic decision criteria for case report |
| | • Practice using decision criteria with 6 fictional case vignettes |
| | Additional content for crisis prevention team members: |
| | • Detailed introduction to all 4 steps of the prevention model including guidelines for case management and monitoring |
| | • Detailed introduction to threat assessment criteria, crisis indicators and guidelines for initiating student support measures |
| | • Simulation of a best-practice case assessment based on a case example (fictional or as suggested by the participants) |
| | • Continuous feedback from the trainers |
| | • Guided group discussion on implementation challenges and development of an intervention plan for individual schools |
| | Outcome: Sensitization of all school staff, understanding of the NETWASS prevention model, training of the crisis prevention team, intervention compliance |
| Stage 2: Implementation of structures and case identification, facilitated by all school staff | Level of Standardization: Low |
| | • Occasional telephone support by the research team |
| | • General recommendations on implementation (composition of the team, enhancing intervention compliance of colleagues) |
| | • Templates for case reports |
| | Outcome: Establishment of a crisis prevention team, nomination of a crisis prevention appointee, development of skillful case identification and reporting |
| Stage 3: Case management, facilitated by the crisis prevention teams | Level of Standardization: Moderate |
| | • Occasional telephone support by the research team |
| | • Recommendations regarding session intervals, duration, and collaboration with professional network on-site and outside the school |
| | • Structured case management protocol including threat assessment criteria |
| | • Templates for case management |
| | Outcome: Development of interventions for students, effective threat assessment to avert violent outcomes, sustainable cooperation with a professional network |

The CORE Process to Study FOI in an Ex-Post-Facto-Design

Although the importance of assessing FOI has been vigorously emphasized in the literature, most evaluation papers do not report FOI measures (Slaughter, Hill, & Snelgrove-Clarke, 2015), or only include a primary set of data related to selected dimensions of FOI, e.g., dosage or quality (Gould et al., 2014). A systematic review of programs implementing mindfulness techniques in schools conducted by Gould et al. (2016) revealed that only 48 out of 312 studies reported FOI measures, and fewer than 20% measured aspects of FOI beyond dosage and participant responsiveness. Fewer than 10% of the identified studies outlined program core components or articulated a theory of change.

The CORE process (Gould et al., 2014) is a cycle to conceptualize and measure core components based on available program material (e.g., structured manual, program theory and goals). The primary goal of the procedure is to verify relations between the adherence of hypothesized core components and outcomes, thereby enhancing program theory by accurately indicating which program components are related to program effects. The process consists of four sequential steps, which are repeated in a cycle until the core components are empirically validated: (1) Conceptualize core components; (2) Operationalize and measure; (3) Run analyses and review findings; (4) Enhance and refine the program theory.

With increased recognition of FOI in the implementation science literature, and findings that many seemingly effective programs do not produce good results when implemented on a routine basis or on a larger scale (Dariotis, Bumbarger, Duncan, & Greenberg, 2008; Domitrovich et al., 2008; Dusenbury, Brannigan, Hansen, Walsh, & Falco, 2005; Gottfredson & Gottfredson, 2002; Payne, Gottfredson, & Gottfredson, 2006), a question emerges: Can a framework, such as the CORE process, be applied to assess FOI ex post facto, i.e., after a program is implemented and on-site monitoring is no longer feasible? Whereas an ex-post-facto assessment of FOI should not differ largely from a traditional a priori strategy in the steps required for conceptualization and empirical validation, the operationalization procedure will be largely different. However, if feasible, such an approach would allow program developers to re-evaluate effectiveness results and generate new hypotheses about effective mechanisms before disseminating or upscaling an innovation, and ultimately refine the program model.

The goal of this paper is to discuss whether the CORE cycle as suggested by Gould et al. (2014) can be used for an ex-post-facto assessment of FOI. While we did include several items to obtain information on process evaluation in our questionnaires, we did not explicitly aim to assess FOI at the beginning of our evaluation study. First, we will test whether it is feasible to identify hypothesized core components of the NETWASS intervention based on program material. We will then investigate whether we can create measures from our available data set, based on the questionnaires administered to school staff during the evaluation period. Consequently, this paper will present a comprehensive and detailed outline of all measures used and whether they were appropriate to assess FOI ex post facto.

Method

We followed the four-step procedure as suggested by Gould et al. (2014) to identify core components of the NETWASS program ex post facto, and made adaptations which were necessary to incorporate specific design features, such as the three measurement points representing different stages of implementation. In detail, our procedure was as follows: (1) Review all available material regarding information on core components. (2) Make a list of conceptualized core components and the formal program theory. (3) Review available data for relevant scales or items. (4) Develop measures for each stage of implementation. (5) Establish theoretical or empirical benchmarks for high FOI. (6) Aggregate scale scores across the schools and review findings on degree of FOI.

Materials Used to Conceptualize Core Components

We included all available sources of information to obtain a multi-method data pool and a broad perspective on program implementation:

Information from the manual. As a starting point, we examined the structured program manual for schools. The manual specified all program goals, activities and the sequence in which they were to be delivered. Furthermore, we obtained information from the structured trainer manual which provided additional information about training methodology, a detailed time-table, and learning goals.

Feedback from the trainers. To obtain a meta-perspective of the facilitating trainers, who were either members of our research team or multipliers with a background in school psychology or legal crime prevention, we engaged in regular exchange sessions by telephone to learn about their experiences with the training manual.

Discussions in our research team and conceptual papers. We conducted a series of documented discussions within our research team about program goals, assumed mechanisms, and the rationale behind the inclusion of specific activities and practices. We also reviewed a paper written by the program developers when applying for program funding. Following this, we presented and discussed our hypothesized core components with colleagues from Freie Universität Berlin who are engaged in other prevention projects, but were not involved in the NETWASS study.

Evaluation results and findings from follow-up interviews with schools. We received input from three schools that were part of a pilot trial and obtained qualitative data on implementation success and challenges from telephone-supported process monitoring from all schools. Additionally, we conducted guideline-based follow-up interviews in which the crisis prevention teams reflected on their experiences with our case management procedure. Finally, we drew on quantitative results from the effectiveness study to contextualize and interpret the findings from our FOI assessment system.

Stage-Specific Conceptualization of Core Components and Program Theory

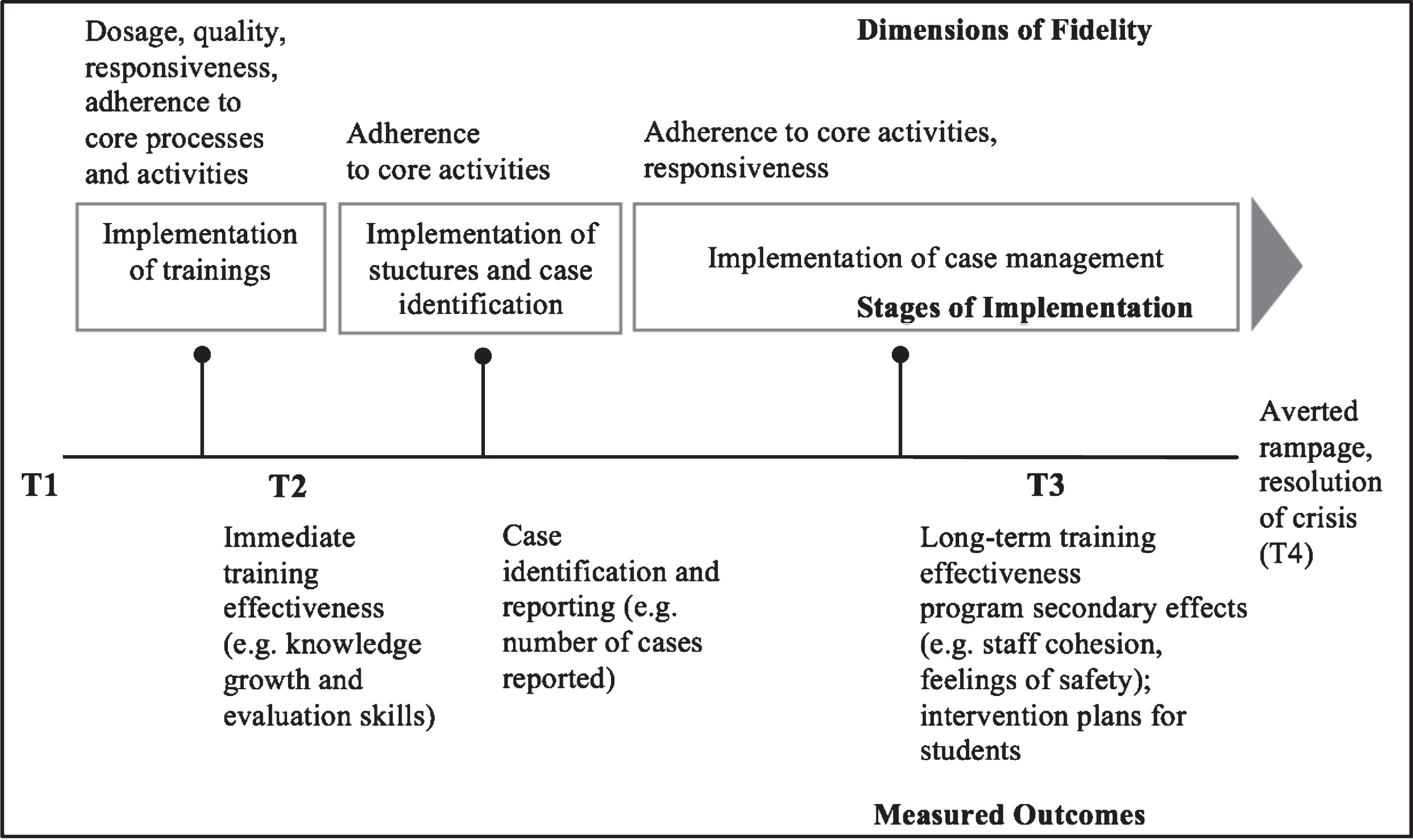

Since there is a potential for non-adherence to the program manual at any given stage of implementation, we conceptualized NETWASS core components for each of the three stages. Depending on the degree of standardization, a failure to implement the core processes or activities relevant for a specific stage, or altering the dosage or quality of the initial training sessions, could reduce program effectiveness. This also implies that FOI should be measured separately for each stage. Figure 1 illustrates stages of implementation and FOI dimensions.

Figure 1.

Evaluation design with stages of implementation and FOI dimensions.

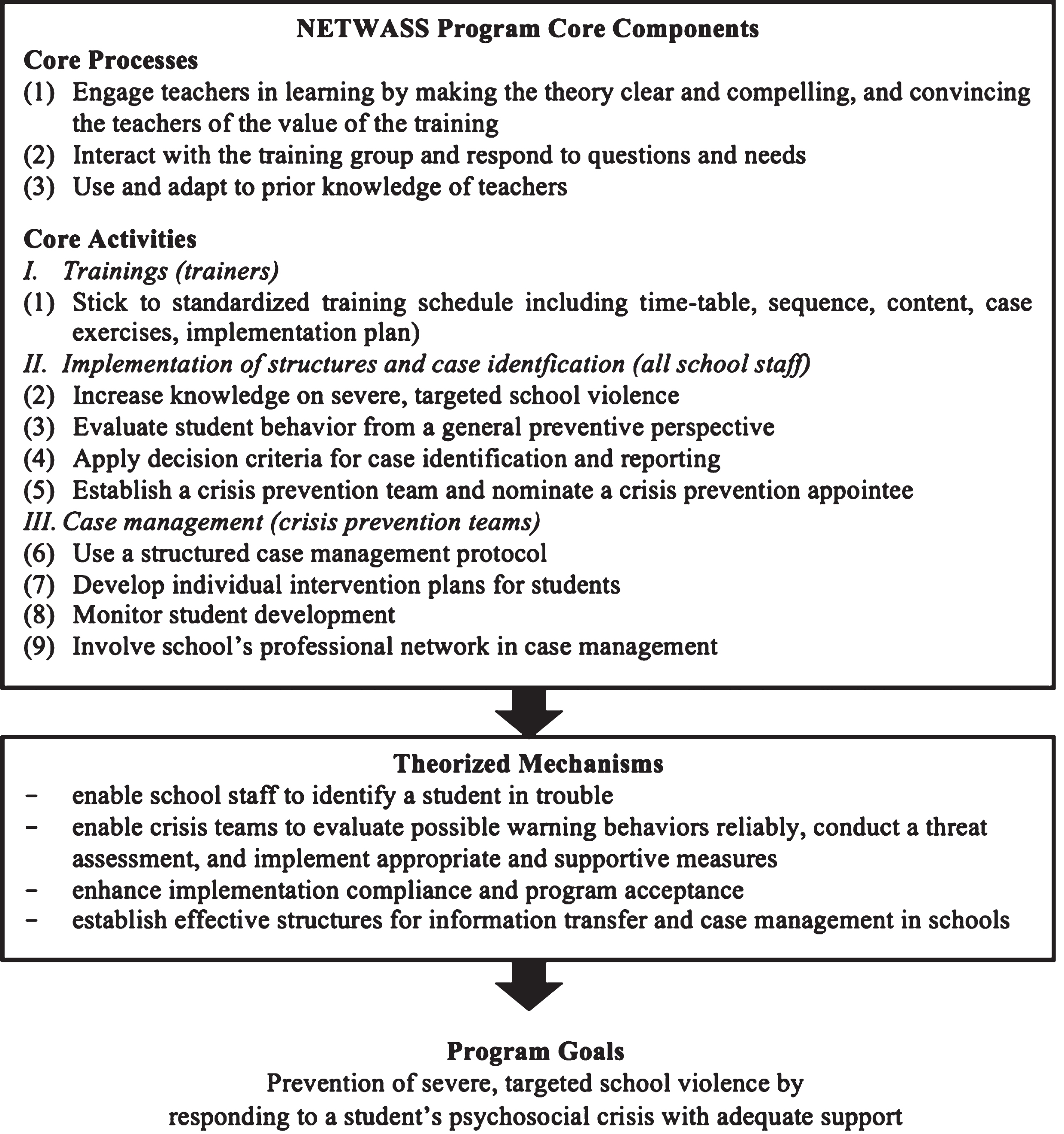

After an in-depth review of the NETWASS materials, and a series of discussions within our research team on the underlying theory of change, we defined our core components more precisely and developed a more refined logic model that decomposed the program into its hypothesized active ingredients, as presented in Figure 2. Following Gould et al. (2014), we classified program core components into two types: program core processes and program core activities. Program core processes refer to the manner in which the implementers engage with the teachers and the way in which the program material is taught to school personnel (e.g., training style, interaction behavior). While processes may be difficult to measure, they are critical to the program’s success because they influence the acceptance and overall compliance with the intervention.

Figure 2.

NETWASS program theory and core components.

Core activities are the practices as recommended in the curriculum. They are implemented and measured at all three stages of implementation: Trainings, implementation of structures and case identification, and case management. Since all core activities that should be implemented during the trainings are standardized by the material, we conceptualized only one core component for the first stage. The core activities for the stage of structural implementation and case identification were intended to cover all preconditions that are necessary for the school to recognize and exchange information when a student is experiencing a crisis. Core activities implemented at the third stage ensure that crisis prevention teams recognize early crisis indicators, conduct a structured case management that includes an evidence-based threat assessment, and develop student support measures based on a student’s specific risk and protection factors.

Measures to Assess FOI of Trainings

Our FOI assessment system, including a list of all data sources, is outlined in Table 2. The fifth dimension “differentiation” is incomplete, because it requires empirical evidence of the relations between specific components and program outcomes. Dosage of the initial trainings was determined by the training schedule and no additional measure was needed. Training participation was estimated by the pre-training questionnaire response rate. In order to protect participant confidentiality, there were no attendance lists, so we calculated a training participation quotient by dividing the number of pre-training questionnaires by the total number of teachers per school. In all cases, a pre-training questionnaire was followed by training participation. However, in some cases, a teacher did not participate in the study yet attended the training afterwards. Consequently, this assessment can underestimate the number of participants.
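The training participation quotient described above can be illustrated with a minimal sketch. The function name and the example counts are hypothetical and serve only to show the arithmetic; they are not taken from the study data.

```python
def participation_quotient(n_pre_questionnaires: int, n_staff: int) -> float:
    """Share of a school's staff who returned a pre-training questionnaire.

    Used here as a proxy for training participation, since no attendance
    lists were kept. Staff who attended without completing a questionnaire
    are not counted, so the value can underestimate true participation.
    """
    if n_staff <= 0:
        raise ValueError("n_staff must be a positive number")
    return n_pre_questionnaires / n_staff


# Hypothetical school: 30 questionnaires returned by a staff of 40.
quotient = participation_quotient(30, 40)
print(f"{quotient:.2f}")  # 0.75
```

Because the numerator counts questionnaires rather than attendees, the quotient is best read as a lower bound on participation.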

Table 2

Measures to Assess FOI Dimensions and Core Components

| FOI Dimension | Assessment | Data Source |
| --- | --- | --- |
| Dosage | Participation rate | Evaluators (t1) |
| | Dosage according to schedule | |
| Quality | Training evaluation form | All school staff (T) |
| Responsiveness | Participant engagement scale | All school staff (T) |
| | Intervention compliance scale | All school staff (t2) |
| | Program usefulness scale | Crisis prevention teams (t3) |
| | Program acceptance scale | All school staff (t3) |
| Adherence (Processes) | | |
| (1) Engage teachers in learning | Training delivery scale | All school staff (T) |
| (2) Interact with the training group and respond to questions | | |
| (3) Use and adapt to prior knowledge | | |
| Adherence (Activities) | | |
| Stage 1: Trainings | | |
| (1) Stick to standardized training schedule | Trainer adherence scale, Trainer adaptations scale | Trainers (t3) |
| Stage 2: Implementation of Structures and Case Identification | | |
| (2) Increase knowledge on severe, targeted school violence | Knowledge test | All school staff (t2) |
| (3) Evaluate student behavior from a general preventive perspective | Attitudes towards prevention scale, Responses to student problems scale | All school staff (t2) |
| (4) Apply decision criteria for case identification and reporting | Evaluation skills scale | All school staff (t2) |
| (5) Establish a crisis prevention team and nominate a crisis prevention appointee | Observational rating | Evaluators (t3) |
| Stage 3: Case management | | |
| (6) Use a structured case management protocol | Adherence to protocol scale | Crisis prevention teams (t3) |
| (7) Develop individual intervention plans for students | | |
| (8) Monitor student development | | |
| (9) Involve school’s professional network in case management | | |

Note: t1 = pre-training, t2 = post-training (2 weeks after the trainings), t3 = follow-up (7 months after the trainings), T = end of trainings on the same day.

Trainer adherence scale. To obtain data on trainer adherence to core activities specified in the training manual, we had to rely on retrospective self-report from the trainers. Every trainer completed a self-report questionnaire at the end of the implementation period (seven-month follow-up) and assessed his or her adherence across all training sessions (ranging from 5–10). Instructors rated the extent to which they stuck to the training schedule or made adaptations. Five items of the Trainer Adherence Scale (Cronbach’s alpha = .93) covered adherence to the time-table, content, conducting case study exercises, and preparing an implementation plan with the training groups, e.g., “When delivering the training sessions, I usually discussed the topics as scheduled in the manual.”
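For readers less familiar with the internal consistency coefficient reported for this and the following scales, the sketch below computes Cronbach’s alpha from its standard formula. It is an illustration only: the function name and the toy ratings are assumptions, and the software actually used for the analyses is not specified here.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item ratings.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    items = list(zip(*responses))                      # one tuple per item
    item_variance_sum = sum(variance(col) for col in items)
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Made-up ratings from three respondents on a two-item scale.
toy_ratings = [[1, 2], [2, 1], [3, 3]]
print(round(cronbach_alpha(toy_ratings), 2))  # 0.67
```

Values closer to 1 indicate that the items of a scale vary together across respondents.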

Trainer adaptations scale. Additionally, four more items were rated on the Trainer Adaptations Scale (Cronbach’s alpha = .70), indicating adaptations made by the trainer, e.g., “When delivering the training sessions, I occasionally provided additional content or discussed issues that were not included in the manual.”

Training delivery scale. Adherence to core processes during the trainings was assessed by participants (i.e., all school staff) at the end of the training sessions. The Training Delivery Scale (Cronbach’s alpha = .90) included five items on all three core processes, e.g., “The trainer communicated the course content clearly and effectively to the participants.”

Training evaluation form. Participants (all school staff) filled out a four-item Training Evaluation Form (Cronbach’s alpha = .95) to assess the quality of trainings. Items covered overall training quality, benefit, participant interest level, and usefulness of the trainings, e.g., “Overall, how would you rate the training class in terms of usefulness?”

Participant engagement scale. To evaluate responsiveness to the trainings, all school staff answered the Participant Engagement Scale (5 items, Cronbach’s alpha = .92) at the end of the trainings, and marked their interest level in the topic, as promoted by the trainings, their motivation to use the new knowledge in day-to-day work, and the extent to which trainers were able to communicate relevance of the topic for an individual teacher’s work, e.g., “I feel encouraged to incorporate the newly learned skills into my working routine.”

Intervention compliance scale. In addition, a five-item Intervention Compliance Scale (Cronbach’s alpha = .75) was rated by all school staff members two weeks after the trainings. This scale had items concerning their attitudes towards the program and readiness to collaborate with colleagues, as well as concerns about increased work load, e.g., “I am willing to take on additional tasks and extra work to implement the program at our school.” We also asked crisis prevention teams to report their attitudes towards the program, and perceptions about its usefulness in daily work at the seven-month follow-up.

Measures to Assess Implementation of Structures and Case Identification

A set of core activities was supposed to be implemented by school staff on a school-wide basis in stage two (following the trainings). To assess schools’ adherence to manual-based recommendations on structural changes, research staff completed an observational rating. Based on process monitoring (mainly by telephone interviews), all research staff reported on each school’s implementation success, specifically whether a school had established a crisis prevention team and nominated a crisis prevention appointee.

Three specific instruments were created to evaluate the remaining core activities for stage two. Operationalization of these core activities was most difficult because they were not concrete structural changes or well-defined behaviors by a specific person. Additionally, the context and time-span of these core activities was less well-defined, since stage two started directly after training and lasted until the final follow-up survey. We also aimed to differentiate between a core activity as defined by the program manual (e.g., apply decision criteria for reporting cases) and an outcome (e.g., number of cases reported), with the latter being an effect of a properly implemented core activity. However, we had to put a major focus on the stage two core activities because they were an immediate consequence of training core components and outcomes. What is more, their full implementation was essential for implementing stage three core activities in case management. To take a step forward, we outlined the specific skills and cognitions of trained school staff that would reflect most accurately whether a core activity had been implemented properly.

Knowledge test. To assess the implementation of the core activity concerned with cognitive expertise, we evaluated school staff’s knowledge on the topic two weeks after training. Every teacher completed an 11-item knowledge test on the topic of severe targeted school violence, e.g., school staff should disagree with the following statement: “Checklists are the best way to identify potential perpetrators.” A total score was calculated from the number of correct answers. To determine whether a school staff member had made an attitude shift towards a more general preventive perspective, we administered two scales:

Attitudes towards prevention scale. School staff first indicated to what extent they believed certain school safety measures were effective and helpful. Three items (Cronbach’s alpha = .64) covered approaches specific to the NETWASS prevention model, such as information on risk factors and warning behavior, involving school psychologists or school social workers to ensure school safety, and providing individual student support and case monitoring.

Responses to student problems scale. Teachers rated their general tendency to respond to student problems on a seven-item scale (Cronbach’s alpha = .77). Items included behavioral reactions that either increased information exchange in the school (e.g., sharing concerns with a colleague, informing the homeroom teacher or school principal), or offered direct student support (e.g., talking to the student or consulting a school psychologist). According to the NETWASS crisis prevention model, these teacher behaviors are assumed to be helpful in preventing an individual crisis and in promoting an open and trustful school climate.

Evaluation skills scale. Because direct monitoring of how a teacher applied the NETWASS criteria for case identification and reporting was not feasible, we obtained data about their decision-making with case vignettes. All school staff evaluated four case examples two weeks after the training. These case scenarios represented student risk factor patterns and warning behaviors. Four items per case covered the NETWASS decision criteria for reporting a case (one item per criterion). An evaluation skills score was computed by averaging the 16 items (McDonald’s ωt = 0.83). The ability of a single teacher to evaluate a case is an essential core activity at stage two of program implementation because it provides the necessary condition for triggering advanced case management.
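The two scoring rules used for individual staff members in this section (number of correct answers on the 11-item knowledge test, and the mean of the 16 vignette items) can be sketched as follows. The answer key, the agree/disagree coding, and the rating values are hypothetical placeholders; the actual item content and response format are not reproduced here.

```python
from statistics import mean

# Hypothetical answer key for the 11 knowledge items (True = "agree" is correct).
ANSWER_KEY = [False, True, True, False, True, False, True, True, False, True, False]


def knowledge_score(answers, answer_key=ANSWER_KEY):
    """Total score: number of knowledge-test items answered correctly."""
    if len(answers) != len(answer_key):
        raise ValueError("answers and answer key must have the same length")
    return sum(given == correct for given, correct in zip(answers, answer_key))


def evaluation_skills_score(vignette_ratings):
    """Mean of all 16 ratings: 4 case vignettes x 4 decision criteria."""
    flat = [rating for vignette in vignette_ratings for rating in vignette]
    if len(flat) != 16:
        raise ValueError("expected 4 vignettes with 4 ratings each")
    return mean(flat)


# A made-up respondent who answers two knowledge items incorrectly.
answers = list(ANSWER_KEY)
answers[0] = not answers[0]
answers[5] = not answers[5]
print(knowledge_score(answers))  # 9

# Made-up ratings on an assumed numeric response scale.
print(evaluation_skills_score([[4, 4, 4, 4]] * 4))  # 4
```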

Measures to Assess FOI of Case Management

A major challenge was to assess how the crisis prevention teams implemented core activities for stage three. It was not feasible to have observers attend or record case management sessions because cases arise sporadically and require immediate action. Furthermore, school teams did not want researchers to observe confidential discussions about a student’s family background, medical issues, or other private concerns. Therefore, we relied on written documents from these sessions.

Adherence to protocol scale. Additionally, we created a six-item self-report scale that covered the main standards that should be followed when discussing student cases. All crisis prevention team members answered the adherence to protocol scale (Cronbach’s alpha = .87) at the seven-month follow-up. Items included ratings on the extent to which information about a student was exchanged and documented in a structured procedure (“Our crisis prevention team adhered to the case management protocol when collecting and evaluating information about a student in trouble.”), whether the team followed guidelines on how to provide adequate support for a student, and whether the school’s professional network was involved in case management.

Program acceptance scale. To operationalize school-wide responsiveness to the intervention, we created a 9-item Program Acceptance Scale (Cronbach’s alpha = .84) from items administered to all school staff at the 7-month follow-up. All school staff rated their level of satisfaction with the management of cases conducted by the school’s crisis prevention team, perceived accessibility and reliability of the crisis prevention appointee, the effect of the implemented structures on institutional information exchange, as well as overall program acceptance.

Program usefulness scale. The 8-item Program Usefulness Scale (Cronbach’s alpha = .81) provided an additional long-term perspective on structural implementation and responsiveness. It reflected the experiences of crisis prevention team members with structured case management according to the NETWASS principles, e.g., “The crisis prevention team is helpful in managing student cases and violence-related problem behavior.”

Table 2 gives an overview of the instruments and measures used.
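
For readers less familiar with the reliability coefficient reported for these scales, Cronbach's alpha can be computed from respondent-by-item ratings as sketched below. The function name and the example data are hypothetical, and the sketch assumes complete data (no missing responses). It uses the standard formula alpha = k/(k-1) * (1 - sum of item variances / variance of total scores).

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a respondents-by-items table.

    `rows` holds one list of item ratings per respondent; all lists
    must have the same length (complete data is assumed).
    """
    if len(rows) < 2:
        raise ValueError("need at least two respondents")
    k = len(rows[0])
    if k < 2 or any(len(row) != k for row in rows):
        raise ValueError("need at least two items and equal row lengths")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of the respondents' total scores.
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical ratings: 4 respondents x 3 items (illustrative only).
example = [
    [3, 4, 3],
    [2, 2, 3],
    [4, 4, 4],
    [1, 2, 2],
]
alpha = cronbach_alpha(example)
```

McDonald's omega, which is reported for the evaluation skills scale, requires a factor model and is not covered by this sketch.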

Establishing FOI Benchmarks and Descriptive Analysis

We defined cut-offs for all instruments that represented the minimum standards we considered necessary for program effectiveness. Specifically, we defined numerical thresholds for high FOI based either on an empirical cut-off (mostly the mean value) or on theoretical standards agreed on by the team, e.g., a minimum percentage of school staff that should have participated in the NETWASS trainings to achieve school-wide sensitization for the topic, or a minimum degree of responsiveness and compliance we considered to be a necessary condition for sustainable program implementation. In seven cases, the theoretically defined threshold was the same as the calculated statistical mean value of the respective aggregated scale score. To assess the degree of FOI within each school, we first calculated scale scores for individual teachers and then aggregated individual scores to the school level.
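
The two-step aggregation described above (individual scale scores first, then school-level means compared with a cut-off) can be sketched as follows. The function name, the record layout, and the example data are hypothetical. The sketch also assumes that a school counts as high FOI when its mean is at or above the cut-off; the exact comparison rule is not specified in the text.

```python
from collections import defaultdict
from statistics import mean


def school_level_foi(records, cutoff):
    """Aggregate teacher ratings to school means and flag high FOI.

    `records` is an iterable of (school_id, item_ratings) pairs, one per
    teacher. "High FOI" is assumed to mean school mean >= cutoff.
    """
    scores_by_school = defaultdict(list)
    for school_id, items in records:
        # Step 1: scale score for the individual teacher.
        scores_by_school[school_id].append(mean(items))
    result = {}
    for school_id, scores in scores_by_school.items():
        # Step 2: aggregate individual scores to the school level.
        school_mean = mean(scores)
        result[school_id] = {"mean": school_mean, "high_foi": school_mean >= cutoff}
    return result


# Hypothetical data: two schools with two teachers each, 3-item scale.
records = [
    ("A", [3, 4, 3]),
    ("A", [4, 4, 4]),
    ("B", [2, 3, 2]),
    ("B", [3, 3, 2]),
]
foi = school_level_foi(records, cutoff=3)
```

Averaging teacher scores gives every respondent equal weight within a school; weighting schemes or multilevel models would be alternatives when response rates differ strongly between schools.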

Results

Results on the Degree of FOI at all Stages of Implementation

We collected FOI data from 15 measures consisting of 91 items. Our measurement system included an observational rating, a calculated training-participation quotient, a knowledge test, one rating scale measuring evaluation skills based on case vignettes, and eleven rating scales reporting general attitudes, as well as attitudes towards the program, and self-reported past behavior. Table 3 displays the results of FOI across the schools, as well as the cut-off values for high FOI.

Table 3

Overview of FOI Measures, Theoretical Cut-Offs and Descriptives

| FOI Dimension | Data Source | Measure | Criteria for High FOI | Average across Schools | Range across Schools |
| --- | --- | --- | --- | --- | --- |
| Dosage | Response Rate | Percentage of school staff who completed t1 | 60% | N = 73 | 10.89–100% |
| | As scheduled | Number of lessons (45 min. each) delivered according to implementation condition | - | - | 4–16 |
| Quality | Training Evaluation Form | Mean of 4 items: 1 = very poor to 7 = exceptional (Cronbach’s alpha = .94) | 5 = very good | 5.32 | 3.77–7.00 |
| Responsiveness | Participant Engagement Scale | Mean of 5 items: 1 = not at all to 5 = extremely (Cronbach’s alpha = .92) | 4 = very engaged | 3.72 | 2.62–5.00 |
| | Intervention Compliance Scale | Mean of 6 items: 1 = low to 4 = high (Cronbach’s alpha = .75) | 3 = moderate | 3.19 | 2.73–4.00 |
| | Program Usefulness Scale | Mean of 8 items: 1 = not useful to 4 = very useful (Cronbach’s alpha = .81) | 3 = useful | 3.11 | 2.25–3.84 |
| | Program Acceptance Scale | Mean of 9 items: 1 = very low to 4 = very high (Cronbach’s alpha = .84) | 3 = high | 3.34 | 2.68–3.94 |
| Adherence: Core Processes | Training Delivery Scale | Mean of 5 items: 1 = not at all effective to 5 = very effective (Cronbach’s alpha = .90) | 4 = nearly all processes delivered effectively | 4.02 | 2.97–5.00 |
| Adherence: Core Activities | Trainer Adherence Scale | Mean of 5 items: 1 = low adherence to 5 = very high adherence (Cronbach’s alpha = .93) | 4 = high adherence | 3.11 | 1.80–4.80 |
| | Trainer Adaptations Scale | Mean of 4 items: 1 = never to 5 = always (Cronbach’s alpha = .70) | < 2 = rarely made adaptations | 1.85 | 1.00–3.50 |
| Knowledge on STSV | Knowledge Test Scale | Mean of 11 items: 0 = poor to 1 = excellent | Mean = .65 | N = 86 | .55–1.00 |
| Preventive Perspective | Attitudes towards Prevention | Mean of 3 items: 1 = not at all effective to 4 = highly effective (Cronbach’s alpha = .64) | 3 = moderately effective | 3.42 | 3.12–4.00 |
| | Response to Student Problems Scale | Mean of 7 items: 1 = never to 4 = always (Cronbach’s alpha = .77) | 3 = often | 2.84 | 2.33–3.67 |
| Implementation of Structures | Observational Rating | 0 = not implemented, 1 = implemented | 1 = implemented | N = 88 | - |
| Case Identification | Evaluation Skills Scale | Mean of 16 items based on four case scenarios: 1 = very poor to 5 = excellent (McDonald’s ωt = .83) | 4 = very good | 3.58 | 3.05–4.25 |
| Structured Case Management Protocol | Adherence to Protocol Scale | Mean of 6 items: 1 = not at all true to 4 = completely true (Cronbach’s alpha = .87) | 3 = adhered to protocol most of the time | 2.91 | 1.50–4.00 |

In 73 schools, at least 60% of all school staff participated in the trainings. Sixty-three schools met the criterion of 75% staff participation. Fourteen schools showed a participation rate below 10%. School staff’s mean rating for training quality was 5.32. Self-reported participant engagement during the trainings was rated 3.72, and intervention compliance after the trainings was rated 3.19 by school staff. Mean ratings for program usefulness and program acceptance were 3.11 and 3.34, respectively. The trainers’ self-reported mean ratings were 4.02 for adherence to core processes and 3.11 for adherence to core activities. An above-average knowledge level (mean > .65) was found for 86 schools, and 88 schools implemented the recommended structures with high FOI (i.e., established a crisis prevention team and nominated a crisis prevention appointee).

School staff’s mean rating for the effectiveness of a preventive perspective targeting student support was 3.42, and their mean rating for the general tendency to provide student support and promote a trustful school climate was 2.84. School staff’s mean adherence to the recommended decision criteria for case identification and reporting was 3.58. The crisis prevention team members’ self-reported mean rating for adherence to the case management protocol was 2.91.
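
The dosage counts reported in this paragraph rest on a per-school participation quotient compared with a percentage criterion. A minimal sketch of that calculation follows; the function names and the staff figures are hypothetical and do not reproduce the study data.

```python
def participation_rate(n_trained, n_staff):
    """Percentage of a school's staff who took part in the training."""
    if n_staff <= 0:
        raise ValueError("n_staff must be positive")
    return 100.0 * n_trained / n_staff


def schools_meeting(rates, threshold):
    """Count schools whose participation rate is at or above `threshold` (%)."""
    return sum(1 for rate in rates.values() if rate >= threshold)


# Hypothetical staff counts: school -> (trained staff, total staff).
staff = {"A": (30, 40), "B": (12, 50), "C": (45, 60), "D": (24, 40)}
rates = {school: participation_rate(t, n) for school, (t, n) in staff.items()}

n_60 = schools_meeting(rates, 60)  # schools reaching the 60% criterion
n_75 = schools_meeting(rates, 75)  # schools reaching the stricter 75% criterion
```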

Discussion

The goal of this paper was to test whether the CORE process (Gould et al., 2014) can be used as an ex post facto strategy to assess FOI of the NETWASS preventive intervention. This included three major steps: 1) generating a theoretical conceptualization of NETWASS core components based on available program material; 2) operationalizing the identified core components by creating measures from an available data set; and 3) establishing cut-offs or threshold values that indicate a high degree of FOI.

Based on our thresholds, trainings were implemented with high FOI for the dimensions of quality, responsiveness, and trainer adherence to core processes. This indicates that the trainers managed to interact with the training group and adapted their teaching to the participants’ skill level effectively, and that intervention compliance was generally high after the trainings. Although we know from our effectiveness study that school-wide sensitization significantly increased after the trainings (Leuschner et al., 2017), participant engagement remained slightly below our threshold for high FOI (e.g., “I feel encouraged to incorporate the newly learned skills into my working routine.”). Our interview data reveal that this predominantly applied to school staff as opposed to crisis prevention teams. Perhaps school staff have less experience with crisis situations than crisis prevention team members, or do not regard implementation of the program as central to their role as teachers. Additionally, they only received a basic two-hour training. While our effectiveness results indicate that trainings altered school staff’s typical responses to student problem behavior and enhanced their evaluation skills (Leuschner et al., 2017), their skill level after the trainings remained slightly below our theoretical threshold for what we consider sufficient to fully adhere to our decision criteria for case identification and reporting. Further examination will show whether our threshold was too strict given participants’ baseline scores, or whether we can improve trainings to enhance effects.

Adherence to case management core activities was measured by crisis prevention team members’ self-reports, with values being marginally below the threshold for high FOI. This indicates that crisis prevention team members “adhered to the case management protocol most of the time”. Case reports provided by the schools for further analyses will reveal which components were not implemented. According to our qualitative interviews conducted at the follow-up, schools did not consistently monitor student behavior after an initial case management session and often lacked continuous support from their professional network.

Limitations and Lessons Learned

In general, adapting the CORE process as an ex post facto strategy was a viable way to create our FOI assessment system. However, an ex post facto study is a relatively weak design that cannot claim the power of an experimental design. The process of defining a program component as a core activity or process was influenced not only by theoretical assumptions but also by practical experiences in the school setting. In addition, we will have to address the methodological issues related to the operationalization of core components across all stages of implementation. Although the majority of crisis prevention teams reported spending nearly equal amounts of time on a single case management session (i.e., 45–60 minutes), the total number of sessions ranged between one and 20 per school. This means that some schools identified more students in crisis than other schools. First and foremost, this implies that the schools generally adhered to manual recommendations (“Conduct a case management whenever a new case comes up”; “Repeat case management when a case requires re-evaluation”). However, when drawing conclusions from our FOI assessment, we have to keep in mind that schools may differ in their standards for deciding when a student qualifies for intervention. In some schools, staff may be more willing to contact the team for help than in others.

The scales of our FOI assessment system show largely good to excellent internal consistency (Cronbach’s alpha between .64 and .94; see Table 3) and appear to be reliable measures to operationalize the NETWASS core components. However, we faced issues linked to the objectivity of some of our FOI data. Since we regrettably were not allowed to videotape trainings or case management sessions, data on trainer and crisis prevention team adherence to core components are based on retrospective self-reports. Reflecting on our strategy to measure core activities at stage two, we have to examine whether the chosen knowledge and evaluation skills scores validly represent a teacher’s internal decision-making process on reporting a case. An optimal but impractical method would have been to place an observer in every school who precisely records the number of cases showing conspicuous warning behavior (i.e., cases that should have been identified by school staff) and to conduct in-depth interviews with all teachers afterwards about their decision criteria and the knowledge they used. A more practical option is to examine inter-rater reliability across teachers.

Surprisingly, trainers’ self-reports revealed only medium adherence to the relevant core activities during the trainings (i.e., sequence, content, and timetable). Since trainers’ ratings of the control item indicated that trainers “rarely made adaptations” to the standardized training schedule, we infer that trainers were overly critical of their own adherence.
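
One way to examine inter-rater reliability across teachers is an intraclass correlation over the vignette ratings. The text does not name a specific coefficient, so the one-way random-effects ICC(1,1) used below is an assumption made for illustration, as are the function name and the example data. Rows are rated targets (e.g., vignette items) and columns are teachers; every target is assumed to be rated by the same number of teachers.

```python
from statistics import mean


def icc_oneway(ratings):
    """One-way random-effects ICC(1,1).

    `ratings[i][j]` is the rating of target i by rater j. Requires at
    least two targets and two raters, with complete data.
    """
    n = len(ratings)
    k = len(ratings[0])
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need >= 2 targets, >= 2 raters, equal row lengths")
    grand = mean(x for row in ratings for x in row)
    row_means = [mean(row) for row in ratings]
    # Between-target and within-target mean squares from a one-way ANOVA.
    ms_between = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, row_means) for x in row
    ) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical ratings: 4 vignette items rated by 3 teachers.
example = [
    [4, 4, 5],
    [2, 3, 2],
    [5, 5, 4],
    [3, 3, 3],
]
icc = icc_oneway(example)
```

Values near 1 indicate that teachers rate the same case material similarly; with many teachers per school, a two-way model or a multilevel approach may be more appropriate than this simple sketch.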

Conclusion and Outlook

To summarize, although it required a careful and lengthy decision-making process, we found it feasible to develop a conceptual framework of NETWASS core components based on our assumptions about critical mechanisms and processes and on our program material. What is more, by applying an ex post facto strategy, we were able to gain a deeper understanding of the overall extent of adaptations the schools made to our program during the implementation period. The definition of the NETWASS core components was therefore driven by the primary goal of refining the program theory as described in the manual, as well as by a practical need. The feedback we received from the participating schools supported the general recommendations found in the implementation science literature: Practitioners must be equipped with clear guidelines on which components of a program need to be implemented with strict adherence to the manual, and when flexibility is permissible.

Despite the methodological shortcomings, which may cause validity problems and reduce the power of later analyses, the paper demonstrated the development of a multi-informant, multi-dimensional, and stage-sensitive FOI assessment system from an available data set. In defining the NETWASS program core components and reporting FOI data, albeit ex post facto, we follow the recommendations of implementation scientists. The knowledge gained from conceptualizing the NETWASS core components will be useful for dissemination purposes and forms the basis for the next step of validating our core components statistically and modelling relations with outcomes.

Bio Sketches

Nora Fiedler is a psychologist working as a research associate at Freie Universität Berlin, Unit “Developmental Science and Applied Developmental Psychology”. Her research interests are violence research, crisis prevention in schools, and early threat assessment of violence.

Vincenz Leuschner is professor of Criminology and Sociology at the Berlin School of Economics and Law, Department Police and Security Management. From 2009 to 2016, he was a research assistant at Freie Universität Berlin. His research interests are violence research, sociology of social problems, victimology, developmental criminology, crime prevention and security research.

Friederike Sommer is a psychologist working as a research associate at the Berlin School of Economics and Law, Department Police and Security Management. She was part of the NETWASS research team, working from 2010 to 2017 at Freie Universität Berlin. Her research interests are violence research, criminology, social and emotional dynamics within developmental processes and clinical psychology.

Dewey Cornell holds the Virgil Ward Chair in Education in the School of Education and Human Development at the University of Virginia in the United States of America. His primary research interests include school safety and climate assessment, youth violence prevention, and the development of school threat assessment.

Herbert Scheithauer is Professor for Developmental and Clinical Psychology at Freie Universität Berlin, Germany, and Head of the Unit “Developmental Science and Applied Developmental Psychology”. His research interests are bullying, cyberbullying, and the development and evaluation of preventive interventions.

Acknowledgments

The NETWASS Project was funded by the Federal Ministry of Education and Research (BMBF), Germany (13N10689). We thank our cooperating partners and participating schools for taking part in our trainings and evaluation study.

References

1. August, G. J., Bloomquist, M. L., Lee, S. S., Realmuto, G. M., & Hektner, J. M. (2006). Can evidence-based prevention programs be sustained in community practice settings? The Early Risers’ advanced-stage effectiveness trial. Prevention Science, 7, 151–165. doi: 10.1007/s11121-005-0024-z

2. Blase, K., & Fixsen, D. (2013). Core intervention components: Identifying and operationalizing what makes programs work. Washington: US Department of Health and Human Services.

3. Bondü, R., & Scheithauer, H. (2014). Leaking and death-threats by students: A study in German schools. School Psychology International, 35, 592–608. doi: 10.1177/0143034314552346

4. Borrelli, B., Sepinwall, D., Ernst, D., Bellg, A. J., Czajkowski, S., Breger, R., ... Orwig, D. (2005). A new tool to assess treatment fidelity and evaluation of treatment fidelity across 10 years of health behavior research. Journal of Consulting and Clinical Psychology, 73, 852–860. doi: 10.1037/0022-006x.73.5.852

5. Chamberlain, P. (2003). The Oregon multidimensional treatment foster care model: Features, outcomes, and progress in dissemination. Cognitive and Behavioral Practice, 10, 303–312. doi: 10.1016/S1077-7229(03)80048-2

6. Cornell, D. (2013). The Virginia Student Threat Assessment guidelines: An empirically supported violence prevention strategy. In N. Böckler, T. Seeger, P. Sitzer & W. Heitmeyer (Eds.), School shootings: International research, case studies, and concepts for prevention (pp. 379–400). New York, NY: Springer New York.

7. Cornell, D. G., & Sheras, P. L. (2006). Guidelines for responding to student threats of violence. Longmont, CO: Sopris West Educational Services.

8. Dane, A. V., & Schneider, B. H. (1998). Program integrity in primary and early secondary prevention: Are implementation effects out of control? Clinical Psychology Review, 18, 23–45. doi: 10.1016/S0272-7358(97)00043-3

9. Dariotis, J. K., Bumbarger, B. K., Duncan, L. G., & Greenberg, M. T. (2008). How do implementation efforts relate to program adherence? Examining the role of organizational, implementer, and program factors. Journal of Community Psychology, 36, 744–760. doi: 10.1002/jcop.20255

10. Domitrovich, C. E., Bradshaw, C. P., Poduska, J. M., Hoagwood, K., Buckley, J. A., Olin, S., & Ialongo, N. S. (2008). Maximizing the implementation quality of evidence-based preventive interventions in schools: A conceptual framework. Advances in School Mental Health Promotion, 1, 6–28. doi: 10.1080/1754730x.2008.9715730

11. Durlak, J. A., & DuPre, E. P. (2008). Implementation matters: A review of research on the influence of implementation on program outcomes and the factors affecting implementation. American Journal of Community Psychology, 41, 327–350. doi: 10.1007/s10464-008-9165-0

12. Dusenbury, L., Brannigan, R., Falco, M., & Hansen, W. B. (2003). A review of research on fidelity of implementation: Implications for drug abuse prevention in school settings. Health Education Research, 18, 237–256. doi: 10.1093/her/18.2.237

13. Dusenbury, L., Brannigan, R., Hansen, W. B., Walsh, J., & Falco, M. (2005). Quality of implementation: Developing measures crucial to understanding the diffusion of preventive interventions. Health Education Research, 20, 308–313. doi: 10.1093/her/cyg134

14. Fagan, A. A., & Mihalic, S. (2003). Strategies for enhancing the adoption of school-based prevention programs. Lessons learned from the Blueprints for Violence Prevention replications of the Life Skills Training Program. Journal of Community Psychology, 31, 235–254. doi: 10.1002/jcop.10045

15. Fixsen, D. L., Naoom, S. F., Blase, K. A., & Friedman, R. M. (2005). Implementation research: A synthesis of the literature. Tampa, FL: University of South Florida, Louis de la Parte Florida Mental Health Institute, The National Implementation Research Network.

16. Forgatch, M. S., Patterson, G. R., & DeGarmo, D. S. (2005). Evaluating fidelity: Predictive validity for a measure of competent adherence to the Oregon model of parent management training. Behavior Therapy, 36, 3–13. doi: 10.1016/S0005-7894(05)80049-8

17. Fox, C., & Harding, D. J. (2005). School shootings as organizational deviance. Sociology of Education, 78, 69–97. doi: 10.1177/003804070507800104

18. Gottfredson, D. C., & Gottfredson, G. D. (2002). Quality of school-based prevention programs: Results from a national survey. Journal of Research in Crime and Delinquency, 39, 3–35. doi: 10.1177/002242780203900101

19. Gould, L. F., Dariotis, J. K., Greenberg, M. T., & Mendelson, T. (2016). Assessing fidelity of implementation (FOI) for school-based mindfulness and yoga interventions: A systematic review. Mindfulness, 7, 5–33. doi: 10.1007/s12671-015-0395-6

20. Gould, L. F., Mendelson, T., Dariotis, J. K., Ancona, M., Smith, A. S. R., Gonzalez, A. A., & Greenberg, M. T. (2014). Assessing fidelity of core components in a mindfulness and yoga intervention for urban youth: Applying the CORE Process. New Directions for Youth Development, 2014(142), 59–81. doi: 10.1002/yd.20097

21. Harding, D. J., Fox, C., & Mehta, J. D. (2002). Studying rare events through qualitative case studies. Sociological Methods & Research, 31, 174–217. doi: 10.1177/0049124102031002003

22. Henggeler, S. W., Schoenwald, S. K., Liao, J. G., Letourneau, E. J., & Edwards, D. L. (2002). Transporting efficacious treatments to field settings: The link between supervisory practices and therapist fidelity in MST programs. Journal of Clinical Child & Adolescent Psychology, 31, 155–167. doi: 10.1207/s15374424jccp3102_02

23. Langman, P. F. (2009). Why kids kill: Inside the minds of school shooters. New York, NY: Palgrave Macmillan.

24. Leuschner, V., Bondü, R., Schroer-Hippel, M., Panno, J., Neumetzler, K., Fisch, S., ... Scheithauer, H. (2011). Prevention of homicidal violence in schools in Germany: The Berlin Leaking Project and the Networks Against School Shootings Project (NETWASS). New Directions for Youth Development, 2011(129), 61–78. doi: 10.1002/yd.387

25. Leuschner, V., Fiedler, N., Schultze, M., Ahlig, N., Göbel, K., Sommer, F., Scholl, J., Cornell, D., & Scheithauer, H. (2017). Prevention of targeted school violence by responding to students’ psychosocial crises: The NETWASS program. Child Development, 88, 68–82. doi: 10.1111/cdev.12690

26. Mowbray, C. T., Holter, M. C., Teague, G. B., & Bybee, D. (2003). Fidelity criteria: Development, measurement, and validation. American Journal of Evaluation, 24, 315–340. doi: 10.1177/109821400302400303

27. Newman, K. S., Fox, C., Roth, W., Mehta, J., & Harding, D. (2005). Rampage: The social roots of school shootings. New York, NY: Basic Books.

28. O’Toole, M. E. (1999). The school shooter: A threat assessment perspective. Quantico, VA: National Center for the Analysis of Violent Crime, Federal Bureau of Investigation.

29. Payne, A. A., Gottfredson, D. C., & Gottfredson, G. D. (2006). School predictors of the intensity of implementation of school-based prevention programs: Results from a national study. Prevention Science, 7, 225–237. doi: 10.1007/s11121-006-0029-2

30. Scheithauer, H., Leuschner, V., & NETWASS Research Group (2014). Krisenprävention in der Schule. Das NETWASS-Programm zur frühen Prävention schwerer Schulgewalt [Crisis prevention in schools. The NETWASS program for early prevention of severe school violence]. Stuttgart, Germany: Kohlhammer.

31. Slaughter, S. E., Hill, J. N., & Snelgrove-Clarke, E. (2015). What is the extent and quality of documentation and reporting of fidelity to implementation strategies: A scoping review. Implementation Science, 10, 129. doi: 10.1186/s13012-015-0320-3

32. Sommer, F., Leuschner, V., Fiedler, N., Madfis, E., & Scheithauer, H. (2020). The role of shame in developmental trajectories towards severe targeted school violence: An in-depth multiple case study. Aggression and Violent Behavior, 51, 101386. doi: 10.1016/j.avb.2020.101386

33. Sommer, F., Leuschner, V., & Scheithauer, H. (2014). Bullying, romantic rejection, and conflicts with teachers: The crucial role of social dynamics in the development of school shootings – A systematic review. International Journal of Developmental Science, 8, 3–24. doi: 10.3233/DEV-140129

34. Verlinden, S., Hersen, M., & Thomas, J. (2000). Risk factors in school shootings. Clinical Psychology Review, 20, 3–56. doi: 10.1016/S0272-7358(99)00055-0

35. Vossekuil, B. (2002). The final report and findings of the Safe School Initiative: Implications for the prevention of school attacks in the United States. Washington, DC: US Secret Service and US Department of Education.

36. Wandersman, A., Duffy, J., Flaspohler, P., Noonan, R., Lubell, K., Stillman, L., ... Saul, J. (2008). Bridging the gap between prevention research and practice: The interactive systems framework for dissemination and implementation. American Journal of Community Psychology, 41, 171–181. doi: 10.1007/s10464-008-9174-z

37. Webster-Stratton, C., & Herman, K. C. (2010). Disseminating Incredible Years Series early-intervention programs: Integrating and sustaining services between school and home. Psychology in the Schools, 47, 36–54. doi: 10.1002/pits.204